Zanders Testing Framework

Fail Fast, Fail Early, Fail Often

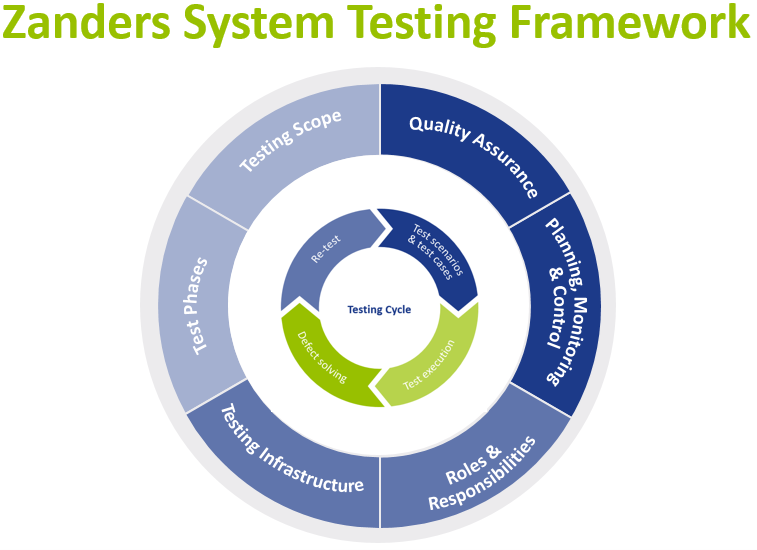

Testing is a critical activity in any implementation, and it has a significant impact on overall project success. However, testing often takes longer than planned, due to poor execution or unexpected issues that arise at a later project stage. Such problems usually occur when not all the variables necessary for a successful test journey have been considered.

To avoid these issues, we have defined a testing framework to help you identify an optimal testing strategy and approach. The framework depicts the building blocks that need to be present for effective and successful system testing, taking into account project size, project scope, project approach and resource availability.

This article describes each of these building blocks and outlines the factors and principles that help to achieve effective testing and a sound system implementation.

Testing Strategy – a top-down approach

Before looking at each area of the testing framework, the testing strategy needs to be defined. The strategy, based on project size and scope, should determine the key testing principles:

- High level scope

- Proposed phases and duration

- Business engagement and involvement

- Testing governance model

- Key benefits and risks mitigated by each testing phase

- When and how to determine exit criteria

The testing strategy will then underpin the detailed plan and definition of each one of our testing building blocks.

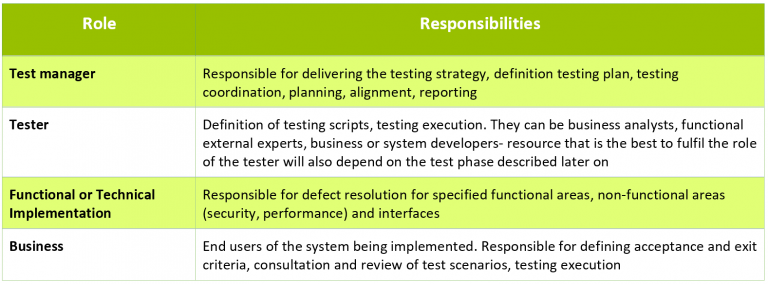

Roles & Responsibilities

Clear establishment of roles and responsibilities (RACI matrix) is key to effective testing. This ensures that the right profiles are assigned to each area, and that there is clear ownership for each deliverable. It is also essential for a realistic estimate of effort and a realistic plan. Some examples of roles and responsibilities include:

In smaller scale projects, the above roles may be combined under the same resource, while in larger projects more resources will be needed to fulfil a single role. One of the key principles with regard to resourcing is the early involvement of the business in testing, which provides the following benefits:

- Focus on business value items and critical areas, which ultimately saves cost and time and ensures early risk mitigation.

- Continuous improvement and assurance that the project’s overall objective and business case are met.

- Business embraces the process and organizational changes faster.

- Critical areas are tested sooner, and critical issues are solved earlier.

- Establishment of the business ownership of the system being implemented.

Planning, Monitoring & Control

Plans need to lay down the testing scope (test scenarios and test cases), the number and type of resources, and the time needed for preparation and execution in each test phase. They should also take into consideration the system development stage and other entry criteria, as well as an estimate of the number of defects and the defect resolution time.

For monitoring and control, it is essential that test execution itself is administered in a consistent manner. There are software solutions that can support the whole testing process and its administration. In any case, the progress of testing and the status of defect handling need to be trackable. At a minimum, it is recommended to track and gather evidence for the following:

- Test scenarios and test cases, including testers and timelines.

- Overview of defects, including root cause, severity classification, resolution timelines and person responsible for fixing the defect.

- Test execution evidence, including actual execution timelines, pass/fail status, screenshots, and other evidence.

It is also important to report daily on the progress of each test phase, as this enables project managers to mitigate deviations from the plan in a timely manner. Progress reporting provides transparency and helps to increase the confidence of the stakeholders involved.
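As an illustration of the tracking and daily reporting described above, the following is a minimal sketch of how a progress summary could be derived from tracked executions and defects. The record fields, status values and severity labels are assumptions made for this example and are not prescribed by the framework.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Execution:
    case_id: str
    tester: str
    status: str  # assumed values: "passed", "failed", "not run"


@dataclass
class Defect:
    defect_id: str
    severity: str  # assumed scale: "critical", "high", "medium", "low"
    owner: str     # person responsible for fixing the defect
    resolved: bool


def daily_progress(executions, defects):
    """Summarise execution progress and open defects for a daily report."""
    counts = Counter(e.status for e in executions)
    total = len(executions)
    executed = counts["passed"] + counts["failed"]
    open_by_severity = Counter(d.severity for d in defects if not d.resolved)
    return {
        "total_cases": total,
        "executed_pct": round(100 * executed / total, 1) if total else 0.0,
        "passed": counts["passed"],
        "failed": counts["failed"],
        "open_defects": dict(open_by_severity),
    }
```

In practice a test management tool would usually produce this kind of summary; the sketch only shows which tracked data the report depends on.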

Testing Scope

Testing should follow predetermined test scenarios and test cases. Test scenarios describe what functionality of the system is to be tested (e.g. input FX trade request, approve FX trade request). Test cases describe variations that should be tested, such as deal types or events (e.g. FX spot trade, FX forward trade, unwind).
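The relationship between scenarios and cases can be sketched as a simple test matrix. The example below uses the scenario and case names from the text; pairing every scenario with every case and the `TC-` identifier format are assumptions made for illustration, since in a real project not every combination will be relevant.

```python
from itertools import product

# Scenarios: what functionality is tested. Cases: which variations.
scenarios = ["input FX trade request", "approve FX trade request"]
cases = ["FX spot trade", "FX forward trade", "unwind"]


def build_test_matrix(scenarios, cases):
    """Return one entry per (scenario, case) combination."""
    return [
        {"id": f"TC-{i:03d}", "scenario": s, "case": c}
        for i, (s, c) in enumerate(product(scenarios, cases), start=1)
    ]


test_matrix = build_test_matrix(scenarios, cases)
for row in test_matrix:
    print(row["id"], "|", row["scenario"], "|", row["case"])
```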

All test scenarios and test cases should be linked to the initial business requirements and should be created, reviewed, and approved by the business. The business can help identify value items and risk areas that should take priority for testing. This allows priority and risk to be assigned to different functionalities, processes, and test scenarios.
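A minimal sketch of this traceability and prioritisation is shown below. The `PlannedTest` structure, the requirement identifiers and the numeric priority and risk scales (1 being the highest) are hypothetical and only serve to illustrate the idea.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PlannedTest:
    case_id: str
    requirement_id: Optional[str]  # None means not yet linked to a requirement
    priority: int  # assumed scale: 1 = highest business priority
    risk: int      # assumed scale: 1 = highest risk


def unlinked(tests):
    """Test cases that cannot be traced back to a business requirement."""
    return [t.case_id for t in tests if not t.requirement_id]


def execution_order(tests):
    """Order tests so that high-priority, high-risk items are run first."""
    return sorted(tests, key=lambda t: (t.priority, t.risk, t.case_id))
```

A check such as `unlinked` can be run before the business approves the test scope, so that gaps in traceability surface early.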

Quality Assurance

Quality assurance in testing is twofold. The project should assure both the quality of the system being implemented and the quality of the test execution.

Essentially, testing validates whether what was developed provides an adequate outcome. Expected results are based on the requirements laid out in the system design documentation. It is important that the business is involved in defining and reviewing the expected results.

Assuring the quality of test execution involves defining relevant criteria. First, entry criteria are set before each test phase, such as completion of the relevant (piece of) system development, environment setup, access to the environment for testers and availability of relevant data. Second, exit criteria need to be established, which will determine when the test phase can be successfully exited.
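Entry and exit criteria can be expressed as explicit checks. The sketch below is illustrative only: the entry criteria are taken from the examples above, while the exit rule (a minimum pass rate of 95% and no open critical defects) is an assumed example and should be agreed per project and test phase.

```python
# Entry criteria from the text, with an illustrative status per criterion.
entry_criteria = {
    "system development complete": True,
    "environment set up": True,
    "tester access granted": False,
    "test data available": True,
}


def unmet_entry_criteria(criteria):
    """Return the names of entry criteria that are not yet satisfied."""
    return [name for name, met in criteria.items() if not met]


def exit_criteria_met(passed, total, open_critical, min_pass_rate=0.95):
    """Assumed exit rule: sufficient pass rate and no open critical defects."""
    if total == 0:
        return False  # nothing executed, so the phase cannot be exited
    return passed / total >= min_pass_rate and open_critical == 0


print(unmet_entry_criteria(entry_criteria))
```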

Furthermore, acceptable variances and thresholds (e.g. legacy system vs new system portfolio valuations due to different valuation methodologies or rounding differences) need to be defined with the business beforehand and included in the expected results and acceptance criteria.
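A tolerance check of this kind could look as follows. This is a sketch under stated assumptions: the deal identifiers, the valuation figures and the default absolute tolerance of 0.01 are made up for illustration, and the actual thresholds must be agreed with the business.

```python
import math


def within_tolerance(legacy, new, abs_tol=0.01, rel_tol=0.0):
    """True if the two valuations differ by no more than the agreed tolerance."""
    return math.isclose(legacy, new, rel_tol=rel_tol, abs_tol=abs_tol)


def reconcile(legacy_vals, new_vals, abs_tol=0.01, rel_tol=0.0):
    """Return deals whose valuation is missing or outside tolerance."""
    breaks = {}
    for deal_id, legacy in legacy_vals.items():
        new = new_vals.get(deal_id)
        if new is None or not within_tolerance(legacy, new, abs_tol, rel_tol):
            breaks[deal_id] = (legacy, new)
    return breaks


# Hypothetical valuations per deal in the legacy and the new system.
legacy = {"FX-1": 1000.00, "FX-2": 250.00, "FX-3": 75.00}
new = {"FX-1": 1000.005, "FX-2": 250.50}
print(reconcile(legacy, new))
```

Only differences outside the agreed threshold are reported, which keeps rounding noise out of the defect list.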

Testing Infrastructure

In any system implementation, it is standard to operate with at least three system environments. It is also common to deploy multiple test environments, e.g. one environment for ST/SIT testing and another dedicated to the UAT test phase.

Test automation should be included, or at least considered, in any larger system implementation project. When selecting processes for test automation, we look for:

- Repetitive tests (e.g. deal capture, master data creation/change/deletion).

- Tests that tend to cause human error (e.g. market rate manual upload).

- Tests that require multiple data sets (e.g. master data uploads).

- Frequently used functionality that introduces high risk conditions (e.g. payment runs).

- Tests that are impossible to perform manually (e.g. performance and non-functional requirements tests).
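As a small illustration of automating a repetitive test that requires multiple data sets, the sketch below runs one check against a table of inputs using Python's standard `unittest` module. The `validate_deal` function is a hypothetical stand-in for the deal-capture validation of the system under test, and the data sets are invented for the example.

```python
import unittest


def validate_deal(deal):
    """Hypothetical stand-in for the system's deal-capture validation."""
    errors = []
    if deal.get("amount", 0) <= 0:
        errors.append("amount must be positive")
    if deal.get("buy_currency") == deal.get("sell_currency"):
        errors.append("currencies must differ")
    return errors


# Each entry pairs an input deal with the validation errors expected for it.
DATA_SETS = [
    ({"amount": 1_000_000, "buy_currency": "EUR", "sell_currency": "USD"}, []),
    ({"amount": 0, "buy_currency": "EUR", "sell_currency": "USD"},
     ["amount must be positive"]),
    ({"amount": 500, "buy_currency": "EUR", "sell_currency": "EUR"},
     ["currencies must differ"]),
]


class DealCaptureTest(unittest.TestCase):
    def test_data_sets(self):
        # subTest reports each failing data set separately.
        for deal, expected in DATA_SETS:
            with self.subTest(deal=deal):
                self.assertEqual(validate_deal(deal), expected)
```

Saved as a module, this can be run with `python -m unittest`; adding a new data set then only requires a new row in `DATA_SETS`.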

When analyzing what to automate, it is very important to take a strategic and broad view. If testing of some processes or functionalities can be automated, it is probable that after system deployment these processes or functionalities can also be automated in day-to-day execution.

Most of the existing tools that can be used for automation are not expensive, and in some cases in-house expertise can be used (e.g. RPA). Examples of such solutions are listed below:

- Robotic Process Automation (RPA) for process testing and validation.

- Standard automation testing tools (e.g. Selenium, TestComplete) for functional and non-functional testing.

- Non-functional requirements and performance testing tools (e.g. Splunk).

Some treasury management systems have also started to provide their own tools, embedded in their systems (e.g. scripting).

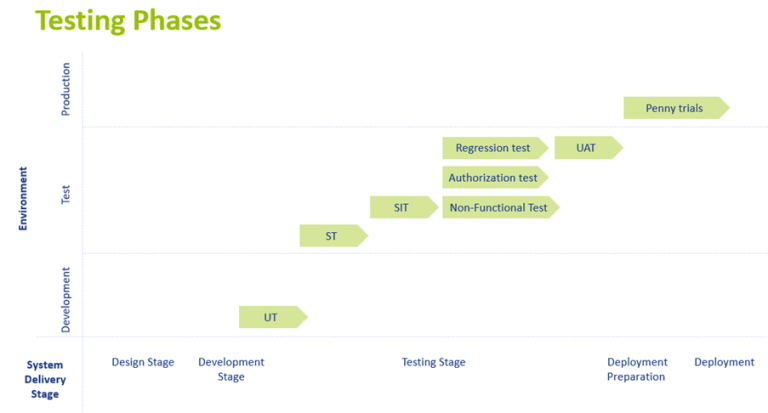

Testing Phases

There are several distinct testing phases, each with specific objectives. The figure below shows these testing phases across time (system delivery stage) and illustrates in what system environment the testing should be performed. This figure is based on the conventional Waterfall (phased) project approach.

UT (Unit Test)

This is the first test performed, and it follows straight after completion of system configuration and setup. The purpose of the UT is to provide initial confirmation of both technical and functional requirements. It tests a piece of functionality on a stand-alone basis.

ST (System Test)

System Testing focuses on a combination of unit tests (testing several build components) in a specific process flow and it is performed in the test environment. For example, for back-to-back FX dealing, ST will consist of FX trade request input, trade request approval, FX trade execution, deal creation and mirroring, payment request creation and accounting postings.
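The back-to-back FX dealing flow above can be sketched as an ordered list of steps, where a failure blocks the steps that depend on it. The `execute_step` callback is a hypothetical hook that would perform and verify a single step in the test environment; the status labels are assumptions for this example.

```python
# Process steps taken from the back-to-back FX dealing example in the text.
FLOW = [
    "FX trade request input",
    "trade request approval",
    "FX trade execution",
    "deal creation and mirroring",
    "payment request creation",
    "accounting postings",
]


def run_flow(steps, execute_step):
    """Run steps in order; stop at the first failure and mark the rest blocked."""
    results = []
    for step in steps:
        ok = execute_step(step)
        results.append((step, "passed" if ok else "failed"))
        if not ok:
            break
    # Steps after a failure cannot be tested until the defect is fixed.
    results.extend((step, "blocked") for step in steps[len(results):])
    return results
```

Recording blocked steps separately from failed ones helps keep defect counts and progress reporting accurate.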

SIT (System Integration Test)

The purpose of SIT is to test end-to-end processes, including interfaces with connected systems and applications. SIT is also performed in the test environment, and it requires the test environments of the connected systems and applications to be available.

Authorizations test

Most systems require authorization setup for different system users, in addition to the workflow configuration. Authorizations determine what activities a specific user can perform in the system.
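An authorizations test compares what each role should be able to do with what the system actually allows, including negative checks for activities a role must not perform. The sketch below assumes hypothetical role and activity names and an `is_allowed` callback that would query the system under test.

```python
# Expected authorizations per role (hypothetical roles and activities).
EXPECTED = {
    "dealer": {"input_trade"},
    "approver": {"approve_trade"},
    "back_office": {"create_payment"},
}
ACTIVITIES = {"input_trade", "approve_trade", "create_payment"}


def authorization_findings(expected, is_allowed, activities):
    """Compare expected and actual access for every role and activity."""
    findings = []
    for role in sorted(expected):
        for activity in sorted(activities):
            should = activity in expected[role]
            actual = is_allowed(role, activity)
            if should != actual:
                kind = "missing access" if should else "excess access"
                findings.append((role, activity, kind))
    return findings
```

Checking every role against every activity covers both positive cases (access that should exist) and negative cases (access that should be denied).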

Non-Functional Test

Non-Functional Testing is a technical test, typically executed by a technical resource. It tests system performance, security and other non-functional requirements.

UAT (User Acceptance Test)

UAT is one of the last tests before technical and business go-live. It is a functional test, and it should be executed by the key users of the system. Generally, this is the longest test period. UAT test scenarios include workflow testing, authorization testing, negative test cases and business continuity plan activities. This test phase also includes the largest variation of test cases.

Penny trials phase

This testing phase is mostly applicable when the project also includes commercial or trade settlements. Penny trials are part of the system deployment preparation and they are performed in the production ‘live’ environment. The actual end-to-end deal process, including payment and confirmation, is executed with low-value transactions (e.g. a $10 spot deal).

Parallel Run

It can be beneficial to operate a system in parallel run mode for at least some period. A parallel run replicates day-to-day activities in the system being implemented, in parallel with the legacy system and processes.

Adopting System Testing Framework in Agile Projects

In recent years, organizations have increasingly adopted an agile project approach for system implementation. An agile approach splits system development into smaller products that can be incrementally deployed (in a production or testing environment).

The system testing framework can and should be adopted in an agile or mixed project approach as well. Most of the building blocks for successful testing described here are agnostic to the project approach, provided that some of the specific factors are tailored to the agile format. As system delivery is split into smaller deployable products in an agile approach, test phases should follow the same design. That means that instead of a single SIT period, SIT would be executed after completion of each incremental system development (e.g. back-to-back dealing). Nevertheless, it is worth noting that agile projects also need several testing phases, executed by different resources.

Conclusion: Failure is success in progress

There is no one-size-fits-all approach to testing. The project size and scope, resource availability, internal and external skillsets and project methodology will determine how to approach each testing phase. Nevertheless, having the right system testing framework to guide you through a project is essential to any successful project. It will ensure that you fail fast, early and often during the early testing phases, reducing go-live delivery and operational risks.