
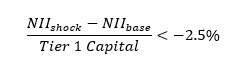

Ensuring Robust Controls and Checks in SAP TRM with a Kaizen Approach

This article is intended for finance, risk, and compliance professionals with business and system-integration knowledge of SAP, but it also includes contextual guidance for broader audiences.
