Redefining Credit Portfolio Strategies: Balancing Risk & Reward in a Volatile Economy

This article delves into a three-step approach to portfolio optimization by harnessing the power of advanced data analytics and state-of-the-art quantitative models and tools.

In today's dynamic economic landscape, optimizing portfolio composition to withstand challenges such as inflation, slower growth, and geopolitical tensions is more important than ever. These factors can significantly influence consumer behavior and loan performance. Navigating this uncertain environment requires banks to strike a careful balance between managing credit risk and maintaining profitability.

Why does managing your risk-reward profile matter?

Quantitative techniques are an essential tool for optimizing your portfolio’s risk-reward profile, which is often managed using inefficient approaches.

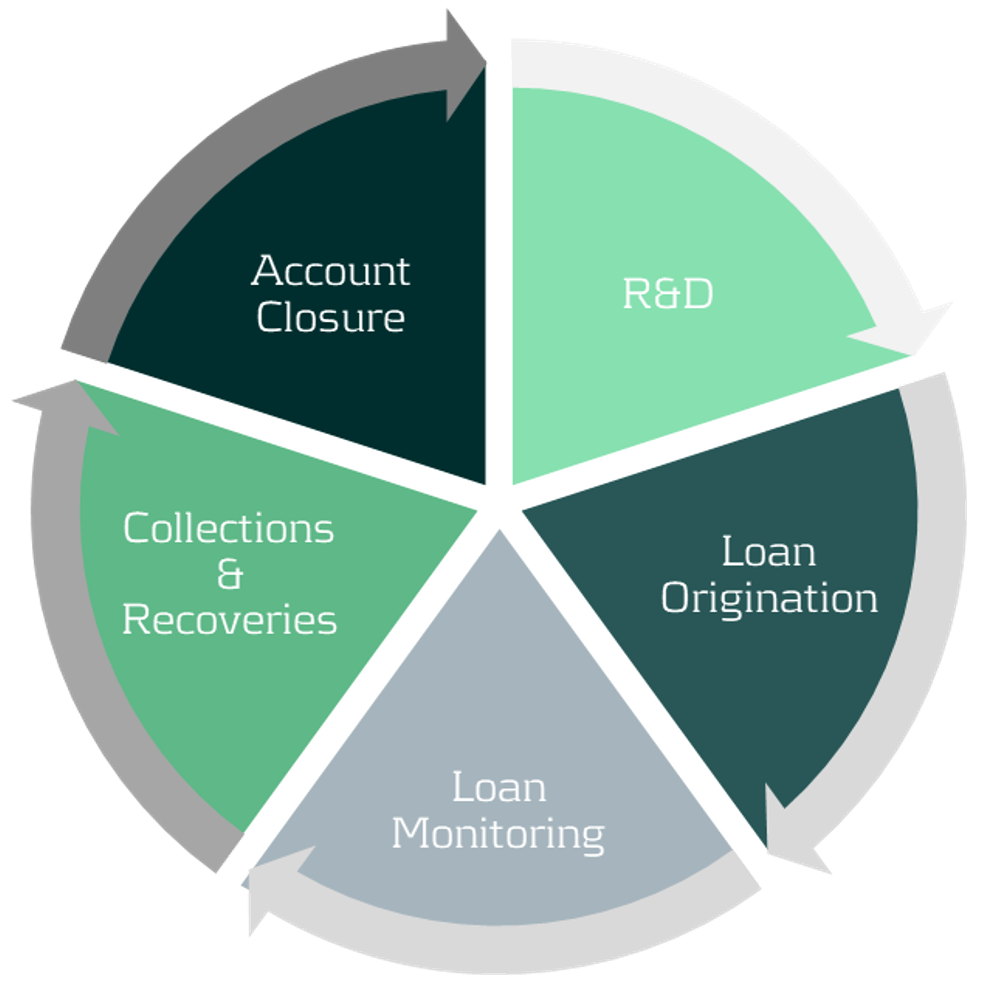

Existing models and procedures across the credit lifecycle, especially those relating to loan origination and account management, may not be optimized to accommodate current macro-economic challenges.

Figure 1: Credit lifecycle.

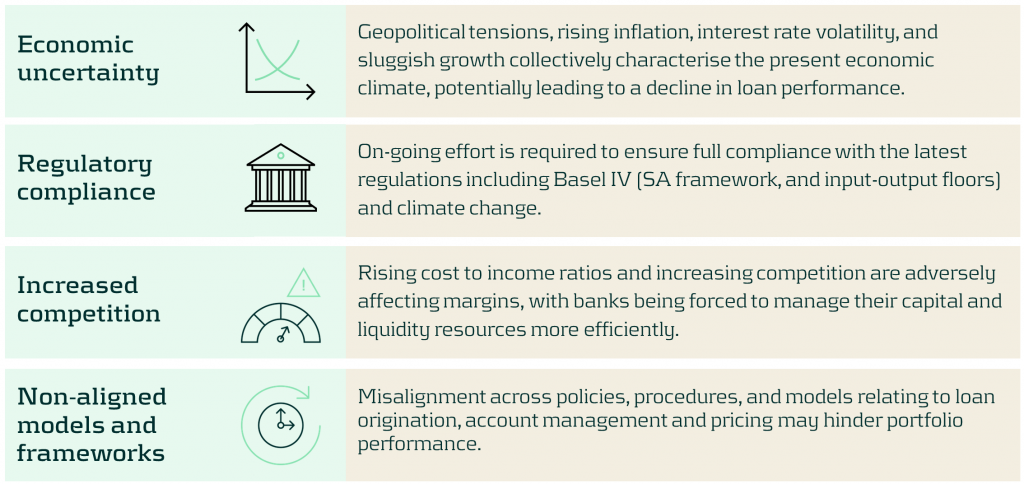

Current challenges facing banks

Some of the key challenges banks face when balancing credit risk and profitability include:

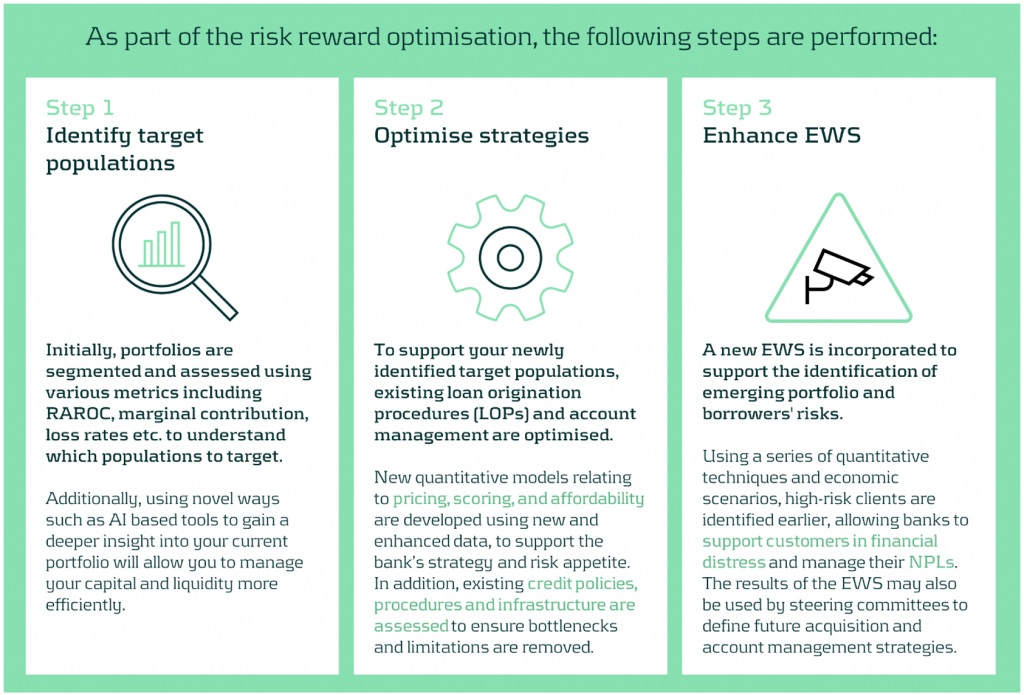

Our approach to optimizing your risk reward profile

Our optimization approach consists of a holistic three-step diagnosis of your current practices, designed to support your strategy and encourage alignment across business units and processes.

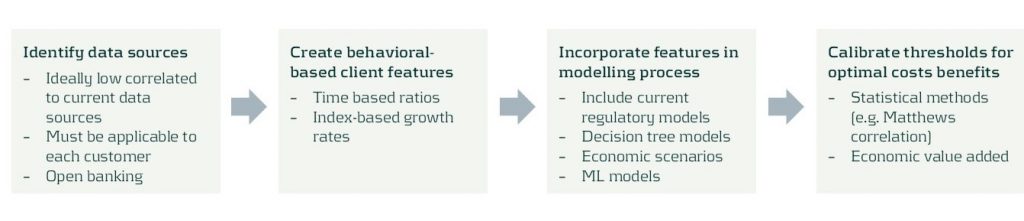

The first step involves understanding your current portfolio(s) using a variety of segmentation methodologies and metrics. The second step implements the necessary changes once your primary target populations have been identified; this may include reassessing your models and strategies across the loan origination and account management processes. Finally, a new state-of-the-art Early Warning System (EWS) can be deployed to identify emerging risks and take proactive action where necessary.

A closer look at redefining your target populations

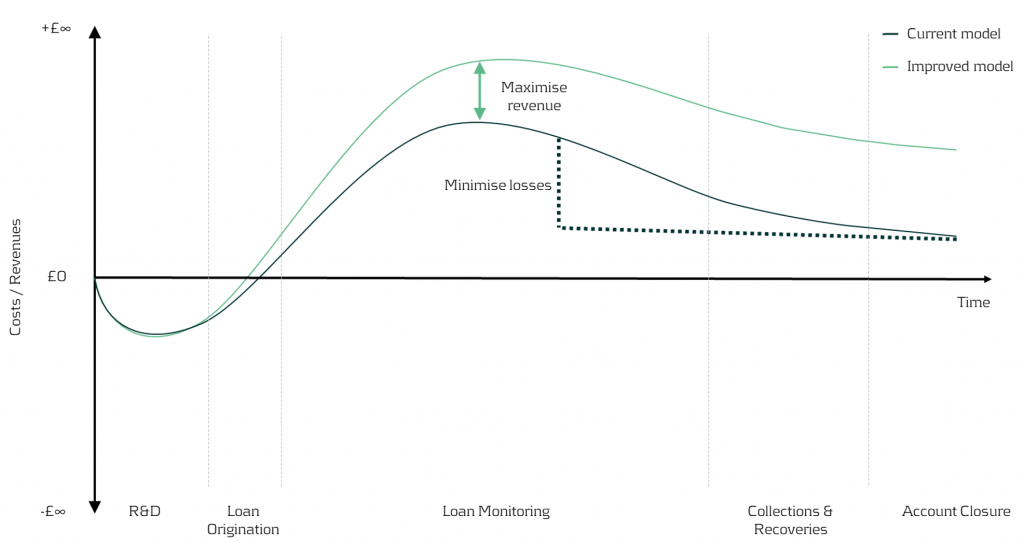

With the proliferation of advanced data analytics, banks are now better positioned to identify profitable, low-risk segments. Machine Learning (ML) methodologies such as k-means clustering, neural networks, and Natural Language Processing (NLP) enable effective customer grouping, behavior forecasting, and market sentiment analysis.

Risk-based pricing remains critical for acquisition strategies: assessing how sensitive each segment is to different pricing strategies helps to maximize revenue and reduce credit losses.

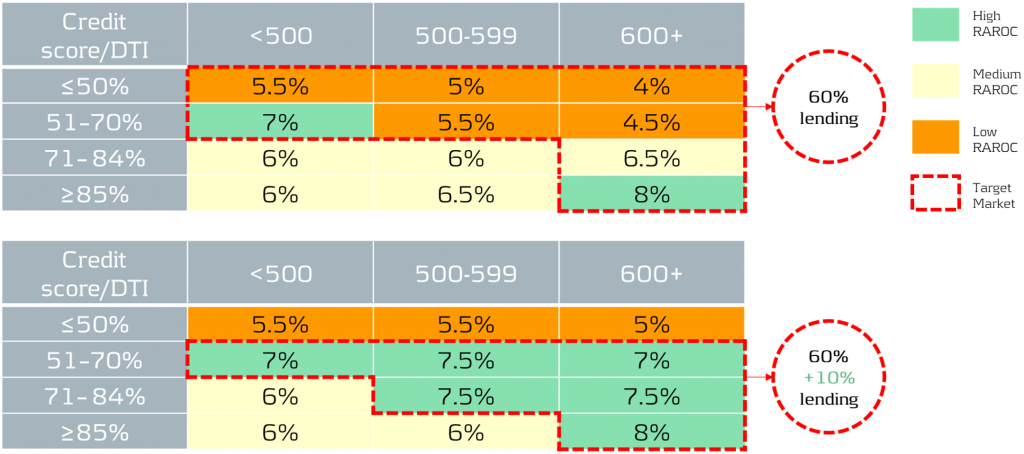

Figure 2: The illustration above shows the impact on earnings throughout the credit lifecycle of redefining the target populations and applying different pricing strategies.

In our simplified example, based on the risk-adjusted return on capital (RAROC) metric applied to an unsecured loans portfolio, we take a two-step approach:

1- Identify target populations by comparing RAROC across different combinations of credit scores and debt-to-income (DTI) ratios. This helps identify the most capital efficient segments to target.

2- Assess the sensitivity of RAROC to different pricing strategies to find the price points that maximize profit over a selected period (in this scenario, a 5-year time horizon).
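The two steps can be sketched in Python. This is a minimal illustration, not the model behind Figure 3: it assumes RAROC = (revenue - operating costs - expected loss) / economic capital, approximates economic capital as a fixed share of exposure, uses a hypothetical linear demand curve for the pricing step, and ignores discounting over the 5-year horizon. All segment names, parameters and figures are hypothetical.

```python
from dataclasses import dataclass

FUNDING_COST = 0.03  # assumed annual funding cost (share of exposure)
OPEX_RATE = 0.01     # assumed annual operating cost (share of exposure)


@dataclass
class Segment:
    name: str             # e.g. a credit score band x DTI band (hypothetical)
    pd: float             # annual probability of default
    lgd: float            # loss given default
    exposure: float       # average exposure per loan
    capital_ratio: float  # economic capital as a share of exposure (assumed)


def risk_adjusted_return(seg: Segment, rate: float) -> float:
    """Annual revenue minus operating costs minus expected loss, per loan."""
    revenue = (rate - FUNDING_COST) * seg.exposure
    costs = OPEX_RATE * seg.exposure
    expected_loss = seg.pd * seg.lgd * seg.exposure
    return revenue - costs - expected_loss


def raroc(seg: Segment, rate: float) -> float:
    """Step 1: RAROC of a segment at a given client rate."""
    return risk_adjusted_return(seg, rate) / (seg.capital_ratio * seg.exposure)


def best_rate(seg, rates, base_rate, base_volume, elasticity, years=5):
    """Step 2: rate maximising cumulative risk-adjusted profit over `years`.

    Hypothetical linear demand: each percentage point above `base_rate`
    loses `elasticity` percent of `base_volume`. Without discounting,
    `years` scales the profit but does not change which rate is best.
    """
    def total_profit(rate):
        volume = max(0.0, base_volume * (1 - elasticity * (rate - base_rate)))
        return years * volume * risk_adjusted_return(seg, rate)
    return max(rates, key=total_profit)


prime = Segment("score 700+ / DTI < 30%", pd=0.01, lgd=0.75, exposure=10_000, capital_ratio=0.08)
weak = Segment("score < 600 / DTI > 40%", pd=0.08, lgd=0.75, exposure=10_000, capital_ratio=0.15)
print(round(raroc(prime, 0.09), 3), round(raroc(weak, 0.09), 3))  # 0.531 -0.067
print(best_rate(prime, [0.07, 0.08, 0.09, 0.10, 0.11, 0.12, 0.13], 0.09, 1_000, 10))  # 0.12
```

At the same 9% rate the weak segment destroys value while the prime segment clears any plausible hurdle rate, which is the kind of comparison that drives the reallocation described in Figure 3.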

Figure 3: The top table shows the current portfolio mix and performance, while the bottom table illustrates the effects of adjusting the pricing and acquisition strategy. By redefining the target populations and changing the pricing strategy, it is possible to reallocate capital to the most profitable segments whilst staying within credit risk appetite. For example, 60% of current lending goes to a mix of low- to high-RAROC segments, whereas under the proposed strategy, 70% of total capital is allocated to the highest-RAROC segments.

Uncovering risks and seizing opportunities

The current state of Early Warning Systems

Many organizations rely on regulatory models and standard risk triggers (e.g., the number of customers 30 days past due, the NPL ratio) to set their EWS thresholds. Whilst this may be a good starting point, traditional models and tools often miss timely deteriorations and valuable opportunities, as they typically use limited and/or outdated data features.

Target state of Early Warning Systems

Leveraging timely and relevant data, combined with next-generation AI and machine learning techniques, enables early identification of customer deterioration, resulting in prompt intervention and significantly lower impairment costs and NPL ratios.
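To illustrate why more timely data helps, here is a minimal rule-based sketch comparing a backward-looking 30-days-past-due trigger with triggers on more timely account data. It is not an AI/ML model: the field names, the 20-point utilisation jump and the 50% income drop are hypothetical thresholds chosen for illustration. A learned model would replace these hand-set rules, but measuring when each approach first flags a customer works the same way.

```python
from dataclasses import dataclass


@dataclass
class CustomerMonth:
    days_past_due: int
    utilisation: float    # share of the credit limit drawn (0-1)
    salary_inflow: float  # income credited to the account this month


def traditional_trigger(history):
    """Backward-looking rule: flag once the customer is 30+ days past due."""
    return history[-1].days_past_due >= 30


def early_triggers(history, util_jump=0.20, income_drop=0.5, window=3):
    """Hypothetical rules on more timely account data; returns the reasons that fire."""
    reasons = []
    if len(history) > window:
        now, then = history[-1], history[-1 - window]
        if now.utilisation - then.utilisation >= util_jump:
            reasons.append("utilisation rising")
        prior = [m.salary_inflow for m in history[-1 - window:-1]]
        avg_prior = sum(prior) / len(prior)
        if avg_prior > 0 and now.salary_inflow < (1 - income_drop) * avg_prior:
            reasons.append("income drop")
    if traditional_trigger(history):
        reasons.append("30+ days past due")
    return reasons


def first_flag_month(history, rule):
    """1-based month in which `rule` first fires on the history so far, else None."""
    for t in range(1, len(history) + 1):
        if rule(history[:t]):
            return t
    return None


months = [
    CustomerMonth(0, 0.30, 3000), CustomerMonth(0, 0.32, 3000),
    CustomerMonth(0, 0.35, 3000), CustomerMonth(0, 0.45, 0),  # salary stops
    CustomerMonth(0, 0.60, 0), CustomerMonth(15, 0.75, 0),
    CustomerMonth(35, 0.80, 0),
]
print(first_flag_month(months, traditional_trigger))                # 7
print(first_flag_month(months, lambda h: bool(early_triggers(h))))  # 4
```

In this made-up example the income signal flags the customer three months before the arrears trigger, which is the window in which proactive support is still possible.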

Furthermore, an effective EWS framework empowers your organization to spot new growth areas, capitalize on cross-selling opportunities, and enhance existing strategies, driving significant benefits to your P&L.

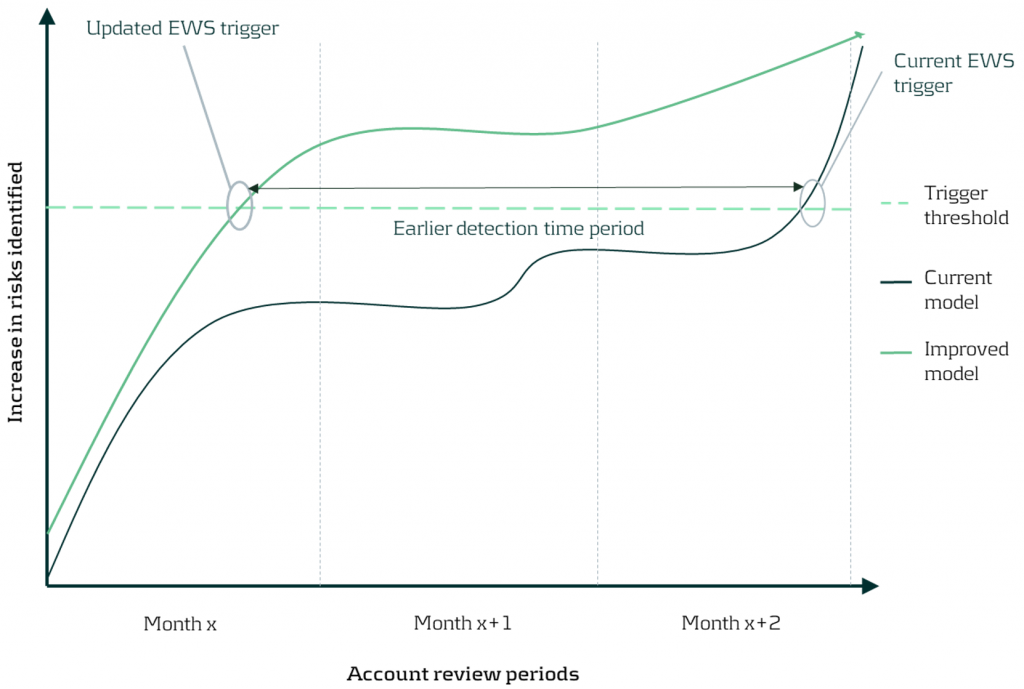

Figure 4: By updating the early warning triggers using new, timely data and advanced techniques, detection of customer deterioration can be greatly improved, enabling firms to proactively support clients and strengthen the firm’s financial position.

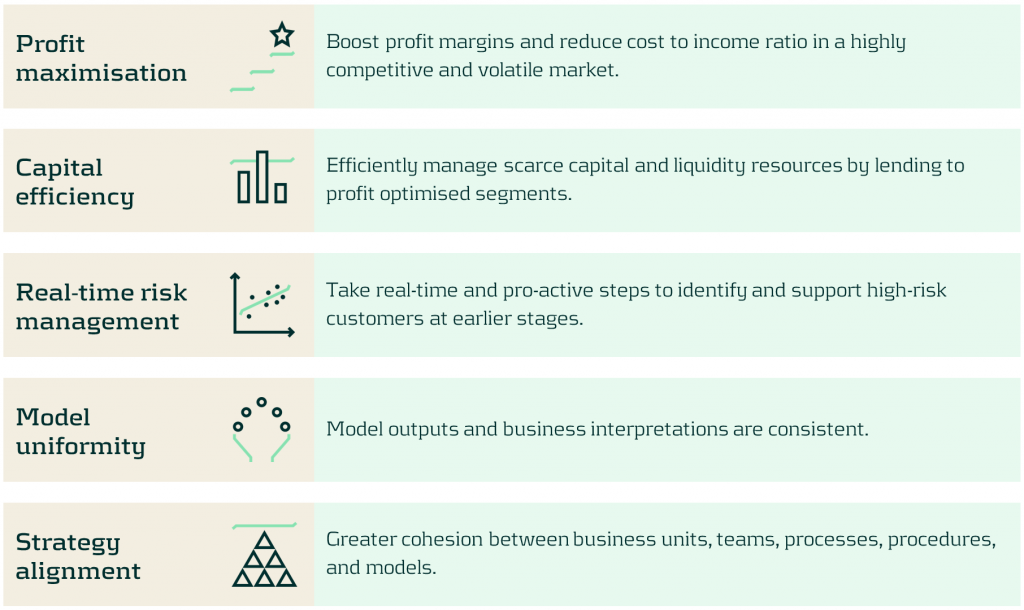

Discover the benefits of optimizing your portfolios

Discover the benefits of optimizing your portfolios’ risk-reward profile using our comprehensive approach, as we turn today’s challenges into tomorrow’s advantages. Such benefits include:

Conclusion

In today's rapidly evolving market, the need for sophisticated credit risk portfolio management is more critical than ever. With our comprehensive approach, banks are empowered not merely to weather economic uncertainties, but to thrive within them by striking the optimal risk-reward balance. By leveraging advanced data analytics and deploying quantitative tools and models, we help institutions position themselves strategically for sustainable growth and comply with increasing regulatory demands, especially with the advent of Basel IV. Contact us to turn today’s challenges into tomorrow’s opportunities.

For more information on this topic, contact Martijn de Groot (Partner) or Paolo Vareschi (Director).

Savings modelling series – How to determine core non-maturing deposit volume?

Low interest rates, decreasing margins and regulatory pressure: banks are faced with a variety of challenges regarding non-maturing deposits. Accurate and robust models for non-maturing deposits are more important than ever. These complex models depend largely on a number of modelling choices. In the savings modelling series, Zanders lays out the main modelling choices, based on our experience at a variety of Tier 1, 2 and 3 banks.

Identifying the core of non-maturing deposits has become increasingly important for European banking Risk and ALM managers. This is especially true for retail banks whose funding mostly comprises deposits. In recent years, the concept of core deposits has been formalized by the Basel Committee and included in various regulatory standards. European regulators are considering a requirement to disclose the core NMD portion to regulators and possibly to public stakeholders. Despite these developments, many banks still wonder: what are core deposits, and how do I identify them?

FINDING FUNDING STABILITY: CORE PORTION OF DEPOSITS

Behavioural risk profiles for client deposits can differ considerably per bank and portfolio. A portion of deposits can be stable in volume and price, whereas other portions are volatile and sensitive to market rate changes. Before banks determine the behavioural (investment) profile for these funds, they should analyse which deposits are suitable for long-term investment. This portion is often labelled as core deposits.

Basel standards define core deposits as balances that are highly likely to remain stable in terms of volume and are unlikely to reprice after interest rate changes. Behaviour models can vary a lot between (or even within) banks and are hard to compare. A simple metric such as the proportion of core deposits should make a comparison easier. The core breakdown alone should be sufficient to substantiate differences in the investment and risk profiles of deposits.

Regulatory guidelines do not define the exact confidence level and horizon to be used for core analysis. Therefore, banks need to formulate an interpretation of the regulatory guidance and set the assumptions on which their analysis is based. A good definition of core deposit volume is tailored to the bank’s deposit behavioural risk model. Ideally, the core percentage can be calculated directly from behavioural model parameters. ALM and Risk managers should start by reviewing their internal behavioural models: how are volume and pricing stability modelled, and how are they translated into investment restrictions?
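As an illustration of deriving a core percentage directly from behavioural model parameters, the sketch below assumes a hypothetical volume model in which the log of the deposit balance follows a random walk with monthly drift `mu` and volatility `sigma`. The core share is then the balance ratio that is still exceeded, at the chosen confidence level, at the end of the chosen horizon. It covers volume stability only (pricing stability would need a separate repricing criterion), and the confidence level and horizon are exactly the assumptions each bank has to set itself.

```python
from math import exp, sqrt
from statistics import NormalDist


def core_share(mu, sigma, horizon_months, confidence):
    """Share of today's balance still present after `horizon_months` at `confidence`.

    Hypothetical volume model: log-balance is a random walk with monthly drift
    `mu` and monthly volatility `sigma`, so log(B_T / B_0) ~ N(mu*T, sigma^2*T).
    """
    z = NormalDist().inv_cdf(confidence)
    lower_quantile = exp(mu * horizon_months - z * sigma * sqrt(horizon_months))
    return min(1.0, lower_quantile)  # core cannot exceed today's balance


print(round(core_share(mu=0.0, sigma=0.02, horizon_months=12, confidence=0.99), 3))  # 0.851
```

Raising the confidence level or lengthening the horizon lowers the core share, which is why these two choices matter so much. This version looks only at the balance at the horizon; a stricter variant would use the lowest balance over the whole horizon.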

SAVINGS MODELLING SERIES

This short article is part of the Savings Modelling Series, a series of articles covering five hot topics in NMD for banking risk management. The other articles in this series are:

Savings modelling series – Calibrating models: historical data or scenario analysis?

One of the puzzles for Risk and ALM managers at banks in recent years has been determining the interest rate risk profile of non-maturing deposits. Banks need to substantiate the modelling choices and parametrization of their deposit models to internal and external validation as well as to regulatory bodies. Traditionally, banks used historically observed relationships between behavioural deposit components and their drivers for the parametrization. Because of the low interest rate environment and outlook, historical data has lost (part of) its forecasting power. ALM and Risk functions are considering alternatives such as forward-looking scenario analysis, but what should they focus on when using this approach?

THE PROBLEM WITH USING HISTORICAL OBSERVATIONS

In traditional deposit models, it is difficult to capture the complex nature of deposit client rate and volume dynamics. On the one hand, Risk and ALM managers believe that historical observations are not necessarily representative of the coming years. On the other hand, it is hard to ignore observed behaviour, especially if interest rates return to historical levels. To overcome these issues, model forecasts should be challenged with sound, logical reasoning.

In many European markets, the degree to which customer deposit rates track market rates (repricing) has decreased over the last decade, because many banks hesitate to lower client rates below zero. Risk and ALM managers should analyse to what extent this historically decreasing repricing pattern is representative of the coming years and whether it aligns with the bank’s pricing strategy. Given the strategic relevance of the topic, this discussion often requires approval from senior management.

IMPROVING MODELS THROUGH FORWARD LOOKING INFORMATION

Common sense and understanding deposit model dynamics are an integral part of the modelling process (read our interview with ING experts here). Best-practice deposit modelling includes forming a comprehensive set of interest rate scenarios that can be translated into a business strategy. To capture the range of possible future market developments, both downward and upward scenarios should be included. The slope of the interest rate scenarios can be adjusted to reflect gradual changes over time, or a sudden steepening or flattening of the curve. Pricing experts should be consulted to determine the expected deposit rate developments over time for each of the interest rate scenarios. Deposit model parameters should then be chosen such that the model’s estimates, on average, provide the best fit to the scenario analysis.
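The final calibration step can be sketched as a least-squares fit pooled across scenarios. The deposit rate model here is hypothetical (a spread `a` plus partial pass-through `b` of the market rate, floored at zero), the expert paths are made-up inputs standing in for the pricing experts' expectations, and a plain grid search stands in for whatever optimiser a bank would actually use.

```python
from itertools import product


def model_rate(a, b, market, floor=0.0):
    """Hypothetical deposit rate model: spread + partial pass-through, with a floor."""
    return max(floor, a + b * market)


def calibrate(scenarios, a_grid, b_grid):
    """Return the (a, b) pair with the lowest mean squared error over all scenarios."""
    def mse(params):
        a, b = params
        errors = [(model_rate(a, b, m) - expert) ** 2
                  for market_path, expert_path in scenarios.values()
                  for m, expert in zip(market_path, expert_path)]
        return sum(errors) / len(errors)
    return min(product(a_grid, b_grid), key=mse)


# Market rate path and expected client rate path (in %) per scenario, all hypothetical
scenarios = {
    "upward": ([0.0, 0.5, 1.0, 1.5, 2.0], [0.0, 0.15, 0.4, 0.65, 0.9]),
    "downward": ([0.0, -0.5, -1.0, -1.0, -1.0], [0.0, 0.0, 0.0, 0.0, 0.0]),
    "flat": ([0.5, 0.5, 0.5, 0.5, 0.5], [0.15, 0.15, 0.15, 0.15, 0.15]),
}
a_grid = [i / 20 for i in range(-10, 11)]  # spreads from -0.5% to +0.5%
b_grid = [i / 20 for i in range(0, 21)]    # pass-through from 0 to 1
print(calibrate(scenarios, a_grid, b_grid))  # (-0.1, 0.5)
```

Because all scenarios enter one loss function, the parameters cannot be tuned to a single rate path; the floor is what lets one parameter set fit both the upward and the downward scenario.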

When going through this process in your own organisation, be aware that the effects of consulting pricing experts go both ways. Risk and ALM managers will improve deposit models by using forward-looking business opinion and the business’ understanding of the market will improve through model forecasts.

ECL calculation methodology

Credit Risk Suite – Expected Credit Losses Methodology article

INTRODUCTION

The IFRS 9 accounting standard has been effective since 2018 and affects both financial institutions and corporates. Although the IFRS 9 standards are principle-based and simple, their design and implementation can be challenging. In particular, the difficulties introduced by incorporating forward-looking information in the loss estimate should not be underestimated. Drawing on hands-on experience and our consultants’ more than two decades of credit risk expertise, Zanders developed the Credit Risk Suite (CRS). The CRS is a calculation engine that determines transaction-level, IFRS 9-compliant provisions for credit losses. It was designed specifically to overcome the difficulties that our clients face in their IFRS 9 provisioning. In this article, we elaborate on the methodology of the ECL calculations performed in the CRS.

An industry best-practice approach for ECL calculations requires four main ingredients:

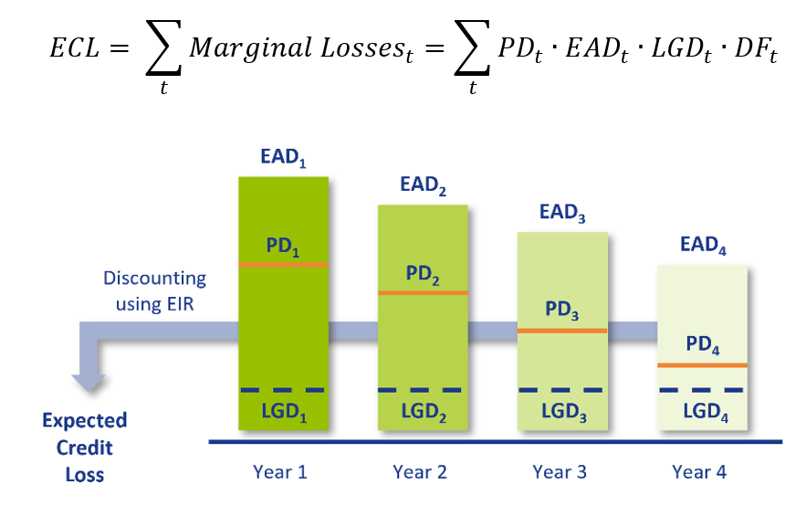

- Probability of Default (PD): The probability that a counterparty will default at a certain point in time. This can be a one-year PD, i.e. the probability of defaulting within the next year, or a lifetime PD, i.e. the probability of defaulting before the maturity of the contract. A lifetime PD can be split into marginal PDs, which represent the probability of default in a specific period.

- Exposure at Default (EAD): The exposure remaining until maturity of the contract, based on the current exposure, contractual and expected redemptions, and future drawings on remaining commitments.

- Loss Given Default (LGD): The percentage of EAD that is expected to be lost in case of default. The LGD differs with the level of collateral, guarantees and subordination associated with the financial instrument.

- Discount Factor (DF): The expected loss per period is discounted to present value terms using discount factors. Discount factors according to IFRS 9 are based on the effective interest rate.

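The overall ECL calculation is performed as follows and illustrated by the diagram below. For each projected period $t$ up to the horizon $T$ (one year for a one-year ECL, the remaining lifetime of the contract for a lifetime ECL), the four ingredients are multiplied and the results are summed:

$$\mathrm{ECL} = \sum_{t=1}^{T} \mathrm{PD}_t \times \mathrm{LGD}_t \times \mathrm{EAD}_t \times \mathrm{DF}_t$$

where $\mathrm{PD}_t$ is the marginal PD for period $t$.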

MODEL COMPONENTS

The CRS consists of multiple components and underlying models that calculate each of these ingredients separately. The separate components are then combined into ECL provisions, which can be used for IFRS 9 accounting purposes. In addition, the CRS contains a customizable module for scenario-based Forward-Looking Information (FLI), and the solution allocates assets to one of the three IFRS 9 stages. In the component approach, projections of PDs, EADs and LGDs are constructed separately. This component-based setup makes the CRS customizable and easy to implement. The methodology applied for each of the components is described below.

PROBABILITY OF DEFAULT

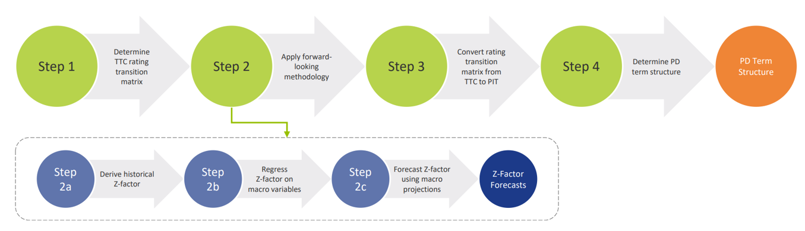

For each projected month, the PD is derived from the PD term structure that is relevant for the portfolio as well as the economic scenario. This is done using the PD module. The purpose of this module is to determine forward-looking Point-in-Time (PIT) PDs for all counterparties. This is done by transforming Through-the-Cycle (TTC) rating migration matrices into PIT rating migration matrices. The TTC rating migration matrices represent the long-term average annual transition PDs, while the PIT rating migration matrices are annual transition PDs adjusted to the current (expected) state of the economy. The PIT PDs are determined in the following steps:

- Determine TTC rating transition matrices: To be able to calculate PDs for all possible maturities, an approach based on rating transition matrices is applied. A transition matrix specifies the probability of migrating from a given rating to another rating within one year. The TTC rating transition matrices can be constructed using, e.g., historical default data provided by the client or by external rating agencies.

- Apply forward-looking methodology: IFRS 9 requires the state of the economy to be reflected in the ECL. In the CRS, the state of the economy is incorporated in the PD by applying a forward-looking methodology. The forward-looking methodology in the CRS is based on a ‘Z-factor approach’, where the Z-factor represents the state of the macroeconomic environment. Essentially, a relationship is determined between historical default rates and specific macroeconomic variables. The approach consists of the following sub-steps:

- Derive historical Z-factors from (global or local) historical default rates.

- Regress historical Z-factors on (global or local) macro-economic variables.

- Obtain Z-factor forecasts using macro-economic projections.

- Convert rating transition matrices from TTC to PIT: In this step, the forward-looking information is used to convert TTC rating transition matrices to point-in-time (PIT) rating transition matrices. The PIT transition matrices can be used to determine rating transitions in various states of the economy.

- Determine PD term structure: In the final step of the process, the rating transition matrices are iteratively applied to obtain a PD term structure in a specific scenario. The PD term structure defines the PD for various points in time.

The result of this is a forward-looking PIT PD term structure for all transactions which can be used in the ECL calculations.
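The TTC-to-PIT conversion and the PD term structure can be sketched as follows, again assuming a Vasicek-style one-factor shift (the CRS's own conversion is not specified in the article). Ratings are ordered best to worst with default as the last, absorbing state; for each row, the probability of ending in a given state or worse is converted to a normal threshold and shifted by Z. In this form Z = 0 does not reproduce the TTC matrix exactly: the TTC matrix corresponds to the average over all values of Z. The migration matrix and Z path are hypothetical.

```python
from math import sqrt
from statistics import NormalDist

N = NormalDist()


def _shift(cum_prob, z, rho):
    """Shift a cumulative 'this state or worse' probability to its PIT value."""
    if cum_prob <= 0.0 or cum_prob >= 1.0:
        return min(max(cum_prob, 0.0), 1.0)
    return N.cdf((N.inv_cdf(cum_prob) - sqrt(rho) * z) / sqrt(1 - rho))


def ttc_to_pit(matrix, z, rho):
    """Convert a one-year TTC migration matrix into a PIT matrix for factor value z."""
    pit = []
    for row in matrix:
        k = len(row)
        cum = [1.0] + [_shift(sum(row[j:]), z, rho) for j in range(1, k)] + [0.0]
        pit.append([cum[j] - cum[j + 1] for j in range(k)])
    return pit


def mat_mul(a, b):
    return [[sum(a[i][m] * b[m][j] for m in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def pd_term_structure(matrix, z_path, rho, rating):
    """Cumulative and marginal PDs per year for one starting rating, one Z per year."""
    n = len(matrix)
    cum_matrix = [[float(i == j) for j in range(n)] for i in range(n)]  # identity
    cumulative = []
    for z in z_path:
        cum_matrix = mat_mul(cum_matrix, ttc_to_pit(matrix, z, rho))
        cumulative.append(cum_matrix[rating][-1])  # last column = default state
    marginal = [c - p for c, p in zip(cumulative, [0.0] + cumulative[:-1])]
    return cumulative, marginal


# Hypothetical TTC matrix: ratings A, B, C and default (D)
TTC = [
    [0.90, 0.08, 0.015, 0.005],
    [0.05, 0.85, 0.08, 0.02],
    [0.01, 0.09, 0.80, 0.10],
    [0.00, 0.00, 0.00, 1.00],
]
cumulative, marginal = pd_term_structure(TTC, z_path=[-1.0, -0.5, 0.5], rho=0.1, rating=1)
print([round(p, 4) for p in cumulative])
```

The marginal PDs returned here are the period-specific PDs that enter the ECL sum for each projected period.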

EXPOSURE AT DEFAULT

For any given transaction, the EAD consists of the outstanding principal of the transaction plus accrued interest as of the calculation date. For each projected month, the EAD is determined using cash flow data if available. If not available, data from a portfolio snapshot from the reporting date is used to determine the EAD.
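A minimal sketch of the monthly EAD projection, assuming that the EAD in month t equals the principal outstanding at the start of that month plus one month of accrued interest at the contract rate. If a contractual repayment schedule is available it drives the balance; otherwise the reporting-date balance is simply held flat, which is one possible reading of the snapshot fallback described above. All inputs are hypothetical.

```python
def ead_profile(outstanding, annual_rate, repayments=None, months=12):
    """Projected EAD per month: outstanding principal plus accrued interest.

    `repayments` holds the scheduled principal repayment for each month (cash
    flow data); without it, the reporting-date balance is held constant.
    """
    monthly_rate = annual_rate / 12
    if repayments is None:
        repayments = [0.0] * months
    profile, balance = [], outstanding
    for repayment in repayments:
        profile.append(balance * (1 + monthly_rate))  # principal + one month's interest
        balance = max(0.0, balance - repayment)
    return profile


print([round(e, 2) for e in ead_profile(1200.0, 0.12, [100.0] * 12)][:3])  # [1212.0, 1111.0, 1010.0]
print(ead_profile(1200.0, 0.12, months=2))
```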

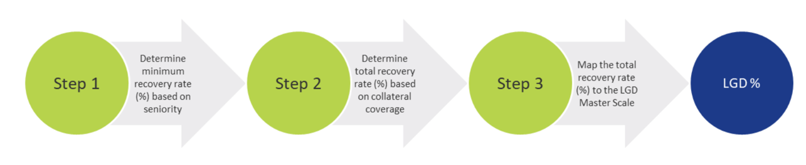

LOSS GIVEN DEFAULT

For each projected month, the LGD is determined using the LGD module. This module estimates the LGD for individual credit facilities based on the characteristics of the facility and availability and quality of pledged collateral. The process for determining the LGD consists of the following steps:

- Seniority of transaction: A minimum recovery rate is determined based on the seniority of the transaction.

- Collateral coverage: For the part of the loan that is not covered by the minimum recovery rate, the collateral coverage of the facility is determined in order to estimate the total recovery rate.

- Mapping to LGD class: The total recovery rate is mapped to an LGD class using an LGD scale.
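The three LGD steps can be sketched as below. The minimum recovery rates per seniority, the collateral haircuts and the LGD scale are hypothetical placeholders, since the CRS's actual parameters are not given in the article. Collateral (after haircuts) only covers the part of the exposure not already covered by the minimum recovery rate, so the total recovery rate is capped at 100%.

```python
# Hypothetical parameters, for illustration only
MIN_RECOVERY = {"senior_secured": 0.25, "senior_unsecured": 0.10, "subordinated": 0.0}
HAIRCUTS = {"cash": 0.0, "real_estate": 0.30, "receivables": 0.50}
LGD_SCALE = [(0.10, "LGD1"), (0.25, "LGD2"), (0.45, "LGD3"), (0.75, "LGD4"), (1.00, "LGD5")]


def estimate_lgd(exposure, seniority, collateral):
    """Return (LGD class, LGD) for a facility; `collateral` is a list of (type, value)."""
    # Step 1: minimum recovery rate from the seniority of the transaction
    min_recovery = MIN_RECOVERY[seniority]
    uncovered = exposure * (1 - min_recovery)
    # Step 2: collateral coverage of the remaining part, after haircuts
    collateral_value = sum(value * (1 - HAIRCUTS[kind]) for kind, value in collateral)
    recovery_rate = min_recovery + min(uncovered, collateral_value) / exposure
    lgd = max(0.0, 1 - recovery_rate)
    # Step 3: map to the first LGD class whose upper bound is not exceeded
    for upper, label in LGD_SCALE:
        if lgd <= upper:
            return label, lgd


print(estimate_lgd(1000.0, "senior_unsecured", [("real_estate", 500.0)]))  # class LGD4, LGD of about 0.55
```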

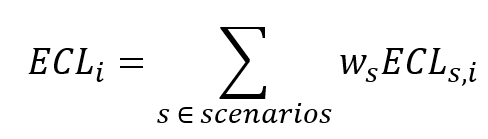

SCENARIO-WEIGHTED AVERAGE EXPECTED CREDIT LOSS

Once all expected losses have been calculated for all scenarios, the scenario-weighted average loss is calculated for each transaction $i$, for both the one-year and the lifetime scenario losses:

$$\overline{\mathrm{ECL}}_i = \sum_{s} w_s \, \mathrm{ECL}_{i,s}, \qquad \sum_{s} w_s = 1$$

For each scenario $s$, the weight $w_s$ is predetermined. For each transaction $i$, the scenario losses are weighted according to the formula above, where $\mathrm{ECL}_{i,s}$ is either the lifetime or the one-year expected loss in scenario $s$. An example of applied scenarios and corresponding weights is as follows:

- Optimistic scenario: 25%

- Neutral scenario: 50%

- Pessimistic scenario: 25%

This results in a one-year and a lifetime scenario-weighted average ECL estimate for each transaction.
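With the example weights above, the scenario weighting for a single transaction reduces to a few lines of Python. The loss figures are hypothetical; the same function applies to the one-year and to the lifetime scenario losses.

```python
SCENARIO_WEIGHTS = {"optimistic": 0.25, "neutral": 0.50, "pessimistic": 0.25}


def weighted_ecl(scenario_losses, weights=SCENARIO_WEIGHTS):
    """Scenario-weighted ECL for one transaction: sum over s of w_s * ECL_s."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("scenario weights must sum to 1")
    return sum(w * scenario_losses[s] for s, w in weights.items())


print(weighted_ecl({"optimistic": 80.0, "neutral": 100.0, "pessimistic": 160.0}))  # 110.0
```

Because losses in this example rise more in the pessimistic scenario than they fall in the optimistic one, the weighted ECL (110) exceeds the neutral-scenario ECL (100); relying on the neutral scenario alone would understate it.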

STAGE ALLOCATION

Lastly, using a stage allocation rule, the applicable (i.e., one-year or lifetime) scenario-weighted ECL estimate for each transaction is chosen. The stage allocation logic consists of a customisable quantitative assessment to determine whether an exposure is assigned to Stage 1, 2 or 3. One example could be to use a relative and absolute PD threshold:

- Relative PD threshold: +300% increase in PD (with an absolute minimum of 25 bps)

- Absolute PD threshold: +3%-point increase in PD

The PD thresholds will be applied to one-year best-estimate PIT PDs.

If either of the criteria is met, Stage 2 is assigned. Otherwise, the transaction is assigned to Stage 1.

The provision per transaction is determined by the stage of the transaction. If the transaction is in Stage 1, the provision equals the one-year expected loss. For Stage 2, the provision equals the lifetime expected loss. Stage 3 provision calculation methods are often transaction-specific and based on expert judgement.
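The example stage allocation rule and provisioning logic can be sketched as follows. Two readings are assumptions: "an absolute minimum of 25 bps" is taken to mean that the relative trigger only counts if the PD has also risen by at least 25 bps, and Stage 3 is assigned from a default flag, since the article leaves Stage 3 criteria and provisions to the institution (the code simply requires an expert-based Stage 3 amount). The PDs are one-year best-estimate PIT PDs at origination and at the reporting date.

```python
def allocate_stage(pd_origination, pd_current, defaulted=False,
                   relative_increase=3.0, relative_min_bps=25, absolute_increase=0.03):
    """Stage 1, 2 or 3 under the example thresholds from the text."""
    if defaulted:
        return 3
    change = pd_current - pd_origination
    relative_hit = (pd_current >= pd_origination * (1 + relative_increase)
                    and change >= relative_min_bps / 10_000)
    absolute_hit = change >= absolute_increase
    return 2 if relative_hit or absolute_hit else 1


def provision(stage, ecl_one_year, ecl_lifetime, stage3_amount=None):
    """Stage 1: one-year ECL; Stage 2: lifetime ECL; Stage 3: expert-based amount."""
    if stage == 1:
        return ecl_one_year
    if stage == 2:
        return ecl_lifetime
    if stage3_amount is None:
        raise ValueError("Stage 3 provisions require a transaction-specific estimate")
    return stage3_amount


print(allocate_stage(0.0005, 0.0025))  # 1: PD x5, but only +20 bps
print(allocate_stage(0.001, 0.005))    # 2: PD x5 and +40 bps
print(allocate_stage(0.02, 0.055))     # 2: +3.5 percentage points
```

The 25 bps floor keeps very low-PD exposures from moving to Stage 2 on tiny absolute changes that look large in relative terms.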

Rating model calibration methodology

At Zanders we have developed several Credit Rating models. These models are already being used at over 400 companies and have been tested both in practice and against empirical data. Want to know more about our Credit Rating models? Keep reading.

During the development of these models, an important step is the calibration of the parameters to ensure good model performance. To maintain these models, they are regularly re-calibrated. For our Credit Rating models, we strive to rely on a quantitative calibration approach that is combined with and strengthened by expert opinion. This article explains the calibration process for one of our Credit Risk models, the Corporate Rating Model.

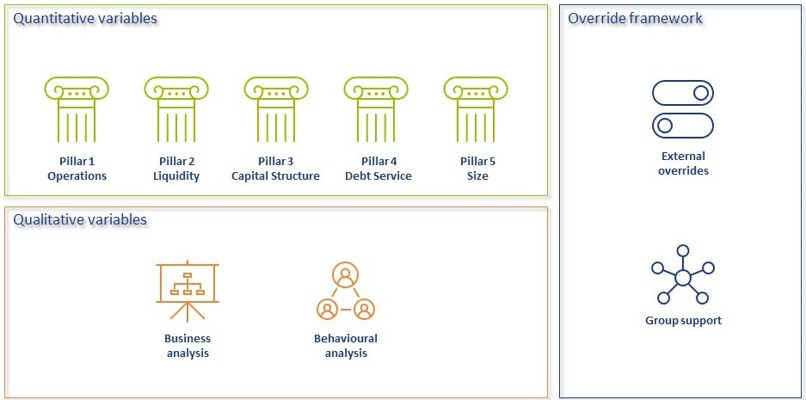

In short, the Corporate Rating Model assigns a credit rating to a company based on its performance on quantitative and qualitative variables. The quantitative part consists of five financial pillars: Operations, Liquidity, Capital Structure, Debt Service and Size. The qualitative part consists of two pillars: the Business Analysis pillar and the Behavioural Analysis pillar. See A comprehensive guide to Credit Rating Modelling for more details on the methodology behind this model.

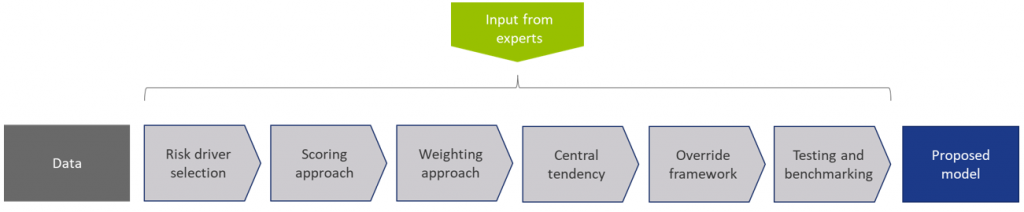

The model calibration process for the Corporate Rating Model can be summarized as follows:

Figure 1: Overview of the model calibration process

In steps (2) through (7), input from the Zanders expert group is taken into consideration. This especially holds for input parameters that cannot be directly derived by a quantitative analysis. For these parameters, first an expert-based baseline value is determined and second a model performance optimization is performed to set the final model parameters.

In most steps, model performance is assessed by looking at the AUC (area under the ROC curve). The AUC is one of the most popular metrics to quantify model fit (note that this is not necessarily the same as model quality, just as correlation does not imply causation). Simply put, the ROC curve plots the share of defaulters correctly flagged against the share of non-defaulters incorrectly flagged, across all possible score cut-offs. The area under that curve indicates the discriminatory power of the model: 0.5 corresponds to random ranking and 1 to perfect separation of defaulters and non-defaulters.
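The AUC has an equivalent, more intuitive reading: it is the probability that a randomly chosen non-defaulter receives a better score than a randomly chosen defaulter, with ties counting half. The sketch below computes it that way, assuming higher scores mean better credit quality; the pairwise loop is quadratic and only meant for small illustrative samples. The related Gini coefficient (accuracy ratio) equals 2 x AUC - 1.

```python
def auc(scores, defaulted):
    """Area under the ROC curve via pairwise comparison of non-defaulters and defaulters."""
    good = [s for s, d in zip(scores, defaulted) if not d]
    bad = [s for s, d in zip(scores, defaulted) if d]
    if not good or not bad:
        raise ValueError("need at least one defaulter and one non-defaulter")
    wins = sum(1.0 if g > b else 0.5 if g == b else 0.0 for g in good for b in bad)
    return wins / (len(good) * len(bad))


scores = [90, 80, 70, 60, 50]
defaulted = [False, False, True, False, True]
print(round(auc(scores, defaulted), 3))  # 0.833
```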

DATA

The first step covers the selection of data from an extensive database containing the financial information and default history of millions of companies. Not all data points can be used in the calibration and/or the performance testing of the model; therefore, data filters are applied. Furthermore, the dataset is categorized into 3 size classes and 18 industry sectors, each of which is calibrated independently using the same methodology.

This results in the master dataset. In addition, data statistics are created that show the data availability, data relations and data quality. The master dataset also contains derived fields based on financials from the database; these fields are based on a long list of quantitative risk drivers (financial ratios), which is created based on expert opinion. As a last step, the master dataset is split into a calibration dataset (2/3 of the master dataset) and a test dataset (1/3 of the master dataset).
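A minimal sketch of the 2/3 : 1/3 split. Stratifying by default flag (and, if desired, by size class and industry) is our own assumption, not something the article specifies; it keeps the default rate comparable between the calibration and test datasets. The records are hypothetical.

```python
import random
from collections import defaultdict


def stratified_split(records, key, calibration_share=2 / 3, seed=0):
    """Split records into calibration and test sets, preserving the mix per stratum."""
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    strata = defaultdict(list)
    for record in records:
        strata[key(record)].append(record)
    calibration, test = [], []
    for group in strata.values():
        rng.shuffle(group)
        cut = round(len(group) * calibration_share)
        calibration.extend(group[:cut])
        test.extend(group[cut:])
    return calibration, test


companies = [{"id": i, "default": i % 10 == 0} for i in range(300)]  # 10% default rate
cal, test = stratified_split(companies, key=lambda c: c["default"])
print(len(cal), len(test), sum(c["default"] for c in cal), sum(c["default"] for c in test))  # 200 100 20 10
```

Passing a tuple such as `(size_class, industry, default)` as the key would stratify on all three dimensions at once, at the cost of very small strata in rare segments.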

RISK DRIVER SELECTION

The risk driver selection for the qualitative variables is different from the risk driver selection for the quantitative variables. The final list of quantitative risk drivers is selected by means of different statistical analyses calculated for the long list of quantitative risk drivers. For the qualitative variables, a set of variables is selected based on expert opinion and industry practices.

SCORING APPROACH

Scoring functions are calibrated for the quantitative part of the model. These scoring functions translate the value and trend value of each quantitative risk driver, per size class and industry, into a (uniform) score between 0 and 100. For this exercise, different types of scoring functions are considered. The best-performing scoring function for the value and trend of each risk driver is determined by performing a regression and comparing the performance. The coefficients in the scoring functions are estimated by fitting the function to the ratio values of companies in the calibration dataset. For the qualitative variables, the translation from a value to a score is based on expert opinion.

WEIGHTING APPROACH

The overall score of the quantitative part of the model is obtained as a weighted sum of the value and trend scores. As a starting point, expert opinion-based weights are applied, after which the performance of the model is further optimized by iteratively adjusting the weights until an optimal set of weights is reached. The weights of the qualitative variables are based on expert opinion.
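The iterative weight adjustment can be sketched as a simple greedy search: starting from expert weights, move a small step of weight from one risk driver to another whenever that raises the AUC of the combined score, keeping the weights non-negative and summing to 1. This is one possible optimiser, not necessarily the one used for the Corporate Rating Model, and like any greedy search it can stop on a plateau. The AUC helper is repeated so the block is self-contained; the scores are hypothetical.

```python
def auc(scores, defaulted):
    """Probability that a non-defaulter outscores a defaulter (ties count half)."""
    good = [s for s, d in zip(scores, defaulted) if not d]
    bad = [s for s, d in zip(scores, defaulted) if d]
    wins = sum(1.0 if g > b else 0.5 if g == b else 0.0 for g in good for b in bad)
    return wins / (len(good) * len(bad))


def combined(score_rows, weights):
    """Weighted sum of the per-driver scores (each 0-100) for every company."""
    return [sum(w * s for w, s in zip(weights, row)) for row in score_rows]


def optimise_weights(score_rows, defaulted, weights, step=0.05, max_rounds=100):
    """Greedy search from expert weights; accepts only moves that raise the AUC."""
    best = list(weights)
    best_auc = auc(combined(score_rows, best), defaulted)
    for _ in range(max_rounds):
        improved = False
        for i in range(len(best)):
            for j in range(len(best)):
                if i == j or best[j] < step:
                    continue
                trial = list(best)
                trial[i] += step  # shift weight from driver j to driver i
                trial[j] -= step
                trial_auc = auc(combined(score_rows, trial), defaulted)
                if trial_auc > best_auc:
                    best, best_auc, improved = trial, trial_auc, True
        if not improved:
            break
    return best, best_auc


# Scores on two hypothetical risk drivers per company, and default flags
rows = [(80, 20), (70, 60), (60, 90), (30, 70), (20, 80), (40, 95)]
defaulted = [False, False, False, True, True, True]
weights, fitted_auc = optimise_weights(rows, defaulted, [0.5, 0.5])
print([round(w, 2) for w in weights], round(fitted_auc, 3))  # [0.55, 0.45] 0.889
```

Optimising only on the calibration dataset and checking the result on the test dataset guards against weights that fit noise.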

MAPPING TO CENTRAL TENDENCY

To estimate the mapping from final scores to a rating class, a standardized methodology is used. The buckets are constructed from a score distribution perspective, to ensure a smooth distribution over the rating classes. As input, the final scores (based on the quantitative risk drivers only) of each company in the calibration dataset are used, together with expert opinion input parameters. The estimation is performed per size class. The mapping is then optimized towards a central tendency by adjusting the expert opinion input parameters. To this end, a target average PD range is derived per size class and at total level, based on default data from the European Banking Authority (EBA).

The qualitative variables are included by performing an external benchmark on a selected set of companies, where proxies are used to derive the score on the qualitative variables.

The final input parameters for the mapping are set such that the average PD per size class from the Corporate Rating Model is in line with the target average PD ranges, and a good performance on the external benchmark is achieved.
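The article's mapping uses score buckets and expert input parameters. As a simplified stand-in, the sketch below calibrates to a central tendency by a different, commonly used route: a logistic score-to-PD curve whose intercept is solved by bisection so that the average PD of the calibration sample equals a target, after which PDs are mapped to rating classes on a master scale. The slope, the target PD and the master scale are hypothetical.

```python
from math import exp

# Hypothetical master scale: (upper PD bound, rating class)
MASTER_SCALE = [(0.001, "1"), (0.0025, "2"), (0.006, "3"), (0.015, "4"),
                (0.04, "5"), (0.10, "6"), (1.0, "7")]


def logistic_pd(score, a, b):
    """PD as a logistic function of the final score (b < 0: higher score, lower PD)."""
    return 1 / (1 + exp(-(a + b * score)))


def calibrate_intercept(scores, target_pd, b=-0.05, lo=-20.0, hi=20.0, iterations=100):
    """Bisection on the intercept so the sample's average PD equals target_pd."""
    def average_pd(a):
        return sum(logistic_pd(s, a, b) for s in scores) / len(scores)
    for _ in range(iterations):
        mid = (lo + hi) / 2
        if average_pd(mid) < target_pd:
            lo = mid  # average PD rises with the intercept, so move up
        else:
            hi = mid
    return (lo + hi) / 2


def rating_class(pd):
    """First master-scale class whose upper PD bound is not exceeded."""
    return next(label for upper, label in MASTER_SCALE if pd <= upper)


scores = [20, 35, 50, 65, 80, 95]  # final scores in the calibration sample (hypothetical)
a = calibrate_intercept(scores, target_pd=0.02)
pds = [logistic_pd(s, a, -0.05) for s in scores]
print(round(sum(pds) / len(pds), 4))  # 0.02
print([rating_class(p) for p in pds])
```

Running the calibration per size class, each with its own target, mirrors the per-size-class central tendencies described above.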

OVERRIDE FRAMEWORK

The override framework consists of two sections, Level A and Level B. Level A takes country, industry and company-specific risks into account. Level B considers the possibility of guarantor support and other (final) overriding factors. Applying the Level A overrides yields the Interim Credit Risk Rating (CRR); applying the Level B overrides yields the Final CRR. For the calibration, only the country risk is taken into account, as this is the only override that is based on data rather than user input. The country risk is set based on OECD country risk classifications.

TESTING AND BENCHMARKING

For testing and benchmarking, the performance of the model is analysed on both the calibration and the test dataset (excluding the qualitative assessment but including the country risk adjustment). For each dataset, the discriminatory power is determined by looking at the AUC. The calibration quality is reviewed by performing a Binomial Test on Individual Rating Classes, to check whether the observed default rate lies within the boundaries of the PD rating class, and a Traffic Lights Approach, to compare the observed default rates with the PD of the rating class.
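Both calibration checks can be sketched with an exact one-sided binomial test per rating class. The sketch assumes independent defaults (ignoring default correlation makes the test more likely to flag a correctly calibrated PD) and places the traffic-light boundaries at the 95% and 99.9% confidence levels, a common choice that the article does not specify. The rating-class figures are hypothetical.

```python
from math import exp, lgamma, log


def binomial_pmf(k, n, p):
    """P(X = k) for X ~ Binomial(n, p), computed in log space to avoid overflow."""
    log_comb = lgamma(n + 1) - lgamma(k + 1) - lgamma(n - k + 1)
    return exp(log_comb + k * log(p) + (n - k) * log(1 - p))


def p_value_too_many_defaults(n, defaults, pd):
    """One-sided p-value: probability of at least `defaults` defaults if `pd` is right."""
    return sum(binomial_pmf(k, n, pd) for k in range(defaults, n + 1))


def traffic_light(n, defaults, pd, yellow=0.95, red=0.999):
    """Green/yellow/red depending on how unlikely the observed defaults are."""
    p_value = p_value_too_many_defaults(n, defaults, pd)
    if p_value <= 1 - red:
        return "red"
    if p_value <= 1 - yellow:
        return "yellow"
    return "green"


# Rating class with PD = 1% and 1,000 obligors (about 10 expected defaults)
for observed in (10, 18, 30):
    print(observed, traffic_light(1000, observed, 0.01))  # green, yellow, red
```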

In conclusion, the methodology applied for the (re-)calibration of the Corporate Rating Model is based on an extensive dataset with financial and default information, complemented with expert opinion. The methodology ensures that the final model performs in line with the central tendency and performs well on an external benchmark.

A comprehensive guide to Credit Rating Modelling

Credit rating agencies and the credit ratings they publish have been the subject of much debate over the years. While they provide valuable insight into the creditworthiness of companies, they have been criticized for assigning high ratings to packaged sub-prime mortgages, for not being representative when a sudden crisis hits, and for their role in creating ‘self-fulfilling prophecies’ in times of economic downturn.

For all the criticism that rating models and credit rating agencies have received over the years, they are still the most pragmatic and realistic approach for assessing the default risk of your counterparties. Of course, the quality of the assessment depends to a large extent on the quality of the model used to determine the credit rating, which should capture both the quantitative and qualitative factors that determine counterparty credit risk. A sound credit rating model strikes a balance between these two aspects. Relying too much on quantitative outcomes ignores valuable ‘unstructured’ information, whereas a purely expert-judgement-based approach ignores the value of empirical data and its explanatory power.

In this white paper we will outline some best practice approaches to assessing default risk of a company through a credit rating. We will explore the ratios that are crucial factors in the model and provide guidance for the expert judgement aspects of the model.

Zanders has applied these best practices for many years while designing several Credit Rating models. These models are already being used at over 400 companies and have been tested both in practice and against empirical data. Want to know more about our Credit Rating models? Click here.

Credit ratings and their applications

Credit ratings are widely used throughout the financial industry for a variety of applications, including the corporate finance, risk and treasury domains and beyond. While a credit rating is hardly ever the sole factor driving management decisions, having a point estimate that describes something as complex as counterparty credit risk has proven very useful for making informed decisions without the need for a full due diligence on the books of the counterparty.

Some of the specific use cases are:

- Internal assessment of the creditworthiness of counterparties

- Transparency of the creditworthiness of counterparties

- Monitoring trends in the quality of credit portfolios

- Monitoring concentration risk

- Performance measurement

- Determination of risk-adjusted credit approval levels and frequency of credit reviews

- Formulation of credit policies, risk appetite, collateral policies, etc.

- Loan pricing based on Risk Adjusted Return on Capital (RAROC) and Economic Profit (EP)

- Arm’s length pricing of intercompany transactions, in line with OECD guidelines

- Regulatory Capital (RC) and Economic Capital (EC) calculations

- Expected Credit Loss (ECL) IFRS 9 calculations

- Active Credit Portfolio Management on both portfolio and (individual) counterparty level

Credit rating philosophy

A fundamental starting point when applying credit ratings is the credit rating philosophy that is followed. In general, two distinct approaches are recognized:

- Through-the-Cycle (TtC) rating systems measure default risk of a counterparty by taking permanent factors, like a full economic cycle, into account based on a worst-case scenario. TtC ratings change only if there is a fundamental change in the counterparty’s situation and outlook. The models employed for the public ratings published by e.g. S&P, Fitch and Moody’s are generally more TtC focused. They tend to assign more weight to qualitative features and incorporate longer trends in the financial ratios, both of which increase stability over time.

- Point-in-Time (PiT) rating systems measure the default risk of a counterparty by taking current, temporary factors into account. PiT ratings tend to adjust quickly to changes in the (financial) conditions of a counterparty and/or its economic environment. PiT models are better suited to shorter-term risk assessments, such as Expected Credit Losses. They focus more on financial ratios, thereby capturing the more dynamic variables, and incorporate a shorter trend, which adjusts faster over time. Most models incorporate a blend of the two approaches, acknowledging that both short-term and long-term effects may impact creditworthiness.
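To make the idea of a blend concrete, the sketch below combines a current PiT probability of default (PD) with a TtC anchor. It assumes, purely for illustration, that the TtC PD is approximated by the average PiT PD over a full cycle and that the two are blended linearly with a hypothetical `pit_weight` parameter; actual models may instead blend at the level of scores, ratios or rating grades.

```python
from statistics import fmean
from typing import Sequence


def ttc_pd(pit_pds_over_cycle: Sequence[float]) -> float:
    """Approximate a Through-the-Cycle PD as the long-run average of PiT PDs (assumption)."""
    if not pit_pds_over_cycle:
        raise ValueError("need at least one PiT PD observation")
    return fmean(pit_pds_over_cycle)


def blended_pd(current_pit_pd: float,
               pit_pds_over_cycle: Sequence[float],
               pit_weight: float = 0.5) -> float:
    """Linear blend of the current PiT PD and the TtC anchor.

    pit_weight is a hypothetical parameter: 1.0 gives a pure PiT estimate,
    0.0 a pure TtC estimate.
    """
    if not 0.0 <= pit_weight <= 1.0:
        raise ValueError("pit_weight must lie in [0, 1]")
    return pit_weight * current_pit_pd + (1.0 - pit_weight) * ttc_pd(pit_pds_over_cycle)


# Illustrative inputs only: PiT PDs observed over one cycle and a stressed current PD.
cycle = [0.01, 0.02, 0.03, 0.02]
print(blended_pd(0.04, cycle, pit_weight=0.5))  # about 0.03
```

Raising `pit_weight` makes the estimate react faster to current conditions at the cost of stability, which mirrors the trade-off between the two philosophies.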

Rating methodology

Modelling credit ratings is very complex, due to the wide variety of events and risks that companies are exposed to. Operational risk, liquidity risk, poor management, a declining business model, an external negative event, failing governments and technological innovation can all significantly influence the creditworthiness of companies in the short and long run. Most credit rating models therefore distinguish a range of different factors that are modelled separately and then combined into a single credit rating. The exact factors differ per rating model. The overview below presents the factors included in the Corporate Rating Model, which is used in some of Zanders' cloud-based solutions.

The remainder of this article will detail the different factors, explaining the rationale behind including them.

Quantitative factors

Quantitative risk factors are crucial to credit rating models, as they are ‘objective’ and therefore provide a high degree of comparability between different companies. Their objective nature also makes them easier to incorporate in a model on a large scale. While financials alone do not tell the whole story about a company, accounting standards have developed over time to provide an increasingly comparable view of the financial state of a company, making financials an increasingly trustworthy source for determining creditworthiness. To better enable comparisons between companies of different sizes, financials are often represented as ratios.

Financial Ratios

Financial ratios are used for credit risk analyses throughout the financial industry and capture the basic characteristics of companies. A number of these ratios reflect creditworthiness, directly or indirectly. Zanders’ Corporate Credit Rating model uses the most common of these financial ratios, which can be categorised into five pillars:

Pillar 1 - Operations

The Operations pillar consists of variables that consider the profitability of a company and its ability to influence that profitability. Earnings power is a main determinant of the success or failure of a company. It measures the ability of a company to create economic value and to provide risk protection to its creditors. Recurrent profitability is a main line of defense against debtor-, market-, operational- and business risk losses.

Turnover Growth

Turnover growth is defined as the annual change in Turnover, expressed as a percentage. It indicates the growth rate of a company. Both very low and very high values tend to indicate low credit quality. For low (or negative) turnover growth this is intuitive. High turnover growth can be an indication of a risky business strategy or of a start-up company with a business model that has not been tested over time.

Gross Margin

Gross margin is defined as Gross profit divided by Turnover, expressed as a percentage. The gross margin indicates the profitability of a company. It measures how much a company earns, taking into consideration the costs that it incurs for producing its products and/or services. A higher Gross margin implies a lower default probability.

Operating Margin

Operating margin is defined as Earnings before Interest and Taxes (EBIT) divided by Turnover, expressed as a percentage. This ratio indicates the profitability of the company's operations. Operating margin measures what proportion of a company's revenue is left over after paying its operating costs, such as wages, raw materials and overheads. A healthy Operating margin is required for a company to be able to pay its financing costs, such as interest on debt. A higher Operating margin implies a lower default probability.

Return on Sales

Return on sales is defined as P/L for the period (Net income) divided by Turnover, expressed as a percentage. It indicates how much profit, net of all expenses, is being produced per pound of sales. Return on sales is also known as net profit margin. A higher Return on sales implies a lower default probability.

Return on Capital Employed

Return on capital employed (ROCE) is defined as Earnings before Interest and Taxes (EBIT) divided by (Total assets minus Current liabilities), expressed as a percentage. This ratio indicates how successful management has been in generating profits (before financing costs) with all of the cash resources provided to them that carry a cost, i.e. equity plus debt. It is a basic measure of overall performance, combining margins and efficiency in asset utilisation. A higher ROCE implies a lower default probability.
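The Operations ratios above translate directly into code. The sketch below assumes a hypothetical `Financials` record holding one year of statement items (the field names are illustrative, not the model's actual data schema) and returns each ratio as a percentage, following the definitions in the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Financials:
    """Hypothetical container for one year of financial statement items."""
    turnover: float
    gross_profit: float
    ebit: float
    net_income: float
    total_assets: float
    current_liabilities: float


def turnover_growth(current: Financials, previous: Financials) -> float:
    """Annual change in Turnover, in percent."""
    return (current.turnover - previous.turnover) / previous.turnover * 100.0


def gross_margin(f: Financials) -> float:
    """Gross profit / Turnover, in percent."""
    return f.gross_profit / f.turnover * 100.0


def operating_margin(f: Financials) -> float:
    """EBIT / Turnover, in percent."""
    return f.ebit / f.turnover * 100.0


def return_on_sales(f: Financials) -> float:
    """Net income / Turnover, in percent (net profit margin)."""
    return f.net_income / f.turnover * 100.0


def roce(f: Financials) -> float:
    """EBIT / (Total assets - Current liabilities), in percent."""
    capital_employed = f.total_assets - f.current_liabilities
    return f.ebit / capital_employed * 100.0


# Illustrative figures only.
prev = Financials(turnover=900.0, gross_profit=300.0, ebit=80.0, net_income=50.0,
                  total_assets=1000.0, current_liabilities=300.0)
curr = Financials(turnover=1000.0, gross_profit=350.0, ebit=100.0, net_income=60.0,
                  total_assets=1100.0, current_liabilities=350.0)

print(f"Turnover growth:  {turnover_growth(curr, prev):.1f}%")  # 11.1%
print(f"Gross margin:     {gross_margin(curr):.1f}%")           # 35.0%
print(f"Operating margin: {operating_margin(curr):.1f}%")       # 10.0%
print(f"Return on sales:  {return_on_sales(curr):.1f}%")        # 6.0%
print(f"ROCE:             {roce(curr):.1f}%")                   # 13.3%
```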

Pillar 2 - Liquidity

The Liquidity pillar assesses the ability of a company to meet its obligations in the short term. Illiquidity is almost always a direct cause of failure, while strong liquidity helps a company remain sufficiently funded in times of distress. The Liquidity pillar consists of variables that consider the ability of a company to convert assets into cash quickly, without any price discount, to meet its obligations.

Current Ratio

Current ratio is defined as Current assets, including Cash and Cash equivalents, divided by Current liabilities, expressed as a number. This ratio is a rough indication of a firm's ability to service its current obligations. Generally, the higher the Current ratio, the greater the cushion between current obligations and a firm's ability to pay them. A stronger ratio reflects a numerical superiority of Current assets over Current liabilities. However, the composition and quality of Current assets are a critical factor in the analysis of an individual firm's liquidity, which is why the current ratio assessment should be considered in conjunction with the overall liquidity assessment. A higher Current ratio implies a lower default probability.

Quick Ratio

The Quick ratio (also known as the Acid test ratio) is defined as (Current assets, including Cash and Cash equivalents, minus Stock) divided by Current liabilities, expressed as a number. The ratio indicates the degree to which a company's Current liabilities are covered by its most liquid Current assets. It is a refinement of the Current ratio and a more conservative measure of liquidity. Generally, a value below 1 implies that the company depends on inventory or other current assets to settle its short-term debt. A higher Quick ratio implies a lower default probability.

Stock Days

Stock days is defined as the average Stock during the year times the number of days in a year, divided by the Cost of goods sold, expressed in days. This ratio indicates the average length of time that units are in stock. A low ratio is a sign of good liquidity or superior merchandising. A high ratio can be a sign of poor liquidity, possible overstocking or obsolescence, or, in contrast to these negative interpretations, a planned stock build-up in anticipation of material shortages. A higher Stock days ratio implies a higher default probability.

Debtor Days

Debtor days is defined as the average Debtors during the year times the number of days in a year, divided by Turnover. It indicates the average number of days that trade debtors are outstanding. Generally, the greater the number of days outstanding, the greater the probability of delinquencies in trade debtors and the more cash resources are absorbed. If a company's debtors appear to be turning more slowly than the industry average, further research is needed and the quality of the debtors should be examined closely. A higher Debtor days ratio implies a higher default probability.

Creditor Days

Creditor days is defined as the average Creditors during the year divided by the Cost of goods sold, times the number of days in a year. It indicates the average length of time the company's trade debt is outstanding. If a company's Creditor days appear to be higher than the industry average, the company may be experiencing cash shortages, disputing invoices with suppliers, enjoying extended terms, or deliberately expanding its trade credit. Comparing the company's ratio with the industry can point to these or other causes. A higher Creditor days ratio implies a higher default probability.
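The Liquidity ratios above can be sketched as small functions. Two assumptions are labelled in the code: a 365-day year (some institutions use 360), and approximating the "average during the year" by the mean of the opening and closing balances, which is a common simplification rather than something the text prescribes.

```python
DAYS_IN_YEAR = 365  # assumption: some institutions use a 360-day convention


def average_balance(opening: float, closing: float) -> float:
    """Approximate the average balance during the year from opening and closing values (assumption)."""
    return (opening + closing) / 2.0


def current_ratio(current_assets: float, current_liabilities: float) -> float:
    """Current assets (incl. cash) / Current liabilities."""
    return current_assets / current_liabilities


def quick_ratio(current_assets: float, stock: float, current_liabilities: float) -> float:
    """(Current assets (incl. cash) - Stock) / Current liabilities."""
    return (current_assets - stock) / current_liabilities


def stock_days(avg_stock: float, cogs: float, days: int = DAYS_IN_YEAR) -> float:
    """Average Stock x days in year / Cost of goods sold."""
    return avg_stock * days / cogs


def debtor_days(avg_debtors: float, turnover: float, days: int = DAYS_IN_YEAR) -> float:
    """Average Debtors x days in year / Turnover."""
    return avg_debtors * days / turnover


def creditor_days(avg_creditors: float, cogs: float, days: int = DAYS_IN_YEAR) -> float:
    """Average Creditors / Cost of goods sold x days in year."""
    return avg_creditors / cogs * days


# Illustrative figures only.
print(current_ratio(400.0, 250.0))                                  # 1.6
print(quick_ratio(400.0, 100.0, 250.0))                             # 1.2
print(stock_days(average_balance(80.0, 120.0), cogs=730.0))         # 50.0
print(debtor_days(average_balance(150.0, 250.0), turnover=1000.0))  # 73.0
print(round(creditor_days(60.0, cogs=730.0), 1))                    # 30.0
```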

Pillar 3 - Capital Structure

The Capital Structure pillar considers how a company is financed. Capital should be sufficient to cover expected and unexpected losses. Strong capital levels provide management with the financial flexibility to take advantage of acquisition opportunities or to discontinue business lines with associated write-offs.

Gearing

Gearing is defined as Total debt divided by Tangible net worth, expressed as a percentage. It indicates the company’s reliance on (often expensive) interest bearing debt. In smaller companies, it also highlights the owners' stake in the business relative to the banks. A higher Gearing ratio implies a higher default probability.

Solvency

Solvency is defined as Tangible net worth (Shareholder funds – Intangibles) divided by (Total assets – Intangibles), expressed as a percentage. It indicates the financial leverage of a company, i.e. it measures how much a company relies on creditors to fund its assets. The lower the ratio, the greater the financial risk. The amount of risk considered acceptable for a company depends on the nature of the business and the skills of its management, the liquidity of the assets and speed of the asset conversion cycle, and the stability of revenues and cash flows. A higher Solvency ratio implies a lower default probability.
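Both Capital Structure ratios build on Tangible net worth, so a small shared helper keeps the definitions consistent. In this sketch, gearing rejects a zero or negative tangible net worth rather than returning a misleading figure; that guard is an illustrative choice, not part of the model description.

```python
def tangible_net_worth(shareholder_funds: float, intangibles: float) -> float:
    """Shareholder funds - Intangibles."""
    return shareholder_funds - intangibles


def gearing(total_debt: float, shareholder_funds: float, intangibles: float) -> float:
    """Total debt / Tangible net worth, in percent."""
    tnw = tangible_net_worth(shareholder_funds, intangibles)
    if tnw <= 0:
        # Gearing is not meaningful with zero or negative tangible net worth;
        # this sketch rejects such cases instead of returning a misleading figure.
        raise ValueError("tangible net worth must be positive")
    return total_debt / tnw * 100.0


def solvency(shareholder_funds: float, intangibles: float, total_assets: float) -> float:
    """Tangible net worth / (Total assets - Intangibles), in percent."""
    tnw = tangible_net_worth(shareholder_funds, intangibles)
    return tnw / (total_assets - intangibles) * 100.0


# Illustrative figures only.
print(gearing(total_debt=300.0, shareholder_funds=450.0, intangibles=50.0))      # 75.0
print(solvency(shareholder_funds=450.0, intangibles=50.0, total_assets=1050.0))  # 40.0
```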

Pillar 4 - Debt Service

The debt service pillar considers the capability of a company to meet its financial obligations in the form of debt. It ties the debt obligation a company has to its earnings potential.

Total Debt / EBITDA

This ratio is defined as Total debt divided by Earnings before Interest, Taxes, Depreciation, and Amortization (EBITDA), where Total debt comprises Loans plus Noncurrent liabilities. It indicates the debt run-off period, i.e. the number of years it would take to repay all of the company's interest-bearing debt from operating profit adjusted for Depreciation and Amortization. However, EBITDA should not be considered cash available to pay off debt. A higher Total debt / EBITDA ratio implies a higher default probability.

Interest Coverage Ratio

Interest coverage ratio is defined as Earnings before interest and taxes (EBIT) divided by interest expenses (Gross and Capitalized). It indicates the firm's ability to meet interest payments from earnings. A high ratio indicates that the borrower should have little difficulty in meeting the interest obligations of loans. This ratio also serves as an indicator of a firm's ability to service current debt and its capacity for taking on additional debt. A higher Interest coverage ratio implies a lower default probability.
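The two Debt Service ratios can be sketched as follows. Treating "interest expenses (Gross and Capitalized)" as the sum of gross interest expense and capitalized interest is an interpretation of the text, and the guards against non-positive denominators are illustrative choices.

```python
def debt_to_ebitda(loans: float, noncurrent_liabilities: float, ebitda: float) -> float:
    """Total debt (Loans + Noncurrent liabilities) / EBITDA, in years."""
    if ebitda <= 0:
        # The run-off interpretation breaks down for zero or negative EBITDA;
        # this sketch rejects such inputs rather than returning a misleading figure.
        raise ValueError("EBITDA must be positive")
    return (loans + noncurrent_liabilities) / ebitda


def interest_coverage(ebit: float, gross_interest: float,
                      capitalized_interest: float = 0.0) -> float:
    """EBIT / (gross + capitalized interest expenses); the sum is an assumption."""
    interest = gross_interest + capitalized_interest
    if interest <= 0:
        raise ValueError("interest expenses must be positive")
    return ebit / interest


# Illustrative figures only.
print(debt_to_ebitda(loans=200.0, noncurrent_liabilities=100.0, ebitda=120.0))        # 2.5
print(interest_coverage(ebit=100.0, gross_interest=15.0, capitalized_interest=5.0))  # 5.0
```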

Pillar 5 - Size

In general, the larger a company is, the less vulnerable it is, as its turnover is usually more diversified. Turnover is considered the best indicator of size: the higher the turnover, the less vulnerable a company generally is.

Ratio Scoring and Mapping

While these financial ratios provide some very useful information regarding the current state of a company, it is difficult to assess them on a stand-alone basis. They are only useful in a credit rating determination if we can compare them to the same ratios for a group of peers. Ratio scoring deals with the process of translating the financials to a score that gives an indication of the relative creditworthiness of a company against its peers.

The ratios are assessed against a peer group of companies. This provides more discriminatory power during the calibration process and hence a better estimation of the risk that a company will default. Research has shown that two factors are most fundamental when determining a comparable peer group: industry type and size. The financial ratios tend to behave ‘most alike’ within these segmentations. Industry type is a good way to separate, for example, companies with a lot of tangible assets on their balance sheet (e.g. retail) from companies with very few tangible assets (e.g. service-based industries). Size reflects that larger companies are generally more robust and less likely to default in the short to medium term than smaller, less mature companies.

Since ratios tend to behave differently over different industries and sizes, the ratio value score has to be calibrated for each peer group segment.
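Scoring against a peer group can be sketched in two steps: assign the company to an (industry, size) segment, then express its ratio as a percentile rank among that segment's peers. The size bands, segment labels and the percentile-rank scoring rule below are hypothetical; the text does not specify how the Corporate Rating Model calibrates its ratio scores.

```python
from bisect import bisect_left, bisect_right
from typing import Sequence

# Hypothetical turnover bands (upper bound in millions, label); not the model's actual cut-offs.
SIZE_BANDS = [(10.0, "small"), (250.0, "medium"), (float("inf"), "large")]


def peer_segment(industry: str, turnover_m: float) -> tuple[str, str]:
    """Return the (industry, size band) segment used to select peers."""
    for upper, label in SIZE_BANDS:
        if turnover_m < upper:
            return industry, label
    raise ValueError("turnover must be a finite number")


def peer_score(value: float, peer_values: Sequence[float],
               higher_is_better: bool = True) -> float:
    """Percentile rank of a ratio within its peer group, in [0, 1] (ties count half).

    Set higher_is_better=False for ratios such as Stock days or Gearing,
    where a higher value implies a higher default probability.
    """
    if not peer_values:
        raise ValueError("peer group is empty")
    peers = sorted(peer_values)
    below = bisect_left(peers, value)
    ties = bisect_right(peers, value) - below
    rank = (below + 0.5 * ties) / len(peers)
    return rank if higher_is_better else 1.0 - rank


print(peer_segment("retail", 30.0))                                        # ('retail', 'medium')
print(peer_score(12.0, [5.0, 10.0, 15.0, 20.0]))                           # 0.5
print(peer_score(60.0, [30.0, 40.0, 50.0, 70.0], higher_is_better=False))  # 0.25
```

A percentile rank is only one possible scoring function; a calibrated model might instead fit a monotone curve per segment against observed defaults.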

When scoring a ratio, both the latest value and the long-term trend should be taken into account. The trend reflects whether a company’s financials are improving or deteriorating over time, which may be an indication of its long-term prospects. Hence, trends are also included as a separate factor in the scoring function.
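One simple way to quantify a ratio's trend is the least-squares slope over the last few years. The sketch below assumes annual observations ordered from oldest to newest; how the slope is subsequently scored and weighted against the latest value is model-specific and not shown.

```python
from typing import Sequence


def ratio_trend(values: Sequence[float]) -> float:
    """Least-squares slope of a ratio over consecutive years (oldest first).

    A positive slope means the ratio is increasing; whether that is an
    improvement depends on the ratio (e.g. good for Gross margin, bad for Gearing).
    """
    n = len(values)
    if n < 2:
        raise ValueError("need at least two annual observations")
    x_mean = (n - 1) / 2.0
    y_mean = sum(values) / n
    cov = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(values))
    var = sum((x - x_mean) ** 2 for x in range(n))
    return cov / var


# Illustrative Operating margin history (in %) over four years, oldest first.
print(ratio_trend([8.0, 9.0, 10.0, 11.0]))  # 1.0
```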

To arrive at a total score, a set of weights needs to be determined that indicates the relative importance of the different components. This total score is then mapped to an ordinal rating scale, which usually runs from AAA (excellent creditworthiness) to D (defaulted). Note that at this stage, the rating only incorporates the quantitative factors. It serves as a starting point for including the qualitative factors and the overrides.
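Combining component scores with weights and mapping the result onto a rating scale could look as follows. The component names, weights and grade cut-offs are hypothetical; in practice they would come out of the model's calibration. Grades are shown without +/- modifiers for brevity.

```python
# Hypothetical lower score bounds per grade (score in [0, 1], higher = more creditworthy).
# D is reserved for counterparties that have actually defaulted.
RATING_CUTOFFS = [
    (0.90, "AAA"), (0.80, "AA"), (0.70, "A"), (0.55, "BBB"), (0.40, "BB"),
    (0.25, "B"), (0.15, "CCC"), (0.05, "CC"), (0.0, "C"),
]


def total_score(component_scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of component scores; weights are normalised to sum to 1."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(w * component_scores[name] for name, w in weights.items()) / total_weight


def map_to_rating(score: float, defaulted: bool = False) -> str:
    """Map a total score to the ordinal rating scale."""
    if defaulted:
        return "D"
    for lower_bound, grade in RATING_CUTOFFS:
        if score >= lower_bound:
            return grade
    raise ValueError("score must be non-negative")


scores = {"operations": 0.8, "liquidity": 0.6, "capital": 0.7, "debt_service": 0.5, "size": 0.4}
weights = {"operations": 0.3, "liquidity": 0.2, "capital": 0.2, "debt_service": 0.2, "size": 0.1}
score = total_score(scores, weights)
print(round(score, 2), map_to_rating(score))  # 0.64 BBB
```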

"A sound credit rating model strikes a balance between quantitative and qualitative aspects. Relying too much on quantitative outcomes ignores valuable ‘unstructured’ information, whereas an expert judgement based approach ignores the value of empirical data, and their explanatory power."

Qualitative Factors

Qualitative factors are crucial to include in the model. They capture the ‘softer’ criteria underlying creditworthiness. They relate, among others, to the track record, management capabilities, accounting standards and access to capital of a company. These can be hard to capture in concrete criteria, and they will differ between different credit rating models.

Note that due to their qualitative nature, these factors will rely more on expert opinion and industry insights. Furthermore, some of these factors will affect larger companies more than smaller companies and vice versa. In larger companies, management structures are far more complex, track records will tend to be more extensive and access to capital is a more prominent consideration.

All factors are generally assigned an ordinal scoring scale and relative weights, to arrive at a total score for the qualitative part of the assessment.

A categorisation can be made between business analysis and behavioural analysis.

Business Analysis

Business analysis deals with all aspects of a company that relate to the way it operates in its markets. Some of the factors that can be included in a credit rating model are the following:

Years in Same Business

Companies that have operated in the same line of business for a prolonged period of time are more likely to remain in business for the foreseeable future, as their business model has proven sound enough to generate stable financials.

Customer Risk

Customer risk is an assessment of the extent to which a company depends on one customer or a small group of customers for its turnover. A large customer taking its business to a competitor can have a significant impact on such a company.

Accounting Risk

A company's internal accounting standards are generally a good indicator of the quality of its management and internal controls. Recent or frequent incidents, delayed annual reports and a lack of detail are clear red flags.

Track record with Corporate

This is mostly relevant for counterparties with whom a standing relationship exists. The track record of previous years provides useful first-hand experience to take into account when assessing creditworthiness.

Continuity of Management

A company that has been under the same management for an extended period of time tends to reflect a stable company, with few internal struggles. Furthermore, this reflects a positive assessment of management by the shareholders.

Operating Activities Area

Companies operating on a global scale are generally more diversified and therefore less affected by most political and regulatory risks, which is reflected positively in their credit rating. Additionally, companies that serve a large market have a solid base that provides some security against adverse events.

Access to Capital

Access to capital is a crucial element of the qualitative assessment. Companies with good access to the capital markets can raise debt and equity as needed. An actively traded stock, a public rating, and frequent and recent debt issuances are all signals that a company has access to capital.
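Following the ordinal scoring scale and relative weights described for the qualitative factors, the business analysis factors can be combined into a single qualitative score. The three-level scale, factor keys and weights below are hypothetical placeholders chosen for illustration.

```python
# Hypothetical three-level ordinal scale: 1 = weak, 2 = adequate, 3 = strong
# (for risk factors such as customer risk, "strong" means low risk).
ORDINAL_MIN, ORDINAL_MAX = 1, 3

# Hypothetical relative weights for the business analysis factors.
BUSINESS_WEIGHTS = {
    "years_in_same_business": 0.15,
    "customer_risk": 0.20,
    "accounting_risk": 0.15,
    "track_record": 0.10,
    "continuity_of_management": 0.10,
    "operating_activities_area": 0.10,
    "access_to_capital": 0.20,
}


def qualitative_score(assessments: dict[str, int],
                      weights: dict[str, float] = BUSINESS_WEIGHTS) -> float:
    """Weighted average of ordinal assessments, rescaled to [0, 1]."""
    missing = weights.keys() - assessments.keys()
    if missing:
        raise ValueError(f"missing assessments: {sorted(missing)}")
    total = 0.0
    for factor, weight in weights.items():
        level = assessments[factor]
        if not ORDINAL_MIN <= level <= ORDINAL_MAX:
            raise ValueError(f"{factor}: level {level} outside the ordinal scale")
        # Rescale the ordinal level to [0, 1] before weighting.
        total += weight * (level - ORDINAL_MIN) / (ORDINAL_MAX - ORDINAL_MIN)
    return total / sum(weights.values())


example = {factor: 2 for factor in BUSINESS_WEIGHTS}
example["access_to_capital"] = 3  # e.g. a listed company with a public rating
print(round(qualitative_score(example), 2))  # 0.6
```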

Behavioural Analysis

Behavioural analysis aims to incorporate the prior behaviour of a company in the credit rating. A distinction can be made between external and internal indicators.

External Indicators

External indicators comprise all information that can be acquired from external parties about a company's behaviour in honouring its obligations. This could be a credit report from a credit rating agency, payment details from a bank, public news items, etc.

Internal Indicators

Internal indicators concern all prior interactions you have had with a company. This includes payment delays, litigation, breaches of financial covenants, etc.

Override Framework

Many models allow for an override of the credit rating resulting from the prior analysis. This is a more discretionary step, which should be properly substantiated and documented. Overrides generally only allow the credit rating to be adjusted upward by one notch, while downward adjustments can be more sizable.

Overrides can be made for a variety of reasons, which are generally kept carefully separate in the model. Reasons for overrides generally include adjustments for country risk, industry adjustments, company-specific risk and group support.

It should be noted that some overrides are mandated by governing bodies. As an example, the OECD prescribes the overrides to be applied based on a country risk mapping table, for the purpose of arm’s length pricing of intercompany contracts.
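The override rules described above can be enforced in code. The sketch below assumes a notched rating scale (with + and - modifiers), a hypothetical set of reason codes mirroring the override categories in the text, and a hard cap of one notch upward; downward overrides are only bounded by the bottom of the scale.

```python
# Assumed notched rating scale, best to worst (D, defaulted, is excluded from overrides).
SCALE = ["AAA", "AA+", "AA", "AA-", "A+", "A", "A-", "BBB+", "BBB", "BBB-",
         "BB+", "BB", "BB-", "B+", "B", "B-", "CCC+", "CCC", "CCC-", "CC", "C"]

# Hypothetical reason codes mirroring the override categories in the text.
OVERRIDE_REASONS = {"country_risk", "industry", "company_specific", "group_support"}
MAX_UPWARD_NOTCHES = 1


def apply_override(rating: str, notches: int, reason: str) -> str:
    """Move a rating by `notches` (positive = upgrade, negative = downgrade)."""
    if reason not in OVERRIDE_REASONS:
        raise ValueError(f"unknown or missing override reason: {reason!r}")
    if notches > MAX_UPWARD_NOTCHES:
        raise ValueError("upward overrides are limited to one notch")
    index = SCALE.index(rating)  # raises ValueError for ratings not on the scale
    # Upgrading moves towards index 0; clamp to the ends of the scale.
    new_index = min(max(index - notches, 0), len(SCALE) - 1)
    return SCALE[new_index]


print(apply_override("BBB", 1, "group_support"))  # BBB+
print(apply_override("BBB", -3, "country_risk"))  # BB
```

Requiring a reason code for every override keeps the step auditable, in line with the need to substantiate and document overrides.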

Combining all the factors and considerations mentioned in this article, applying weights and scoring functions, and applying overrides results in a final credit rating.

Model Quality and Fit

Model quality determines whether the model is appropriate for use in a practical setting. From a statistical modelling perspective, many considerations apply to model quality that are outside the scope of this article, so we will stick to a high-level consideration here.

The AUC (area under the ROC curve) metric is one of the most popular metrics to quantify model fit (note this is not necessarily the same as model quality, just as correlation does not equal causation). Very simply put, the ROC curve plots the share of defaulters that the model correctly flags (the true positive rate) against the share of non-defaulters that it incorrectly flags (the false positive rate), for every possible cut-off score. The area under that curve indicates the discriminatory power of the model: a value of 0.5 corresponds to random guessing and 1.0 to a perfect ranking. A more extensive guide to the AUC metric can be found here.
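The AUC also equals the probability that a randomly chosen defaulter receives a riskier score than a randomly chosen non-defaulter, which can be computed directly by comparing all pairs. The sketch below assumes a score where higher means riskier (e.g. a PD); for a creditworthiness score where higher is better, negate the scores first. Its cost grows with the number of defaulter/non-defaulter pairs, so it is meant for illustration; scikit-learn's `sklearn.metrics.roc_auc_score` is a common production alternative.

```python
from typing import Sequence


def auc(risk_scores: Sequence[float], defaulted: Sequence[bool]) -> float:
    """Probability that a random defaulter scores riskier than a random non-defaulter.

    Ties count as half a correct ranking, which makes this equal to the area
    under the ROC curve.
    """
    if len(risk_scores) != len(defaulted):
        raise ValueError("scores and outcomes must have the same length")
    bad = [s for s, d in zip(risk_scores, defaulted) if d]
    good = [s for s, d in zip(risk_scores, defaulted) if not d]
    if not bad or not good:
        raise ValueError("need at least one defaulter and one non-defaulter")
    wins = 0.0
    for b in bad:
        for g in good:
            if b > g:
                wins += 1.0
            elif b == g:
                wins += 0.5
    return wins / (len(bad) * len(good))


# Illustrative data only: risk scores and observed default flags.
scores = [0.9, 0.8, 0.3, 0.2]
outcomes = [True, False, True, False]
print(auc(scores, outcomes))  # 0.75
```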

Alternative Modelling Approaches

The model structure described above is one specific way to model credit ratings. While models may vary widely, most of them would typically include these components. In recent years, there has been an increase in the use of payment data, which has become accessible under the PSD2 regulation. This can provide a more up-to-date view of the state of a company and can be considered as an additional factor in the analysis. However, the main disadvantage of this approach is that it requires explicit approval from the counterparty to use the data, which makes it more challenging to apply on a portfolio basis.

Another approach is a purely machine learning based modelling approach. If applied well, this tends to give the best model in terms of the AUC (area under the curve) metric, which measures the discriminatory power of the model. One major disadvantage of this approach, however, is that the interpretability of the resulting model is very limited. As a result, auditors and regulatory bodies generally do not favour such models as the primary model for creditworthiness. In practice, we see these models most often used as challenger models, to benchmark the explanatory power of models based on economic rationale. They can help spot deficiencies in the explanatory power of existing models and trigger a re-assessment of the factors included in these models. In some cases, they may also be used to create additional comfort regarding the inclusion of some factors.

Furthermore, the degree to which the model depends on expert opinion is largely determined by the data available to the model developer. Most notably, the financials and historical default data of a representative group of companies are needed to properly fit the model to the empirical data. Since this data can be hard to come by, many credit rating models are based more on expert opinion than on actual quantitative data. Our Corporate Credit Rating model was calibrated on a database containing the financials and default data of an extensive set of companies, which provides a solid quantitative basis for the model outcomes.

Closing Remarks

Modelling credit risk and credit ratings is a complex affair. Zanders provides advice, standardized and customizable models, and software solutions to tackle these challenges. Do you want to learn more about credit rating modelling? Reach out for a free consultation. Looking for a tailor-made and flexible solution to become IFRS 9 compliant? Find out about our Condor Credit Risk Suite, the IFRS 9 compliance solution.