As regulators raise the bar on climate risk management, banks are now facing a new and complex expectation: climate reverse stress testing.

We’ve spoken to many banks recently, and the message is clear: developing and implementing a climate reverse stress testing (RST) framework will be a significant challenge, particularly as it is a completely new regulatory expectation for climate risk from the PRA. Most banks are already familiar with RST in the context of credit, market, and liquidity risks. But extending this practice to climate introduces a different level of complexity.

Until now, climate quantitative analysis has centered on standard stress testing and scenario analysis, asking what could happen under different climate pathways. Reverse stress testing flips the question: what climate pathway could push your business model to the point of failure?

You may have already fallen into the trap of believing these common myths; if so, it’s time to rethink your position:

- “Our institution is not exposed to climate risk.” The PRA is unlikely to accept this - climate risk is systemic. It manifests as transition risk (such as carbon taxes or shifts in consumer expectations) and physical risk (both acute climate events and chronic changes). These drivers affect almost every portfolio: credit exposures like commercial loans and mortgages, market positions in bonds and equities, and even operational resilience. Hence, it is highly likely that your institution is already exposed to climate risk factors, directly or indirectly.

- “Rain alone could never cause us to fail.” This could be true if it weren’t for the fact that risks don’t occur in isolation. Rainfall, carbon prices, GDP slowdown, and interest rate rises can interact in complex and non-linear ways to push an institution towards failure. Although a single risk factor might need to reach an extreme tail event to cause failure, multiple risk factors acting together don’t need to be extreme to push a firm to the brink. Plausible domino effects can occur - for example, in a mortgage portfolio, heavier rainfall increases flood risk, lowering property values and weakening collateral. At the same time, a rise in carbon prices lifts household energy bills, cutting disposable income and pushing up default probabilities. Higher PDs, combined with weaker collateral driving LGDs higher, can accelerate capital erosion towards the failure point.

- “The failure points are so extreme, there’s no benefit in analyzing them.” A failure point doesn’t have to mean the bank has collapsed entirely. In practice, it could be something completely plausible, such as the CET1 falling below 11% or liquidity buffers dropping under regulatory requirements. These are thresholds that banks already monitor as part of business-as-usual.

- “What’s the benefit of RST? We already run standard stress tests.” RST forces firms to confront and explore extreme, and yet plausible, critical scenarios they might otherwise avoid. It can uncover vulnerabilities that remain hidden in conventional stress testing.
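To make the interaction argument from the second myth concrete, here is a minimal sketch in Python. It assumes expected loss can be approximated as PD × LGD × EAD, and every figure in it (baseline PD and LGD, exposure, shock sizes) is hypothetical and chosen only for illustration.

```python
def expected_loss(pd_, lgd, ead):
    """Expected loss approximated as PD x LGD x EAD (a simplifying assumption)."""
    return pd_ * lgd * ead


# Hypothetical mortgage portfolio; illustrative figures only.
BASE_PD, BASE_LGD, EAD = 0.02, 0.20, 1_000_000_000

# Hypothetical, moderate shocks: neither is an extreme tail event on its own.
PD_SHOCK = 1.5   # higher carbon prices squeeze disposable income
LGD_SHOCK = 1.5  # heavier rainfall weakens collateral values

base = expected_loss(BASE_PD, BASE_LGD, EAD)
pd_only = expected_loss(BASE_PD * PD_SHOCK, BASE_LGD, EAD) - base
lgd_only = expected_loss(BASE_PD, BASE_LGD * LGD_SHOCK, EAD) - base
joint = expected_loss(BASE_PD * PD_SHOCK, BASE_LGD * LGD_SHOCK, EAD) - base

print(f"PD shock alone:  +{pd_only:,.0f}")
print(f"LGD shock alone: +{lgd_only:,.0f}")
print(f"Both together:   +{joint:,.0f}")
```

Because PD and LGD multiply, the loss increase from both shocks together is larger than the sum of the two individual increases. This is the non-linearity the myth overlooks.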

We recommend that you prepare for the following key challenges:

- Defining failure points: Deciding exactly what a failure would look like is not straightforward and is the first challenge. Most firms will base the breaking point on a regulatory capital measure such as CET1. From there, they need to identify the internal drivers (PD, LGD, credit spreads, liquidity buffers etc) that would cause it to erode.

- Deriving transmission channels: The next critical step is mapping which climate variables (such as carbon price, rainfall, and temperature shocks) could realistically impact those internal drivers. For example, in mortgage portfolios, heavier rainfall could reduce property values and raise insurance costs, leading not only to higher LGDs but also higher PDs.

- Developing supporting models: In many cases, deriving the relationships between the different drivers requires additional supporting models. For example, firms may need to develop models to measure and assess the relationship between rainfall and LGD/PD.

- Quantification of the narrative: Over time, the PRA is likely to require qualitative insights to evolve into quantified relationships between climate drivers, bank risk factors, and failure points. It’s not just about establishing a link between rainfall or carbon prices and LGD/PD, but defining potential levels of the risk factors that could push the bank to failure.

- Embedding outcomes: RST results need to feed into firm-wide processes and systems, including governance, reporting, and ongoing monitoring. At this stage, RST stops being just a regulatory expectation and becomes a proactive tool for managing risk.
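The first four steps above can be sketched as a simple reverse search. The example below is a toy illustration, not a production approach: the failure point is a CET1 ratio of 11%, a single hypothetical "climate severity" factor drives PD and LGD through assumed linear sensitivities, RWA is held constant, and all balance sheet figures are invented.

```python
def cet1_ratio(severity, capital=150.0, rwa=1000.0, ead=5000.0,
               base_pd=0.02, base_lgd=0.20, pd_sens=1.0, lgd_sens=0.5):
    """CET1 ratio after the extra credit loss caused by a climate shock.

    `severity`, the sensitivities and all balances are hypothetical.
    RWA is kept constant, which is a deliberate simplification.
    """
    pd_ = min(1.0, base_pd * (1 + pd_sens * severity))
    lgd = min(1.0, base_lgd * (1 + lgd_sens * severity))
    extra_loss = ead * (pd_ * lgd - base_pd * base_lgd)
    return (capital - extra_loss) / rwa


def find_failure_severity(threshold=0.11, lo=0.0, hi=10.0, tol=1e-6):
    """Bisection search for the smallest severity that breaches the threshold.

    Relies on the CET1 ratio decreasing as severity increases.
    Returns None if the threshold is not breached even at `hi`.
    """
    if cet1_ratio(lo) < threshold:
        return lo
    if cet1_ratio(hi) >= threshold:
        return None
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if cet1_ratio(mid) < threshold:
            hi = mid  # failure already reached: look for a milder shock
        else:
            lo = mid  # still above the threshold: the shock must be harsher
    return hi


severity = find_failure_severity()
print(f"CET1 falls below 11% at a severity of about {severity:.3f}")
```

In practice the single severity factor would be replaced by the mapped climate variables and supporting models, and the search would run over combinations of risk factors rather than one dimension.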

At Zanders, we can support you in developing climate RST frameworks that are:

- Proportionate: from plausible qualitative narratives to quantification models, aligned to your portfolio exposure to climate risk.

- Scalable: solutions that evolve along with your firm’s climate risk journey.

- Strategic: we guide you through achieving regulatory compliance while always keeping an eye on your long-term business objectives.

How far are you with planning and self-assessment for climate RST at your firm? Our advice: don’t wait until the PRA publishes the updated Supervisory Statement to start planning a climate RST framework. Starting early will make the process smoother and ensure you are well positioned and prepared when regulatory scrutiny inevitably materializes.

We would be delighted to share our insights and discuss how we can support your climate risk journey. Please reach out to the Zanders UK climate risk modeling team (Polly Wong, Nikolas Kontogiannis, Hardial Kalsi, Paolo Vareschi).

Japan is leading ISO 20022 implementation with its November 2025 deadline. Read our steps for corporates worldwide to start preparing.

The International Organization for Standardization (ISO) sets global standards to improve quality, safety, and efficiency across industries. ISO 20022, in particular, is transforming financial communications. It provides a universal framework for structured, data-rich messages, improving interoperability between institutions and enabling innovation in payments, securities, and foreign exchange.

Globally, ISO 20022 is replacing older messaging standards, such as SWIFT MT messages, with XML-based messaging. While the worldwide adoption deadline has been extended to November 2026, Japan is sticking to its original November 2025 timeline, especially for cross-border payments.

Japan’s Transition: Zengin and Cross-Border Payments

Japan’s domestic payment system, Zengin, handles interbank transfers. While the domestic Zengin format remains unchanged, the cross-border Zengin format is being phased out. Japanese banks now require cross-border payments to follow the ISO 20022 XML standard, specifically the “pain.001” message type.

This shift affects corporates as well. Banks are encouraging clients to submit payments in XML format rather than converting older MT101 or cross-border Zengin messages, which means Treasury Management Systems (TMS) and ERP systems may need updates.

Opportunities and Challenges for Corporates

ISO 20022 requires structured data, particularly for beneficiary addresses. While Treasury payments to a limited number of counterparties may be manageable, handling tens of thousands of global vendors is far more complex. Many corporates face inconsistent or outdated vendor data, which may not meet ISO standards.

Tools for master data cleanup, such as those offered by Zanders, can automate validation and support compliance, helping corporates navigate this transition efficiently.
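As an illustration of what structured address data looks like, the sketch below checks a vendor record and builds a postal address element using ISO 20022 element names (`StrtNm`, `BldgNb`, `PstCd`, `TwnNm`, `Ctry`). Treating town name and country as the mandatory minimum is an assumption made for this example; the exact rules depend on your bank's and the market infrastructure's usage guidelines. The vendor record and helper names are hypothetical.

```python
import xml.etree.ElementTree as ET

# Assumed minimum for this sketch; check your bank's usage guidelines.
REQUIRED_FIELDS = ("TwnNm", "Ctry")
# Element order as it appears in the ISO 20022 postal address structure.
FIELD_ORDER = ("StrtNm", "BldgNb", "PstCd", "TwnNm", "Ctry")


def missing_fields(vendor):
    """Return the required address fields that are absent or blank."""
    return [f for f in REQUIRED_FIELDS if not vendor.get(f, "").strip()]


def build_postal_address(vendor):
    """Build a <PstlAdr> element from a vendor master data record."""
    problems = missing_fields(vendor)
    if problems:
        raise ValueError(f"Vendor record is missing: {', '.join(problems)}")
    address = ET.Element("PstlAdr")
    for tag in FIELD_ORDER:
        value = vendor.get(tag, "").strip()
        if value:  # optional elements are simply left out when empty
            ET.SubElement(address, tag).text = value
    return address


# Hypothetical vendor record.
vendor = {"StrtNm": "Main Street", "BldgNb": "12", "PstCd": "1000",
          "TwnNm": "Tokyo", "Ctry": "JP"}
print(ET.tostring(build_postal_address(vendor), encoding="unicode"))
```

Running `missing_fields` across the full vendor master gives a quick first view of how many records would need cleanup before they can be mapped into structured or hybrid addresses.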

Taking Action Now

Even though Japan is leading with its November 2025 deadline, corporates worldwide are encouraged to start preparing. Steps include:

- Assessing current processes and systems

- Updating ERP and TMS inputs for hybrid or fully structured address formats

- Leveraging master data validation tools

Conclusion

Japan’s commitment to ISO 20022 is a pivotal moment for cross-border financial transactions. Corporates must act quickly, adopting structured data practices to ensure compliance and maintain operational efficiency. With the right tools and preparation, businesses can turn this regulatory shift into an opportunity to standardize and modernize their financial messaging. Zanders can provide support to companies, offering high-end solutions and expert guidance to navigate the complexities of ISO 20022 adoption.

The ECB’s revised guide to internal models introduces stricter standards for credit risk, reshaping how banks approach PD, LGD and CCF calibration.

On July 28th, the European Central Bank (ECB) published its revised guide to internal models. In addition to the changes needed to align with CRR3, the ECB has refined its guidelines based on supervisory experience, with the aim of harmonized and transparent internal modeling practices at credit institutions.

In this article, we share our perspective on the changes in the credit risk chapter, focusing on the impact on PD, LGD and CCF modeling:

1- PD: Institutions must use at least five years of default data and demonstrate a meaningful correlation between default rates and macroeconomic indicators for LRA DR calibration.

2- LGD: The ECB will benchmark calibration windows against the 2008–2018 period. Downturn LGD calibration must cover all relevant components and include yearly elevated LGDs, even if they don’t perfectly match downturn periods. The LGD reference value is now an active challenge in model validation, requiring consistent calculation and action if weaknesses appear.

3- CCF: For CCF modeling, risk drivers must use data exactly 12 months before default, 0% CCFs for non-retail exposures are no longer allowed, and negative CCFs must be floored at zero, while high CCFs remain uncapped.

The following chapters elaborate on these three proposed amendments in more detail.

1 PD

1.1 Minimum requirements historical data

The ECB now specifies data requirements for the representativeness analysis referenced in paragraphs 82 and 83 of the EBA Guidelines on PD and LGD estimation (EBA/GL/2017/16), which assess whether the PD calibration dataset reflects a balanced mix of "good" and "bad" years.

According to paragraph 236(A) of the revised EGIM, institutions must use a minimum of 5 years of historical data as of the calibration date. Additionally, they must have enough one-year default rates to calculate a statistically meaningful correlation between default rates and relevant (macro)economic indicators.

If an institution lacks the required data (either the 5 years or enough data for meaningful correlation), paragraph 236(B) states the period is automatically deemed not representative, and the institution must apply adjustments and quantify a Margin of Conservatism (MoC). However, paragraph 236(C) allows that if an institution has enough data but cannot find significant correlations with macroeconomic indicators, the historical period may still be considered representative if it includes both the minimum and maximum of the institution’s internal one-year default rates.
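The decision logic described in paragraphs 236(A) to 236(C) can be sketched as follows. This is our simplified reading of the text above, not the ECB's wording: the inputs are plain flags that an institution would derive from its own analysis, and the function name and return strings are hypothetical.

```python
MIN_YEARS = 5  # minimum history as of the calibration date, per 236(A)


def assess_pd_calibration_period(years_of_data,
                                 enough_rates_for_correlation,
                                 significant_correlation_found,
                                 covers_min_and_max_default_rate):
    """Simplified reading of paragraphs 236(A)-(C) of the revised EGIM."""
    # 236(B): missing data means the period is deemed not representative.
    if years_of_data < MIN_YEARS or not enough_rates_for_correlation:
        return "not representative: apply adjustment and quantify MoC"
    # Data requirements met and macro indicators are informative.
    if significant_correlation_found:
        return "assess representativeness against macro indicators"
    # 236(C): no significant correlation, but the full internal range is covered.
    if covers_min_and_max_default_rate:
        return "may be considered representative"
    # This combination is not covered by the summary above.
    return "not covered by this sketch: further analysis needed"


print(assess_pd_calibration_period(4, True, True, True))
print(assess_pd_calibration_period(8, True, False, True))
```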

1.2 LRA DR reference value

The ECB introduces a reference calibration level for LRA DR in paragraph 237, based on the period January 2008 to December 2018, which is considered to be representative of a full economic cycle. Institutions must calculate this reference LRA DR and use it as an anchor value. If an institution proposes a lower LRA DR based on a different time period, it must justify why that period is more representative. Although not a strict floor, the reference LRA DR serves as an anchor value for ECB assessment. Even without sufficient default data, institutions must estimate the reference LRA DR using the accounting definition of default (DoD) as an approximation for the prudential definition.
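A minimal sketch of the comparison, assuming one default rate observation per calendar year and an LRA DR computed as the simple average of one-year default rates. The default rates below are hypothetical.

```python
from statistics import mean


def lra_dr(default_rates_by_year, start, end):
    """Simple average of one-year default rates for years start..end inclusive."""
    window = [dr for year, dr in default_rates_by_year.items()
              if start <= year <= end]
    if not window:
        raise ValueError("No default rates available in the requested window")
    return mean(window)


# Hypothetical one-year default rates.
default_rates = {
    2008: 0.020, 2009: 0.035, 2010: 0.030, 2011: 0.025, 2012: 0.030,
    2013: 0.025, 2014: 0.020, 2015: 0.015, 2016: 0.015, 2017: 0.010,
    2018: 0.010, 2019: 0.010, 2020: 0.012,
}

reference = lra_dr(default_rates, 2008, 2018)  # ECB reference window
proposed = lra_dr(default_rates, 2014, 2020)   # institution's chosen window
needs_justification = proposed < reference

print(f"Reference LRA DR: {reference:.4%}")
print(f"Proposed LRA DR:  {proposed:.4%}")
print(f"Justification needed: {needs_justification}")
```

In this invented example the proposed window excludes the high-default years, so the proposed LRA DR falls below the reference value and the institution would need to explain why its window is more representative.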

2 LGD

For the loss given default (LGD) risk parameter, the ECB now sets out clearer expectations on two areas: the estimation of downturn LGD based on observed impact and the calculation and use of the LGD reference value.

2.1 Calibration of downturn LGD based on observed impact

The revised EGIM retains flexibility in how downturn LGD is calibrated but introduces stricter requirements for the analyses underpinning the calibration based on observed impact. Zanders expects that most institutions already conduct these analyses and may only need to refine their application.

The ECB emphasizes that all analyses required under paragraph 27(a) of the EBA guidelines must be conducted. If calibration is done at the component level (e.g., secured vs. unsecured), these analyses should be done separately for each component, with results aggregated into a total downturn LGD. At minimum, the most impactful component must be included; others may be needed if it doesn't capture downturn effects sufficiently.

Regardless of calibration method, elevated realized LGDs (e.g. defined as the average of defaults in a given year) must be used. Although downturns are often defined more granularly, the ECB states that yearly averages should still be used, even if they don’t align exactly with downturn periods.

2.2 Reference value

The LGD reference value, unlike the new LRA DR, is an existing non-binding benchmark from the EBA guidelines meant to challenge an institution’s downturn LGD estimates. While still non-binding, the ECB has raised expectations for how it should be calculated and used. Zanders supports this, seeing it as a useful tool for diagnosing weaknesses in LGD calibration.

The reference value should follow paragraph 37 of the EBA guidelines, typically calculated as the average LGD in the two years with the highest economic loss. The ECB emphasizes that incomplete recovery processes must be included in identifying these years and in the LGD calculations, and that this should be done at least at the calibration-segment level.

For comparing the reference value with actual downturn LGD estimates (per paragraph 19), the ECB offers guidance when the reference value is higher. If this isn’t due to a missed downturn period, institutions must reassess their calibration methodology for possible flaws. If a missed downturn may be the reason, they are expected to re-evaluate their downturn identification and consider timing lags between downturn events and losses.
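The calculation and comparison can be sketched as below, following the description above: take the two years with the highest economic loss and average their realized LGDs. The yearly figures and the downturn LGD estimate are hypothetical, and the sketch assumes incomplete recovery processes are already reflected in the inputs.

```python
from statistics import mean


def lgd_reference_value(yearly_data):
    """Average realized LGD over the two years with the highest economic loss.

    `yearly_data` maps year -> (economic_loss, average_realized_lgd).
    """
    if len(yearly_data) < 2:
        raise ValueError("At least two years of data are required")
    worst_years = sorted(yearly_data,
                         key=lambda year: yearly_data[year][0],
                         reverse=True)[:2]
    value = mean(yearly_data[year][1] for year in worst_years)
    return value, sorted(worst_years)


# Hypothetical data for one calibration segment.
segment = {
    2009: (120.0, 0.42),
    2010: (150.0, 0.46),
    2013: (90.0, 0.35),
    2016: (60.0, 0.30),
}

reference, years_used = lgd_reference_value(segment)
downturn_lgd_estimate = 0.40  # hypothetical model output
review_needed = reference > downturn_lgd_estimate

print(f"Reference value {reference:.2%} based on years {years_used}")
print(f"Review calibration or downturn identification: {review_needed}")
```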

3 CCF

The main changes to the Credit Conversion Factor (CCF) guidelines focus on calibration and aim to reduce variability across institutions' modeling practices. The ECB is aligning with the EBA’s goal of lowering RWA variability (see also our article on the EBA’s Revised Definition of Default).

Key updates include:

- AIRB Approach: Institutions can now only use risk driver data from exactly 12 months before default (the reference date), eliminating the option to consider longer-term behavioral patterns.

- FIRB Approach: The ECB has removed the option for institutions to justify using a 0% CCF for non-retail exposures through an annual materiality analysis. As a result, institutions not using their own CCF models must now apply the standardised (SA) CCFs under Article 168(8a).

- Negative CCFs: In line with CRR3 (effective January 2025), negative observed CCFs must be floored at zero. However, very high CCFs (over 100%) are not capped, and the ECB provides no specific guidance on handling such outliers. Zanders recommends isolating these into separate grades or pools and reviewing the data in detail to correct any structural issues.
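The flooring rule can be illustrated with a short sketch. It assumes a commonly used definition of the realized CCF (additional drawing between the reference date and default, divided by the undrawn amount at the reference date); the function name and facility figures are hypothetical.

```python
def realised_ccf(drawn_at_reference, drawn_at_default, limit_at_reference):
    """Realized CCF floored at zero and deliberately not capped.

    Balances at the reference date are taken 12 months before default.
    """
    undrawn = limit_at_reference - drawn_at_reference
    if undrawn <= 0:
        raise ValueError("No undrawn amount at the reference date")
    raw = (drawn_at_default - drawn_at_reference) / undrawn
    return max(0.0, raw)  # floor negative observations at zero, no upper cap


# Hypothetical facilities with a limit of 100 and 60 drawn at the reference date.
print(realised_ccf(60.0, 50.0, 100.0))   # balance repaid before default
print(realised_ccf(60.0, 80.0, 100.0))   # part of the undrawn amount used
print(realised_ccf(60.0, 110.0, 100.0))  # drawn beyond the original limit
```

The third case yields a CCF above 100%. This is the kind of observation worth isolating and reviewing for structural data issues rather than capping silently.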

Conclusion

This post highlights the most relevant changes in the ECB guide to internal models for IRB credit risk modeling. Institutions must use at least five years of default data and demonstrate a meaningful correlation between default rates and macroeconomic indicators for LRA DR calibration. The ECB will benchmark calibration windows against the 2008–2018 period. Downturn LGD calibration must cover all relevant components and include yearly elevated LGDs, even if they don’t perfectly match downturn periods. The LGD reference value is now an active challenge in model validation, requiring consistent calculation and action if weaknesses appear. For CCF modeling, risk drivers must use data exactly 12 months before default, 0% CCFs for non-retail exposures are no longer allowed, and negative CCFs must be floored at zero, while high CCFs remain uncapped.

Reach out to our experts John de Kroon and Dick de Heus if you would like a better understanding of what the proposed amendments mean for your credit risk portfolio.

Zanders actively monitors regulatory updates relevant for (credit) risk modeling. Keep a close eye on our LinkedIn and website for more information or subscribe to our newsletters here.

With IFRS 18 introducing fundamental changes to FX reporting, treasuries must act now to prepare for the 2027 compliance deadline.

IFRS 18 introduces significant changes to FX classification and reporting requirements, effective January 2027. Although this adoption date may still feel far away, becoming compliant takes considerable time. Treasury Management Systems and ERP platforms must be updated to support the new operating, investing, and financing categorizations. Issued by the International Accounting Standards Board (IASB) in April 2024, IFRS 18 must be implemented by January 2027 at the latest. The new standard addresses how companies classify foreign exchange (FX) gains and losses, particularly affecting treasury operations.

In the past, for simplicity and pragmatic reasons, many organizations reported all FX results as part of operating income. Under IFRS 18, however, guidance on the treatment of these FX results is more explicit: they must now be categorized into three groups (operating, investing, and financing) depending on the nature of the underlying exposure.

While this is a simple requirement conceptually, certain challenges may exist in creating a holistic transparent view on the FX impacts, particularly when considering the treatment of FX derivatives. This shift means that businesses must reassess their accounting practices and treasury and hedging strategies to ensure compliance.

Key Changes Under IFRS 18:

The primary change in IFRS 18 is the requirement to classify FX gains and losses based on their source:

- Operating: FX results from accounts payable (AP) and accounts receivable (AR) transactions fall into this category.

- Investing: FX fluctuations linked to investments are recorded here.

- Financing: FX changes related to loans and borrowings belong in this section.

Key Date: Full implementation required by January 2027

The P&L impact from FX derivatives should also be considered in these changes, where the selection of P&L category is determined based on the nature of the exposure. IFRS 18 does allow for the P&L classification from FX derivatives to be entirely posted as Operating in the case where it is not practical to uniquely identify the nature of the underlying exposure.
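The classification logic described above could be configured along these lines. This is a simplified sketch: the exposure type labels and function names are hypothetical, and a real TMS or ERP mapping would be driven by the chart of accounts and your accounting policy.

```python
from enum import Enum
from typing import Optional


class Category(Enum):
    OPERATING = "operating"
    INVESTING = "investing"
    FINANCING = "financing"


# Hypothetical exposure labels mapped to the three IFRS 18 categories.
EXPOSURE_CATEGORY = {
    "accounts_payable": Category.OPERATING,
    "accounts_receivable": Category.OPERATING,
    "investment": Category.INVESTING,
    "loan": Category.FINANCING,
    "borrowing": Category.FINANCING,
}


def classify_fx_result(exposure_type: str) -> Category:
    """Classify an FX gain or loss by the nature of the underlying exposure."""
    try:
        return EXPOSURE_CATEGORY[exposure_type]
    except KeyError:
        raise ValueError(f"Unmapped exposure type: {exposure_type}") from None


def classify_derivative_fx_result(hedged_exposure: Optional[str]) -> Category:
    """Follow the hedged exposure; fall back to operating when it cannot be identified."""
    if hedged_exposure is None:
        return Category.OPERATING
    return classify_fx_result(hedged_exposure)


print(classify_fx_result("loan"))
print(classify_derivative_fx_result("loan"))
print(classify_derivative_fx_result(None))  # e.g. a balance sheet hedge
```

The last call shows the fallback: a hedge that cannot be tied to a single exposure lands in operating, even if part of what it hedges is classified as financing.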

This may be a common occurrence, specifically in the example of Balance Sheet FX hedging, where it is not common to hedge the individual elements of the balance sheet separately. While posting to Operating for derivatives is easier to achieve, it would create inconsistencies in categorization between the FX result from hedging, and the FX result from source.

The goal of IFRS 18 is to create clearer and more comparable financial statements across different businesses, therefore the treatment of FX results from hedging activities should be carefully considered.

Treasury’s Role in the Transition

The treasury department will play a crucial role in implementing IFRS 18. While the new classification rules are straightforward, their practical application requires an in-depth review of the drivers of FX exposure and the applied hedging strategies. Determining which department takes primary responsibility for IFRS 18 implementation can be challenging. The cross-functional nature of the project requires clear ownership and accountability structures to ensure successful implementation. This coordination challenge makes a strong case for external advisory support to facilitate collaboration between treasury, finance, accounting, and IT teams.

One major challenge of IFRS 18 is the potential mismatch between FX hedging strategies and accounting classifications. Traditionally, companies have managed FX risk through balance sheet hedging, using a single FX deal to cover multiple exposures. However, with the new classification rules, companies may need to adjust their hedging approach to ensure that hedge results align with the appropriate classification.

For example, if a company hedges a foreign currency loan, and the loan’s FX impact is now categorized under financing, the FX gain or loss from the hedge should also be classified under financing. If it remains under operating income, the company may see artificial volatility in financial statements, which could misrepresent its risk management effectiveness.

Operational and Systemic Adjustments

Beyond policy updates, IFRS 18 requires changes to Treasury Management Systems (TMS) and Enterprise Resource Planning (ERP) systems. These systems must be configured to ensure that FX transactions are correctly classified into operating, investing, or financing categories. This may involve adding new data fields, updating existing reporting structures, or even implementing new hedging processes.

Challenges and Considerations:

Companies may face several key challenges in implementing IFRS 18:

- Instance Structure Differences: Companies must determine how to apply classification rules across different subsidiaries and business units. What counts as operating for a finance company, such as a treasury center, may differ from what counts as operating for a regular business.

- Chart of Accounts Adjustments: Treasury teams must assess whether existing FX hedging strategies need to be revised.

- System Updates: IT teams must modify TMS and ERP systems to support the new classification structure.

- Cross-Department Coordination: Treasury, finance, and accounting teams must work together to ensure a smooth transition.

How Zanders Can Help

Zanders, a leading treasury advisory firm, offers support to companies transitioning to IFRS 18. Our expertise extends beyond compliance, helping organizations develop effective hedging policies, update financial systems, and align their reporting strategies. Our services include:

- Reviewing FX exposure and hedging strategies.

- Identifying and resolving classification challenges.

- Developing a step-by-step plan for IFRS 18 compliance.

- Assisting with system updates and configuration changes in TMS and ERP platforms.

By addressing IFRS 18 proactively, treasury teams can not only comply with the new standard but also enhance their overall risk management approach. Zanders is committed to helping organizations navigate these changes efficiently.

Conclusion

IFRS 18 represents a significant shift in how FX gains and losses are reported and viewed through accounting principles and hedging strategies. While the standard itself is not overly complex, its impact on hedging and financial reporting requires careful planning, BI preparation, and compliance validation.

With the compliance deadline approaching in January 2027, now is the time to act. Zanders is ready to assist companies in this transition, providing both strategic guidance and practical implementation support to ensure a seamless adaptation to IFRS 18.

To find out more about IFRS 18 and key changes for treasury, please contact Jonathan Tomlinson or Mitchell Ponder.

On August 12, 2025, the European Banking Authority (EBA) released its ‘Report on the Use of AML/CFT SupTech Tools’, offering a clear view of how technology is reshaping financial supervision across Europe.

Building on the June 2024 launch of the new EU AML/CFT framework and the creation of the Anti-Money Laundering Authority (AMLA), SupTech (short for Supervisory Technology) now stands as a key driver of more efficient, data-driven, and collaborative supervision.

To inform the report, the EBA surveyed national authorities and worked with the European Commission’s AMLA Task Force to identify trends, challenges, and best practices. In this blog post, we highlight key insights and explore their impact on the financial sector.

Key Insights from the Report

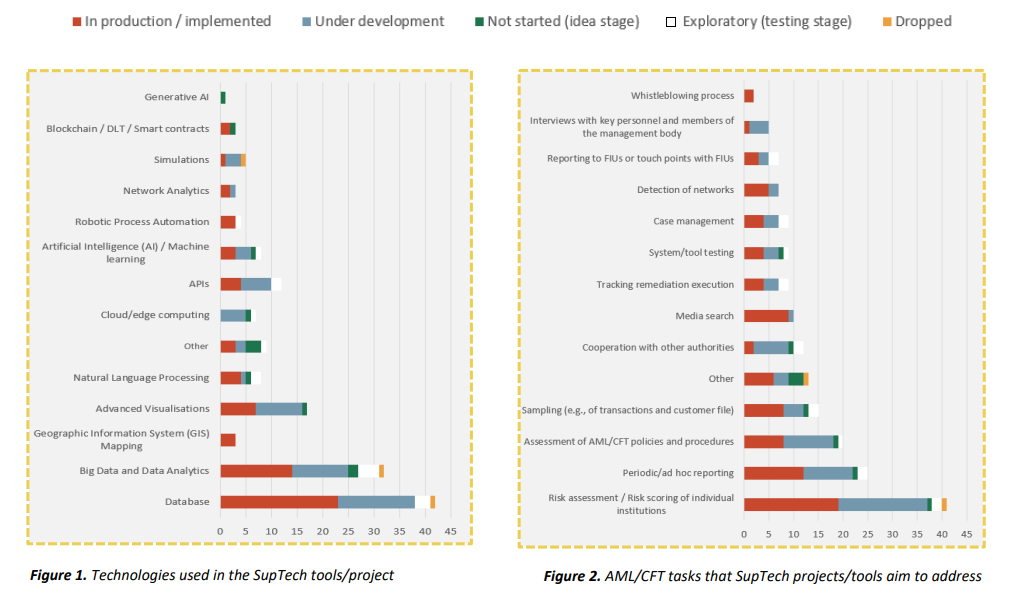

Across the EU, 31 competent authorities reported working on 60 SupTech projects or tools, most of which launched in the last three years. Nearly half are already in production, while the others are in development or still at the idea stage. The figures below demonstrate the technologies used in SupTech tools, along with the AML/CFT tasks they aim to address.

It’s evident from Figure 1 that current efforts focus primarily on improving data quality and scalability, essential foundations for effective SupTech. More advanced technologies like Generative AI, Blockchain, and network analytics are still in early stages but are expected to play a larger role in the future.

On the task side, presented in Figure 2, most tools are geared toward risk assessment, which appears to be the most straightforward application of SupTech. As the technology matures, other areas of AML/CFT supervision may benefit from more advanced capabilities as well.

Advantages and challenges

The EBA’s survey revealed several benefits from current SupTech initiatives, with most projects targeting improvements in data quality, analytics, adaptability, automation, and collaboration through standardization. SupTech enables supervisors to operate more efficiently, respond faster to emerging risks, and make better-informed decisions in a complex financial landscape.

However, fully embracing a data-driven approach comes with challenges. SupTech tools rely heavily on robust IT infrastructure, skilled personnel, and high-quality data. While these tools can help improve data quality by detecting anomalies, they still require reliable input to function effectively.

Legal risks also emerge, particularly around GDPR compliance and accountability for decisions made by opaque algorithmic models. Resistance to adoption may arise due to concerns about job displacement and trust in AI. Additionally, limited collaboration between institutions can lead to duplicated efforts and inefficiencies. Fortunately, the new AML/CFT framework offers a foundation for improved cooperation and information sharing across borders.

How can banks prepare for a successful transition?

Although the EBA’s report is aimed at supervisory authorities, it has important consequences for banks, payment providers, and other obliged entities. SupTech will help supervisors operate more efficiently and gain deeper insights, but it will also raise expectations for the institutions they oversee. Banks should prepare for increased data requirements, more rigorous scrutiny, and pressure to standardize and respond quickly to regulatory changes. While these requirements may pose short-term challenges, they will ultimately support better compliance, risk management, and operational resilience in the long run. In order to get there, Zanders supports institutions in key areas:

- Increased data demands: AI-driven tools allow supervisors to process and analyze more data, requiring institutions to provide cleaner, more structured datasets.

- Increased detail orientation: SupTech tools detect anomalies and patterns faster, meaning institutions must ensure accuracy and consistency in their reporting.

- Standardisation: EU-wide platforms and data-sharing standards will require institutions to align systems and formats for seamless supervision.

- Change management: For SupTech to be successfully implemented, organizations must actively build a digital-first culture and encourage staff to move away from existing processes and mindsets.

- Rapid adaptation: As technology evolves, supervisors will expect institutions to keep pace. Falling behind could lead to compliance gaps.

These challenges require strategic attention and tailored support.

Are you interested in how Zanders can guide your organization through this transition? Reach out to our Partner Sebastian Marban.

Assessing bank readiness for DORA compliance: the key insights from a comprehensive survey

With the Digital Operational Resilience Act (DORA) applying as of January 17th 2025, and the European Union increasingly emphasizing robust digital resilience within the financial sector, DORA has become a critical benchmark for compliance. A recent survey conducted with 23 banks reveals insightful data on their preparedness across various DORA categories. This blog dives into the findings and assesses how well banks are positioned to meet these regulatory standards.

General Requirements: Solid Foundations, Communication Gaps

The survey indicates strong compliance with foundational DORA requirements. Almost all banks have designated management functions for digital operational resilience and documented strategies. However, notable gaps exist in communicating these strategies effectively, as highlighted by the sizable number of banks without comprehensive stakeholder communication plans (12 “yes” vs. 11 “no” responses). Additionally, fewer than half of the respondents have formal ICT risk appetite statements approved by senior management, leaving potential gaps in aligning risk management with organizational tolerance levels.

ICT Risk Management: Comprehensive Yet Evolving

Banks demonstrate proficiency in risk management frameworks with most having formal processes for risk identification and documentation. However, only about half systematically manage emerging and innovative technology risks—a critical aspect in today's evolving digital landscape. Equally concerning is the relative lack of focus on interconnectedness and concentration risks, with only 12 banks integrating these considerations into their risk assessments.

ICT Resilience Testing: Gap Between Basic and Advanced Practices

While regular ICT resilience testing is generally practiced, the adoption of advanced testing methodologies, such as threat-led penetration testing, is limited among the institutions that are required to perform these tests. Variability also exists in the processes for escalating issues and validating results, signifying areas requiring further attention.

ICT Third-Party Risk Management: Variable Partnerships Management

The survey reveals that while vigilance exists in maintaining third-party risk management frameworks, there are significant concerns regarding the strength of contractual safeguards and incident management processes. Fewer than half of the banks have robust exit strategies or cater for geopolitical risks, a critical oversight in managing potential external disruptions.

Incident Reporting: Strong Foundations with Room for Procedural Enhancement

The incident reporting results indicate well-established bases in documentation and reporting processes. However, training in incident reporting procedures remains less uniform, which could impact consistency in handling real incidents.

Business Continuity and Disaster Recovery: Recurring Gaps in Comprehensive Coverage

While the majority of banks report having BCDRPs in place, only 16 ensure comprehensive coverage of all critical business functions. The testing and updating of these plans is similarly underwhelming and has remained largely stagnant, which could hinder timely recovery efforts in case of an outage.

IT-Security: Solid Security Postures with Continuous Improvement Needed

Encouragingly, all respondents have documented ICT security policies, and most banks have appropriate security controls in place. While programs for regular updates in policies and controls are broadly adhered to, continuous improvement through employee training and periodic evaluations of security measures remains essential.

Beyond the Checklist: Embedding True Resilience into Operations

This survey highlights that while the foundations for DORA compliance are well-established within the banking sector, several areas still require strategic enhancements. Bridging communication gaps, enhancing advanced testing, improving third-party engagements, and boosting procedural training will be key to transitioning from foundational compliance to comprehensive resilience.

These study insights serve to underscore not only the importance of regulatory adherence but also the critical need for continuous evaluation and proactive adaptation of digital resilience strategies amidst ever-evolving digital challenges. As banks continue this journey, the collective focus should remain on creating a more adaptive, secure, and resilient digital future.

To find out more about DORA compliance and meeting regulatory standards, please contact our partner Martin Ruf.

With increasing regulatory expectations and evolving market dynamics, a well-structured ALM framework is essential for effective banking book risk management.

Managing banking book risk remains a critical challenge in today’s financial markets and regulatory environment. There are many strategic decisions to be made and banks are having trouble applying homogeneous hedging approaches across their balance sheet. As shown in the EBA’s IRRBB implementation heatmap of last February, hedging strategies and NMD modeling practices still vary significantly between banks. In addition, the EBA expects future developments on CSRBB and DRM. Meanwhile, behavioral risks and rapidly changing interest regimes need to be addressed, while balancing the stability of net interest income and economic value.

Treasury departments are at the heart of managing the banking book, with their ‘ALM framework’ serving as the essential blueprint for banking book management. This framework ensures alignment between risk appetite and business objectives. A well-developed ALM framework provides better insights and enhances understanding of the balance between risk and performance.

But what are the characteristics of a mature ALM framework? What steps can be taken to elevate the maturity of the framework? And how can your framework unlock your full potential? This article explores the components that make up an effective ALM framework and describes what an advanced setup looks like. After inspecting ALM governance, risk frameworks, hedging strategies, ALM modeling and capital & performance management, we offer the opportunity to benchmark the maturity of your own framework against other banks and the ideal setup by filling out this survey.

Governance

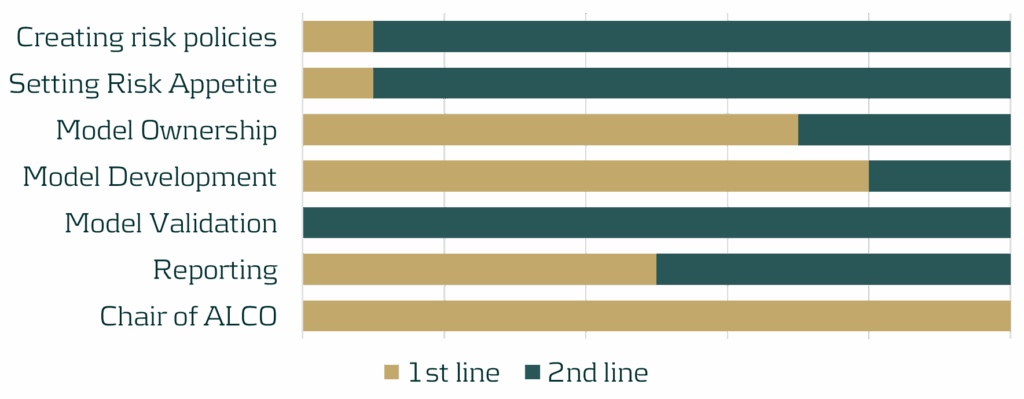

The cornerstone of any effective ALM framework is appropriate governance, much like any well-functioning business activity. Setting up strong governance begins with defining a charter with a clear scope and mandate for the departments involved. It is crucial that the first and second lines of defense have clearly defined roles and that proactive knowledge sharing is the standard. Oversight by senior management is essential across all activities within the framework, and the Asset-Liability Committee (ALCO) should be composed of members from treasury, risk and the business.

Figure 1: Distribution of roles and responsibilities of the first and second line, based on a survey performed by Zanders.

Another critical element of ALM governance is the ALM strategy and the associated policies. The ALM strategy covers how risk and return are balanced, what interest rate position is ideal and how risks are operationally hedged (granularity, frequency and instruments). Typically, banks operate most effectively when the strategy is owned by the treasury department. The strategy should integrate perspectives on interest rate risk, credit spread risk, (intraday) liquidity risk, FX risk and capital, and must be fully aligned with business objectives and overall risk appetite.

The second line should manage the translation of the strategy into comprehensive risk policies covering the same risk types and ensuring alignment with both global and local regulatory frameworks. As part of the overarching policy framework, a risk identification process must highlight emerging risk and feed into the Risk Appetite Setting (RAS). In turn, the RAS needs to define KPIs for guiding daily risk management, specifying the boundaries within which the first line can balance risk and return.

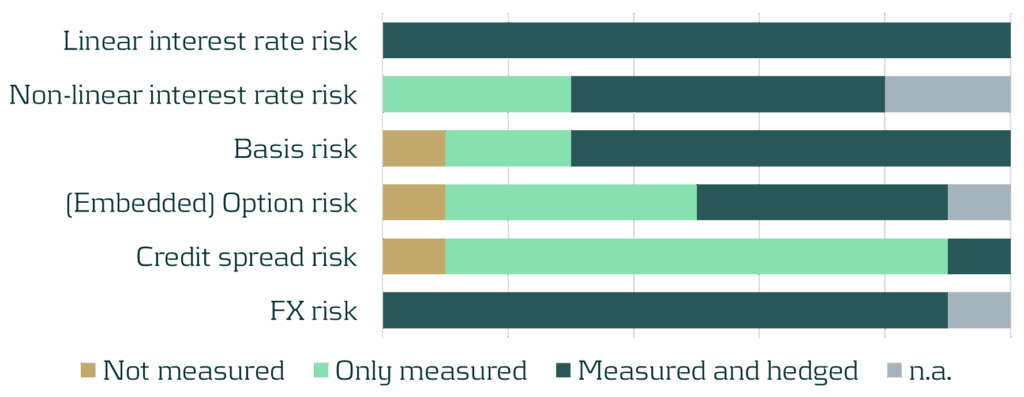

Risk Framework

Beyond sound governance, risk policies are integral to the broader risk framework. Within this framework, it is crucial to make informed decisions on measuring and hedging each individual risk type. Ideally, all risk types are managed within a central ALM system that supports risk dashboarding and stress testing.

Figure 2: Risk scope for a selection of sub-risk types, based on a survey performed by Zanders.

In addition to identifying relevant risks and determining appropriate responses, it is essential to establish an internal operational framework for ongoing management. Centralizing and netting risks in central treasury books is fundamental to an efficient treasury function. While several approaches exist, internal transactions are typically preferred, as they enable accurate measurement of risks over different commercial and/or geographical portfolios.

The strategy for managing interest rate risk in the banking book should ultimately be reflected in a clearly defined target duration of equity. Segregating the structural position into a dedicated book facilitates precise monitoring and agile adjustments to market dynamics and regulatory changes. Market volatility may necessitate revisiting the target based on interest rate expectations, and many banks have been adjusting their target durations accordingly. The structural position is a critical strategic choice in the trade-off between earnings and value stability, and is thereby an essential factor in the hedge strategy.
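To make the notion of a target duration of equity more tangible, the sketch below applies the standard textbook approximation in which the duration of equity follows from the value-weighted durations of assets and liabilities. The function names and the balance sheet figures are hypothetical, and durations are treated as modified durations for simplicity; this is an illustration, not the measurement approach of any particular bank.

```python
def duration_of_equity(assets, asset_duration, liabilities, liability_duration):
    """Approximate duration of equity from balance sheet durations.

    Uses the textbook relation D_E = (D_A * A - D_L * L) / E with E = A - L.
    All inputs are illustrative assumptions.
    """
    equity = assets - liabilities
    if equity <= 0:
        raise ValueError("equity must be positive")
    return (asset_duration * assets - liability_duration * liabilities) / equity


def eve_change(equity, duration, rate_shift):
    """First-order change in economic value of equity for a parallel rate shift."""
    return -duration * equity * rate_shift


# Hypothetical bank: assets of 100 (duration 3.0 years),
# liabilities of 92 (duration 2.5 years), hence equity of 8.
d_e = duration_of_equity(100.0, 3.0, 92.0, 2.5)
delta_eve = eve_change(8.0, d_e, 0.01)  # +100 bp parallel shock

print(f"Duration of equity: {d_e:.2f} years")
print(f"Approximate EVE change for +100 bp: {delta_eve:.2f}")
```

Comparing the computed duration with the target makes the size of the required hedge adjustment explicit: the further the structural position drifts from the target, the larger the first-order sensitivity of economic value to a rate shock.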

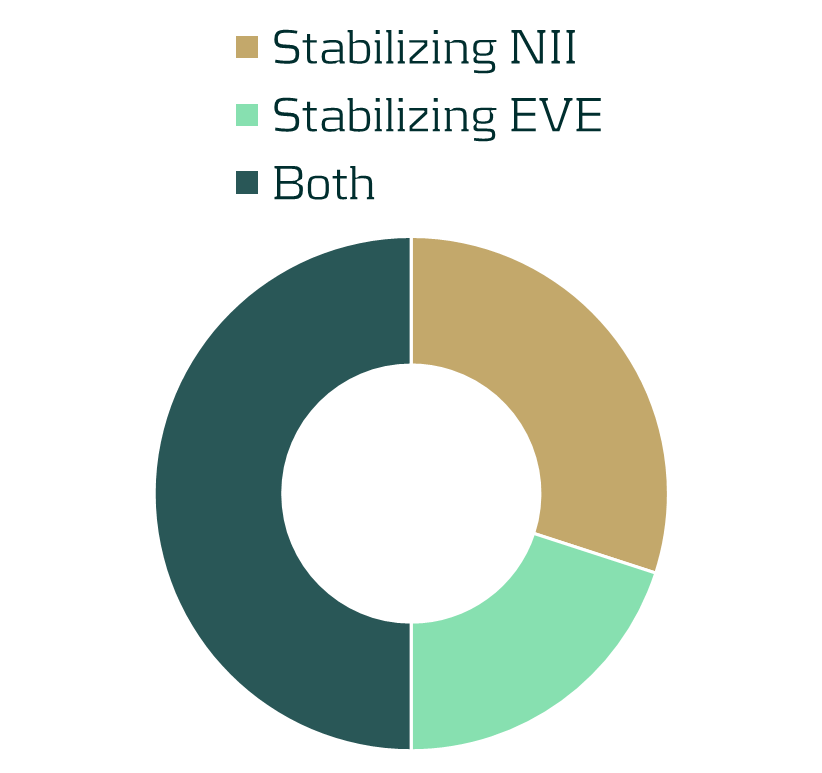

Hedge Strategy

With the risk framework, the treasury strategy, and risk appetite statement as its foundation, a strategy for hedging must be defined. This strategy guides first line processes, stating clear objectives on both earnings and value stability. Striking a balance between these two elements is challenging, but forms the basis for optimizing the balance sheet. The decision to include or exclude margins should be consistent across cashflows and discounting and should be aligned with the primary hedging focus, whether it is stabilizing earnings or value.

Figure 3: Focus of hedging strategies, based on a survey performed by Zanders.

The scope of the hedging strategy must be consistent with the risk scope outlined in the risk framework and encompass the entire balance sheet. The strategy needs to address linear risks, and also explicitly account for non-linear risks that may arise due to convexity or behavioral factors.

While commercial books typically have the objective to stabilize or increase net margins without taking an active position, hedging must be an active steering process. The treasury function should focus on optimizing the economic value of equity and net interest income within defined target limits. It is essential for the hedging process to be dynamic, using real-time analytics to proactively identify opportunities for improvement as market conditions and expectations change. Banks need to make use of scenario planning and predictive modeling to anticipate hedge requirements and adapt accordingly.

Modeling

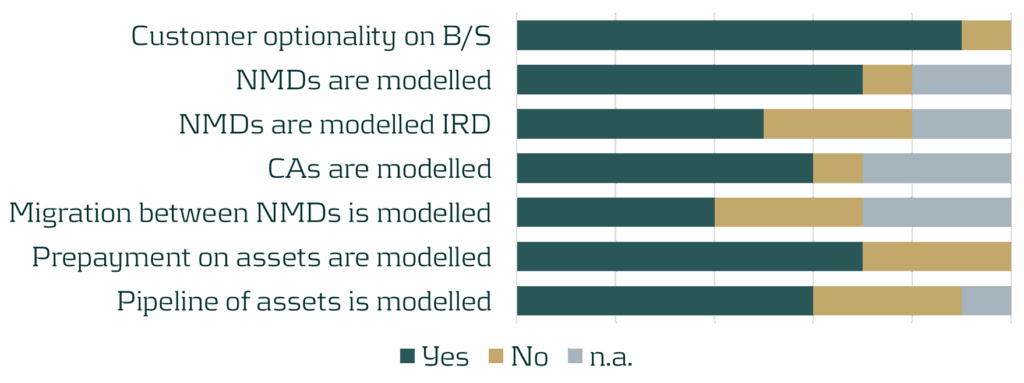

Hedging practices are based on the outcomes of a bank’s models, which should reflect reality as closely as possible. A challenging yet essential aspect of modeling is addressing the optionalities inherent to many financial products. These embedded optionalities need to be modeled consistently for all assets and all liabilities. Ideally, banks have advanced interest rate-dependent behavioral models in place to model the interest rate sensitivity of deposits and loans. Pipeline risk, the migration between different deposit types and potential other behavioral characteristics of products also need to be modeled. These models provide banks with realistic insights into expected cashflows. As customer behavior can vary significantly under different market conditions, banks benefit greatly from simulating and analyzing these changes using stochastic models.

Figure 4: Type of behavioral modeling performed by banks, based on a survey performed by Zanders.

From a liquidity perspective, it is important for banks to use consistent methodologies for short and long-term cashflow forecasting. Additionally, integrating liquidity models, such as those for LCR and NSFR, with liquidity stress testing, offers valuable insights into potential future liquidity needs.

Machine learning is gaining traction within the field of ALM and is becoming an integral part of ALM modeling. Using machine learning for client segmentation is increasingly common and helps in better understanding client behavior. Several machine learning techniques for (reverse) stress testing have been developed, which improve the ability to identify vulnerabilities in balance sheets. Furthermore, predictive analytics helps to optimize balance sheet management, empowering banks to make informed strategic decisions.

Capital and Performance

The final critical elements of strategically steering a bank are capital management and performance measurement. Capital management is a fundamental part of modern-day banking and one of the important factors in balance sheet management. Mishandling capital requirements can significantly impact competitiveness and distort the view of risk-adjusted performance. To manage capital effectively, banks need to identify the ex-ante cost of capital for each transaction and incorporate it into pricing. Capital requirements should be allocated at the transaction level, allowing for accurate calculation of capital usage per portfolio. Ongoing capital monitoring and alignment with stress testing exercises and risk appetite are essential for optimal capital allocation and planning.

An effective Funds Transfer Pricing (FTP) framework is essential to assess risk-adjusted performance at the transaction level and to allocate overall performance across business units. In a mature FTP framework, all products are priced using an internally determined FTP curve. At a minimum, this curve needs to reflect the interest rate and liquidity risks inherent to transactions, but it can be extended to incorporate other types of risk. The FTP curve must be dynamic, adapting to portfolios and market conditions. Moreover, the FTP curve should be governed by senior management, who adjust it as needed to steer the balance sheet through (dis)incentivizing specific products or maturities.
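As a minimal sketch of how such an FTP curve could be assembled, the example below adds a liquidity spread and an optional steering adjustment to an interest rate curve and derives the commercial margin of a transaction. The curve values, the linear interpolation and the names `SWAP_CURVE`, `LIQUIDITY_SPREAD` and `steering_adjustment` are all assumptions made for illustration; they are not market data or a prescribed methodology.

```python
from bisect import bisect_left

# Hypothetical curves: tenor in years -> rate (as a decimal). Not market data.
SWAP_CURVE = {1: 0.020, 2: 0.022, 5: 0.025, 10: 0.028}
LIQUIDITY_SPREAD = {1: 0.001, 2: 0.002, 5: 0.004, 10: 0.006}


def interpolate(curve, tenor):
    """Linearly interpolate a curve, holding it flat beyond the end points."""
    tenors = sorted(curve)
    if tenor <= tenors[0]:
        return curve[tenors[0]]
    if tenor >= tenors[-1]:
        return curve[tenors[-1]]
    i = bisect_left(tenors, tenor)
    lo, hi = tenors[i - 1], tenors[i]
    weight = (tenor - lo) / (hi - lo)
    return curve[lo] + weight * (curve[hi] - curve[lo])


def ftp_rate(tenor, steering_adjustment=0.0):
    """FTP rate = interest rate component + liquidity component + steering add-on."""
    return (
        interpolate(SWAP_CURVE, tenor)
        + interpolate(LIQUIDITY_SPREAD, tenor)
        + steering_adjustment
    )


def commercial_margin(client_rate, tenor, steering_adjustment=0.0):
    """Margin earned by the business unit on a loan after the FTP charge."""
    return client_rate - ftp_rate(tenor, steering_adjustment)


# A hypothetical 3-year loan priced at 4.5% to the client.
print(f"FTP rate: {ftp_rate(3):.4%}")
print(f"Commercial margin: {commercial_margin(0.045, 3):.4%}")
```

Keeping the steering adjustment as a separate term makes it visible how much of the transfer price reflects market inputs and how much reflects a management decision to (dis)incentivize a product or maturity.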

Figure 5: Usage and granularity of FTP frameworks, based on a survey performed by Zanders.

Conclusion

The key to successfully managing banking book risks is an effective ALM framework. By leveraging your ALM framework and ensuring it aligns with the bank’s overall strategy, business objectives and complexity, you can enhance treasury’s performance and effectively manage the increased regulatory attention to IRRBB strategies.

At Zanders, we developed a model to assess the maturity level of a bank’s ALM framework. The model provides valuable insights into the maturity of the individual components and the ALM framework as a whole. This facilitates quick and straightforward benchmarking.

We invite you to complete the survey below and participate in the benchmarking exercise, which should take you less than 10 minutes. We will analyze your answers and share the (anonymized) findings with you.

ALM framework benchmarking survey

Please contact Erik Vijlbrief or Jelle Thijssen for more information.

The EBA is proposing key changes to its definition of default guidelines, with implications for credit risk practices.

On July 2nd, the European Banking Authority (EBA) published a Consultation Paper proposing amendments to its 2016 Guidelines on the application of the definition of default (DoD). As part of the consultation process, open until 15 October 2025, the credit risk specialists at Zanders will submit a formal response, leveraging our extensive experience in DoD regulation and implementation.

In this article, we share our perspective on three of the EBA’s proposed amendments, focusing on the potential impact and implementation challenges for institutions:

- We expect that a shorter probation period for forbearance measures (those that only alter the repayment schedule and lead to an NPV loss of no more than 5%) will give banks an incentive to opt for those types of measures rather than the most sustainable ones.

- We recommend that the EBA implement the EU-wide DoD guidelines it considered for payment moratoria (similar to those for COVID-19), whereas the EBA proposes not to. Zanders would welcome permanent moratoria guidelines, as they would clarify whether governmental moratoria introduced for climate-risk-related natural disasters should be regarded as forbearance.

- We are concerned that the proposal to consider material arrears on non-recourse factoring exposures up to 90 (instead of 30) DPD as technical past due situations could result in an undesired increase in the percentage of IFRS stage 1 exposures migrating directly to stage 3 (impairment).

The following chapters elaborate on these three proposed amendments in more detail.

Forbearance

The first amendments addressed in the EBA’s consultation paper (CP) are related to forbearance. The supervisory authority explains that an increase of the 1% threshold for a diminished financial obligation (DFO) to 5% was considered for certain forbearance measures. This follows from the European Commission’s mandate that the update of the EBA guidelines on DoD “… shall take due account of the necessity to encourage institutions to engage in proactive, preventive and meaningful debt restructuring to support obligors.”1

In the EBA’s current DoD guidelines (DoD GL), a forbearance measure leading to a 1% or more DFO results in a default classification, which could discourage institutions from applying these measures. However, the CP purposefully proposes to exclude an increase to a 5% DFO threshold, since institutions can already implement strict(er) forbearance definitions (i.e. for concession, financial difficulty) to prevent undue default classifications. Instead, the EBA proposes to shorten the probation period from 12 to 3 months for forbearance measures that (1) only lead to suspensions or postponements, and not e.g. to changes to the interest rate or exposure amounts, and (2) lead to a DFO loss of less than 5%.
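The mechanics of the DFO test can be illustrated with a short sketch. It computes the DFO as the relative loss in net present value between the original and the restructured cash flows, both discounted at the original effective interest rate, and then applies a simplified reading of the proposed probation rule as summarised above. The cash flows, the flat annual discounting and the function names are assumptions for illustration only and do not reproduce the legal text.

```python
def npv(cash_flows, annual_rate):
    """Present value of (time_in_years, amount) cash flows at a flat annual rate."""
    return sum(amount / (1 + annual_rate) ** t for t, amount in cash_flows)


def diminished_financial_obligation(original, restructured, original_eir):
    """DFO = (NPV0 - NPV1) / NPV0, both discounted at the original effective rate."""
    npv0 = npv(original, original_eir)
    npv1 = npv(restructured, original_eir)
    return (npv0 - npv1) / npv0


def probation_months_under_proposal(dfo, schedule_change_only):
    """Simplified reading of the proposal: 3 months if the measure only
    suspends or postpones payments and the DFO stays below 5%, else 12."""
    if schedule_change_only and dfo < 0.05:
        return 3
    return 12


# Hypothetical loan: three annual payments of 40, original effective rate 5%.
original = [(1, 40.0), (2, 40.0), (3, 40.0)]
# Hypothetical measure: every payment postponed by one year, no compensation.
postponed = [(2, 40.0), (3, 40.0), (4, 40.0)]

dfo = diminished_financial_obligation(original, postponed, 0.05)
print(f"DFO: {dfo:.2%}")
print("Probation (months):", probation_months_under_proposal(dfo, True))
```

In this hypothetical case the one-year postponement produces a DFO of roughly 4.8%, which breaches the 1% threshold but stays below 5%, so the measure would fall within the scope of the shorter probation period.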

This treatment will likely incentivize institutions to choose forbearance measures in scope of the shorter probation period, rather than the ones that would be optimal for a “sustainable performing repayment status” of the obligor. The latter would be in line with the EBA’s own requirements on the management of forborne exposures (Par. 125 EBA/GL/2018/06).

Furthermore, the fact that the EBA does not set the “predefined limited period of time” for the measures in scope could lead to RWA variability, as some institutions may apply the shorter probation period to longer-duration forbearance measures than others. For example, if Bank A sets the limited period of time to 6 months, it can apply the shorter probation period more often than Bank B, which sets the period at 3 months.

Finally, the proposal appears to favour granting the forbearance measures in scope to obligors with short-term (rather than structural) financial difficulties. That is, the EBA explains that the forbearance measures in scope of the shorter probation period treatment “… would most likely be viable for obligors in temporary financial difficulties”. The shorter probation period would then lead to an earlier return to performing status for obligors to which the forbearance measures in scope are extended, which results in a more favourable RWA for these obligors. Alternatively, a distinct probation period (or even a higher DFO threshold) could be proposed for obligors in short-term financial difficulties, as defined in Paragraph 129(A) of the EBA’s guidelines on Management of Forborne Exposures. This would also achieve the EBA’s goal, without influencing institutions’ decision about which forbearance measure to apply.

It should be mentioned that while a large RWA impact is not anticipated from the establishment of a distinct probation period, there will likely be a significant implementation burden associated with the change. This is because multiple forbearance measures are usually adopted in tandem, so different probation periods must be tracked concurrently. In addition, the modification would need to be implemented retroactively, as credit risk models will need to be recalculated using adjusted historical data in order to account for this change. In the past, retroactively modifying the probation period has proven to be time-consuming and expensive.

Legislative payment moratoria

In light of the COVID-19 crisis, the EBA published guidelines in 2020 on handling payment moratoria introduced by governments as a means of financial aid in the context of forbearance. Under EBA/GL/2020/02 and its amendments in .../08 and …/15, certain COVID-19 measures in scope of the guidelines, e.g. those allowing a grace period, would not in themselves require institutions to classify the exposures as forborne.

Even though the EBA considered introducing guidelines for potential future moratoria, the CP proposes not to make these changes. As one of the arguments against new moratoria guidelines, the EBA remarks that moratoria in themselves will not result in a DFO loss of more than 1%, and hence will not lead to defaults. The EBA implies that introducing new moratoria guidelines would therefore be redundant. The EBA is also worried about RWA variability that might arise if governments declare legislative moratoria for crises in their jurisdictions. That is, the EBA expects that intra-EU comparability of RWA across institutions might be compromised.

Adding the considered guidelines describing when moratoria should lead to forbearance to the amended DoD GL is advisable, even though the EBA proposes in the CP to remove them. Zanders challenges the argument that guidelines describing when moratoria do not lead to forbearance are unnecessary because the 1% DFO threshold will not be met. Moratoria guidelines would still determine when the forborne status should be assigned to exposures to which moratoria are applied. This forborne status impacts the default status later on, both for performing and defaulted exposures. The reason is that if performing forborne exposures become 30 days past due within 24 months after receiving the forborne status, a defaulted status should be assigned. If the moratoria do not lead to a forborne status, these exposures should instead default only after becoming 90 days past due on a material amount. Furthermore, for defaulted exposures, it is important to understand when moratoria result in the forborne status and when they do not, because a forborne defaulted exposure needs a substantial payment and an extended cure period to exit default. Zanders would therefore be in favor of EBA guidelines that specify when moratoria should result in a forborne status and when this is not necessary.

As for the RWA variability identified by the EBA itself, stringent criteria could be introduced prescribing which moratoria are in scope of the amended DoD GL. As the EBA also describes, payment moratoria could occur more often as a governmental means of financial aid in light of climate-risk-related natural disasters. In contrast to ad hoc rules for each specific crisis, such as observed during the COVID-19 pandemic, Zanders contends that permanently applicable moratoria instructions in the updated DoD GL will eventually lead to a more stable RWA impact when economic or natural catastrophes occur.

Days past due for non-recourse factoring

Paragraph 23(D) of the current version of the DoD guidelines stipulates that, in the specific situation of non-recourse factoring, cases in which the arrears materiality threshold is breached but none of the receivables is more than 30 days past due (DPD) should be treated as technical past due situations. Non-recourse factoring refers to the situation where the institution (e.g. a bank) has bought receivables from its client (e.g. service provider) owed by the debtor (e.g. service consumer). The idea behind the 30 DPD is that the DPD counter might continue to increase due to a consecutive overlap in non-payments of invoices, lengthy administrative processes, and a low degree of control of the institution over the invoices.

The CP proposes to allow for up to 90 DPD to be considered technical past due situations, in response to the industry's request that the EBA be more lenient in the DoD guidelines for non-recourse factoring. This is motivated by the fact that many corporates have at least one invoice past due more than 30 days, while being rated investment grade.

Although Zanders understands corporates’ need for more leniency, allowing for up to 90 DPD to be recognized as technical past due could make stage 2 obsolete for IFRS provisioning models. That is, if material arrears on non-recourse factoring exposures should be considered technical past due for situations up to 90 DPD, the said exposures will move from 0 DPD to 91 DPD in one day. The additional lenience would break the desired flow of exposures transitioning from IFRS stage 1 (performing), first towards stage 2 (significant increase in credit risk), before going to stage 3 (credit impaired). This stage migration effect could be mitigated by another stage 2 trigger: forbearance. However, the institution cannot apply forbearance measures to a sold invoice that is due to the institution’s client, rather than due to the institution itself. Therefore, as a stage 2 trigger, forbearance cannot compensate for the lack of the 30 DPD trigger in the particular scenario of non-recourse factoring risks.
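The stage migration effect described above can be illustrated with a deliberately simplified sketch. It assumes that staging is driven purely by days past due (more than 30 DPD for stage 2, more than 90 DPD for stage 3) and that the DPD counter is treated as zero while arrears qualify as technical past due. Both assumptions, as well as the function names, are illustrative simplifications; actual IFRS 9 staging relies on additional triggers.

```python
def ifrs9_stage(days_past_due, technical_limit):
    """Stage implied by DPD alone, given a technical past due limit.

    Arrears up to `technical_limit` days are treated as technical past due,
    so the effective DPD counter stays at zero until the limit is exceeded.
    """
    effective_dpd = 0 if days_past_due <= technical_limit else days_past_due
    if effective_dpd > 90:
        return 3  # credit impaired
    if effective_dpd > 30:
        return 2  # significant increase in credit risk
    return 1  # performing


def stage_path(technical_limit, horizon=120):
    """Stages visited by an exposure whose arrears age one day at a time."""
    return [ifrs9_stage(day, technical_limit) for day in range(horizon + 1)]


for limit in (30, 90):
    visited = sorted(set(stage_path(limit)))
    print(f"Technical past due limit {limit} DPD -> stages visited: {visited}")
```

With a 30 DPD limit the exposure passes through stage 2 before reaching stage 3, whereas with a 90 DPD limit it jumps from stage 1 directly to stage 3 in this stylised setting.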

Zanders proposes to find a balance between leniency on DoD guidelines and stage migrations, by increasing the 30 days threshold. The proposed number of days should be based on an analysis of non-recourse factoring portfolios from a representative sample of supervised institutions. This analysis should then strike a balance between the average observed days past due of invoices sold on the one hand and the representativeness of IFRS stage transitions on the other hand. Zanders is convinced that amending DoD GL based on this analysis will prevent the undesired impact on IFRS provisioning models and will better fit European corporate invoicing practice.

Conclusion

In this post we analysed three proposed amendments from the published Consultation Paper, in which the European Banking Authority (EBA) proposes amendments to its 2016 Guidelines on the application of the definition of default (DoD). Alternatives are suggested for all three, as the proposed amendments leave room for improvement.

Reach out to our experts John de Kroon and Dick de Heus, if you are interested in getting a better understanding of what the proposed amendments mean for your credit risk portfolio.

We will continue to monitor the progress of the Consultation Paper. Keep a close eye on our LinkedIn and website for more information, or subscribe to our newsletters here.

Citations

- Article 178(7) CRR as amended by Regulation (EU) 2024/1623 (CRR3).

Discover how AI agents are transforming risk management by making advanced analytics more accessible, efficient, and intelligent.

Artificial intelligence (AI) is advancing rapidly, particularly with the emergence of large language models (LLMs) such as Generative Pre-trained Transformers (GPTs). Yet, in quantitative risk management, the perceived utility of these technologies remains relatively narrow. Most current applications focus on technical use cases, such as code autocompletion within Integrated Development Environments (IDEs), to boost productivity for developers and quantitative analysts. While valuable, these uses only hint at AI’s broader potential. Limiting AI to technically knowledgeable users overlooks opportunities to empower a wider range of stakeholders, including those without programming skills.

In this article, we explore AI agent frameworks, highlight their potential to enhance various banking functions, and share our thoughts on key design considerations when using agents in risk-sensitive environments.

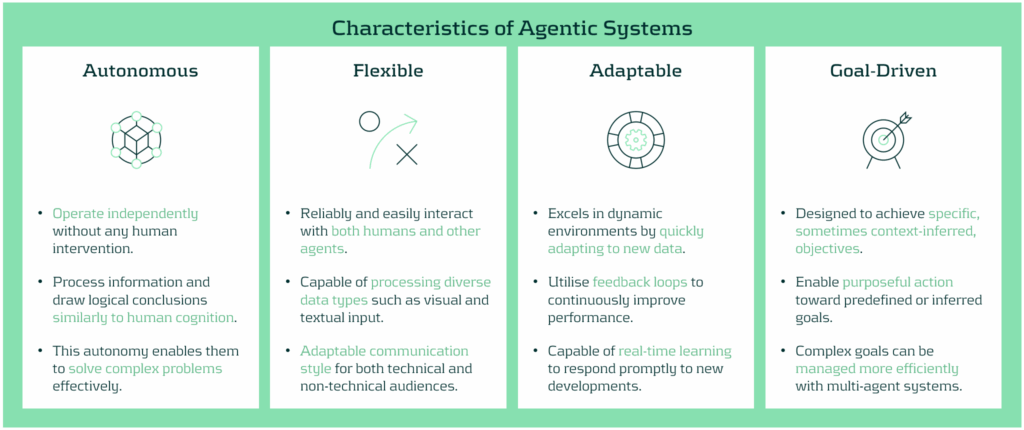

What exactly are AI agents?

While the definition of an AI agent may vary depending on the specific use case, agents are generally characterised by a high degree of autonomy and a goal-oriented design. A recurring theme in the development of these systems is their ability to operate independently across entire analytical workflows. This marks a shift in how AI is utilised - transforming models from passive tools or advanced search engines into active, decision-making agents capable of driving end-to-end processes.

In the context of risk management, these workflows might include executing models, validating outputs, conducting ongoing monitoring and review, running sensitivity analyses or stress tests, and generating model performance reports. Interestingly, these actions can all be initiated by a simple trigger, often in the form of a natural language prompt - a method that has become increasingly familiar. Unlike conventional systems designed for single tasks, agents are built to reason, decide, and act across multiple steps, adapting to requirements with flexibility.

A typical agent architecture consists of five core components:

1- Interface Layer / Trigger: Translates business-level questions (e.g., “What’s the impact of a 25% increase of default probability on risk-weighted assets?”) into executable workflows, enabling non-technical users to trigger complex analyses.

2- Input and Data Processing: Preprocesses and transforms input data or outputs from other checkpoints into structured data that can be used in the agent’s decision-making process.

3- Memory & Context Manager: Maintains a record of prior steps, decisions, and user inputs to guide multi-stage processes intelligently and retain context over series of interactions.

4- Tool Integrator: Connects to and uses various tools (such as Python environments, databases, APIs, and model libraries) to perform technical tasks. The agent dynamically works out which tool is relevant for executing specific tasks based on predefined instructions.

5- Large Language Model (LLM): Determines the sequence of actions needed to achieve a specific goal, e.g. “Backtest this IRB model under a recession scenario”. In this example, the LLM would identify actual periods spanning a receding economy and use the corresponding data to execute the model and return the results.

This architecture allows AI agents to operate like digital collaborators, fetching and processing data, visualising results, and helping to explain patterns that we may otherwise miss - all without requiring users to interact with a single line of code.
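The five components can be sketched in a few lines of Python. In the example below, a rule-based `plan` function stands in for the LLM so that the sketch runs without any external service, and the tools compute a simple expected loss rather than risk-weighted assets. The portfolio figures, tool names and prompt parsing are all hypothetical and only meant to show how the pieces fit together.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Memory:
    """Memory and context manager: keeps a record of executed steps."""
    steps: list = field(default_factory=list)

    def record(self, step, result):
        self.steps.append((step, result))


# --- Tools the agent can call (tool integrator). All data is hypothetical. ---
def load_portfolio(_state):
    # (exposure at default, probability of default, loss given default)
    return [(1_000_000, 0.02, 0.45), (500_000, 0.05, 0.40)]


def stress_pd(state):
    factor = 1 + state["pd_shock"]
    return [(ead, min(prob * factor, 1.0), lgd)
            for ead, prob, lgd in state["load_portfolio"]]


def expected_loss(state):
    return sum(ead * prob * lgd for ead, prob, lgd in state["stress_pd"])


TOOLS = {"load_portfolio": load_portfolio,
         "stress_pd": stress_pd,
         "expected_loss": expected_loss}


def parse_shock(prompt):
    """Input processing: extract a percentage shock from the prompt."""
    match = re.search(r"(\d+(?:\.\d+)?)\s*%", prompt)
    return float(match.group(1)) / 100 if match else 0.0


def plan(prompt):
    """Stand-in for the LLM: maps a question to a sequence of tool calls."""
    if "default probability" in prompt.lower():
        return ["load_portfolio", "stress_pd", "expected_loss"]
    raise ValueError("no plan available for this prompt")


def run_agent(prompt):
    """Interface layer: turns a business question into an executed workflow."""
    memory = Memory()
    state = {"pd_shock": parse_shock(prompt)}
    for step in plan(prompt):
        state[step] = TOOLS[step](state)
        memory.record(step, state[step])
    return state["expected_loss"], memory


question = "What's the impact of a 25% increase of default probability on expected loss?"
result, memory = run_agent(question)
print(f"Stressed expected loss: {result:,.0f}")
print("Steps executed:", [name for name, _ in memory.steps])
```

In a real deployment the planner would be an LLM constrained to the registered tools, which is what keeps the workflow auditable: every step the agent takes is a named tool call recorded in memory.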

Benefits of AI Agents Across Different Banking Functions

Beyond improving information access, AI agents deliver strategic benefits through automation, broader access to analytical tools, faster decision-making, and the removal of process bottlenecks. Here’s how agentic solutions support various stakeholders:

- Front Office (Trading, Structuring, Portfolio Management): Enhance front-office functions including pre-trade analysis, data acquisition and processing, continuous market monitoring, and rapid trade execution. By integrating structured and unstructured data, ranging from market sentiment to fundamental and technical indicators, the agents allow trading and investment professionals to make faster, more informed decisions that are grounded in a holistic view of market conditions.

- Risk Modeling and Analytics Teams: Aid modelers to accelerate prototyping and calibration by offloading repetitive tasks such as parameter sweeps, benchmarking, or sensitivity runs. Agents can also assist with documentation and help iterate on design logic more efficiently, freeing up time for more complex problem solving.

- Model Risk Management (MRM): Streamline model validation through automation and a system designed to enhance efficiency and reliability. The system can independently replicate results from model execution, generate challenger models, and document testing steps, offering a transparent and auditable workflow that strengthens governance and reduces approval timelines.

- Risk Control and Regulatory Reporting: Automate aspects of stress testing and capital reporting for control functions. By recalculating model results under different model assumptions, maintaining traceable logic, and generating consistent documentation, the agents help ensure model results align with regulatory standards.

Ensuring Trust and Quality with AI Agent Solutions: Oversight, Governance, and Guardrails

As promising as AI agents are, their deployment in risk-sensitive environments must be accompanied by robust controls. Key design considerations include:

- Security: Solutions rely only on credible and approved AI models or models that have demonstrated high safety for institutional use. All other components are designed in Python, eliminating risks that may be posed by third-party systems and processes.

- Data Governance: Agents only access approved, secure data sources, with permissions and version control strictly enforced. Where privacy is critical, data anonymisation or summarisation techniques can be applied.

- Explainability: Transparency is ensured through well-defined workflows, step-by-step process documentation, and audit trails to help stakeholders understand how decisions are made.

- Scope Boundaries: Agents operate within clearly defined limits (e.g., executing but not creating new models). A human-in-the-loop approach is used for material-risk decisions, while lower-risk processes may be fully autonomous.

- Validation: Like any model, agents undergo rigorous testing for accuracy, consistency, and robustness, especially in edge cases. By applying the traditional “three lines of defense” model to AI agents, oversight and accountability are embedded into their lifecycle.
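Some of these considerations, in particular scope boundaries, human-in-the-loop approval and audit trails, can be expressed as a thin control layer around the agent. The sketch below is one possible shape of such a layer; the action names, the split between material and non-material actions and the `Guardrail` class are hypothetical and would need to reflect an institution's own policies.

```python
from dataclasses import dataclass, field

# Hypothetical policy: what the agent may do, and what needs human sign-off.
ALLOWED_ACTIONS = {"execute_model", "run_sensitivity", "generate_report"}
MATERIAL_ACTIONS = {"execute_model"}


@dataclass
class Guardrail:
    """Checks each requested action and logs the decision for auditability."""
    audit_trail: list = field(default_factory=list)

    def authorise(self, action, approved_by=None):
        if action not in ALLOWED_ACTIONS:
            decision = "rejected: out of scope"
        elif action in MATERIAL_ACTIONS and approved_by is None:
            decision = "pending: human approval required"
        else:
            decision = "allowed"
        self.audit_trail.append(
            {"action": action, "approved_by": approved_by, "decision": decision}
        )
        return decision


guardrail = Guardrail()
print(guardrail.authorise("create_model"))                     # outside the agent's scope
print(guardrail.authorise("execute_model"))                    # material, no sign-off yet
print(guardrail.authorise("execute_model", approved_by="MRM")) # material, signed off
print(guardrail.authorise("run_sensitivity"))                  # lower risk, autonomous
```

Because every decision is appended to the audit trail, including rejections, the same structure supports both the explainability and the validation requirements listed above.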

Conclusion

AI agents offer enormous potential to drive automation and expand access to analytical tools, especially for non-technical stakeholders. This facilitates deeper integration of business and regulatory expertise into processes across trading, reporting, and model development. When built with strong governance, robust data controls, and transparent logic, AI agents don’t just support critical workflows, they improve them.

At Zanders, we support clients in understanding how AI agents can benefit their risk management frameworks to unlock operational efficiency and expand access to advanced analytics. For more information on how Zanders can help you to utilize the power of AI agents, contact Dilbagh Kalsi (Partner) or Stanley Nwanekezie (Manager).

Cash agility plays an increasingly important role in how private equity firms manage growth, risk, and execution.

In an industry where growth is often measured in multiples, and value creation is expected to be both scalable and repeatable, operational excellence is no longer a supporting function—it’s a strategic enabler. Yet one of the most fundamental enablers of value creation remains underdeveloped across many private equity-backed businesses: the financial value chain.

This system—spanning everything from working capital and liquidity to payments, banking, and treasury technology—often determines whether a company can scale without friction, execute M&A efficiently, or respond to volatility with speed. And at the heart of that system lies a single objective: cash agility.

Why Cash Agility Matters in PE

Private equity firms have long emphasized growth acceleration, M&A, and operational leverage as core value drivers. These remain powerful levers—but they are increasingly vulnerable to delays, integration challenges, and market-driven volatility. What’s less volatile, and arguably more repeatable, is a firm’s ability to control and redeploy its own capital faster.

Cash agility describes the capability to mobilize internal liquidity—whether to seize investment opportunities, fund strategic initiatives, or manage downside risks—without unnecessary friction or reliance on external capital. It represents the intersection of visibility, control, and speed across the financial value chain.

In this sense, cash agility is about creating an institutional capability to convert operational execution into strategic flexibility.

The Financial Value Chain: A Missed Opportunity

In many portfolio companies, the financial value chain is fragmented. Processes have evolved piecemeal, often in response to tactical needs rather than strategic planning. Treasury sits apart from commercial functions. Working capital is optimized through isolated projects. Systems don’t talk to each other. Spreadsheets fill the gaps between data, decision, and execution.

As a result, CFOs and PE sponsors alike struggle with basic questions:

How much cash is available across the group today? Where is it trapped? Can it be upstreamed, invested, or deployed without delay?

In our recent project with a mid-market portfolio company, the finance team had to manually consolidate bank balances from over 200 accounts across 30 entities to produce a weekly liquidity report—consuming hours of effort and often resulting in outdated insight. The company had been growing fast through M&A, but its cash visibility had not kept pace. This created real constraints when the group needed to move quickly on a bolt-on acquisition, forcing reliance on external bridge financing and delaying execution by weeks.
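To make the consolidation step concrete, the weekly report described above boils down to converting each account balance into a reporting currency and summing by entity. The following is a minimal sketch only: the entity names, account IDs, balances, and FX rates are hypothetical sample values, and in practice the inputs would come from automated bank feeds rather than a hard-coded list.

```python
from collections import defaultdict
from decimal import Decimal

# Hypothetical sample data: (entity, account_id, currency, balance).
BALANCES = [
    ("Entity A", "ACC-001", "EUR", Decimal("1200000.00")),
    ("Entity A", "ACC-002", "USD", Decimal("500000.00")),
    ("Entity B", "ACC-003", "GBP", Decimal("250000.00")),
    ("Entity B", "ACC-004", "EUR", Decimal("-100000.00")),
]

# Hypothetical FX rates into the reporting currency (EUR).
FX_TO_EUR = {
    "EUR": Decimal("1"),
    "USD": Decimal("0.90"),
    "GBP": Decimal("1.20"),
}


def consolidate(balances, fx_rates):
    """Return (per-entity totals, group total) in the reporting currency."""
    per_entity = defaultdict(Decimal)  # Decimal() starts each total at 0
    for entity, _account, currency, amount in balances:
        if currency not in fx_rates:
            # Fail loudly rather than silently dropping a balance.
            raise KeyError(f"No FX rate for {currency}")
        per_entity[entity] += amount * fx_rates[currency]
    group_total = sum(per_entity.values(), Decimal("0"))
    return dict(per_entity), group_total


per_entity, group_total = consolidate(BALANCES, FX_TO_EUR)
for entity, total in sorted(per_entity.items()):
    print(f"{entity}: {total:,.2f} EUR")
print(f"Group: {group_total:,.2f} EUR")
```

Using `Decimal` rather than floating point avoids rounding drift when many balances are summed. A missing FX rate raises an error so that a gap in the data is visible instead of quietly understating group liquidity.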

This scenario is common across mid-sized and even large PE-backed businesses. And while every firm understands the importance of “cash control,” few have turned that awareness into a repeatable capability.

From Fragmentation to Agility: What Good Looks Like

Building cash agility starts with aligning the components of the financial value chain into a cohesive, strategically designed operating model. This typically includes:

- Real-time cash visibility across all entities and currencies, ideally enabled through TMS/ERP integration and automated bank feeds.

- Forecasting processes linked to operational and commercial drivers, integrated with actual cash positions—offering a forward-looking, actionable view of liquidity rather than a static snapshot.

- Banking architecture designed for mobility over rigidity—supporting automated sweeps, intercompany lending, and minimal account fragmentation.

- Payment processes embedded with control but freed from manual bottlenecks—using centralized workflows, audit trails, and fraud prevention tools.

- Capex and working capital governance that prioritizes ROI, liquidity impact, and execution timing over pure budget conformance.

- Cash flow management as a continuous fitness program for liquidity—driving productivity, improving free cash flow, and delivering more sustainable performance than reactive working capital initiatives alone.
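As an illustration of the automated sweeps mentioned in the list above, a target-balance sweep compares each sub-account with a target level and moves the difference to or from a header account. The sketch below is a simplified assumption of how such a rule could be expressed: the account names, balances, and single shared target are hypothetical, all accounts are assumed to be in one currency, and real cash pooling structures are configured with the bank and subject to legal and tax constraints that are not modeled here.

```python
from decimal import Decimal


def plan_sweeps(balances, target):
    """Return the transfer needed for each sub-account to reach the target.

    Positive amounts move cash from the sub-account to the header account;
    negative amounts fund the sub-account from the header account.
    Accounts already at the target are omitted.
    """
    transfers = {}
    for account, balance in balances.items():
        delta = balance - target
        if delta != 0:
            transfers[account] = delta
    return transfers


# Hypothetical end-of-day balances in a single currency.
balances = {
    "SUB-1": Decimal("150000"),
    "SUB-2": Decimal("20000"),
    "SUB-3": Decimal("50000"),
}

transfers = plan_sweeps(balances, target=Decimal("50000"))
net_to_header = sum(transfers.values(), Decimal("0"))

for account, amount in transfers.items():
    direction = "to header" if amount > 0 else "from header"
    print(f"{account}: {abs(amount):,.2f} {direction}")
print(f"Net cash concentrated in header account: {net_to_header:,.2f}")
```

In this example, SUB-1 sends 100,000 to the header account, SUB-2 receives 30,000 from it, and SUB-3 is left untouched, leaving 70,000 concentrated centrally.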

For PE investors, the ultimate test of these systems isn’t operational elegance. It’s whether they enable faster execution, more efficient capital deployment, and consistent delivery of the investment thesis.

Common Bottlenecks

Across Zanders’ private equity engagements, several recurring issues tend to slow progress toward cash agility:

- M&A Complexity: Acquisitions bring in diverse systems, bank setups, and control environments. Without a clear treasury integration playbook, complexity compounds.

- Underinvestment in Treasury: Many companies have no dedicated treasury function, or treat it as a back-office necessity even though the business case for investing in it is strongly positive. This leads to disjointed tools, lack of ownership, and reactive problem solving.

- Disconnected Systems: ERP, TMS, and bank portals often operate in isolation. Without an integrated architecture, data is delayed, duplicated, or distorted.

- Legacy Banking Landscapes: Companies often maintain outdated banking setups, including hundreds of rarely used accounts or manual payment processes that create risk and inefficiency.

- Static Forecasting: Forecasts are built manually, reviewed infrequently, and rarely integrated into daily cash decisions.

Solving these challenges requires more than a few process tweaks. It demands a step change in how financial operations are conceived, designed, and executed.

Case in Point: When Financial Architecture Drives Value

In one engagement with a global consumer technology leader generating over $40 billion in annual revenue, Zanders helped transform an inherited web of disconnected financial operations into a single, scalable treasury architecture. The company had expanded rapidly, but its cash remained fragmented across geographies and systems. We centralized liquidity into a unified control structure, redesigned the firm’s bank strategy and FX risk setup, and implemented automated cash processes through an in-house bank.

The results were transformational. Annual costs dropped by $18 million, and over $8 billion in trapped cash was unlocked for reinvestment. Treasury operations became leaner, with over $10 million in savings achieved through rationalized account structures and reduced IT system costs. Perhaps more strategically, the business freed up $17 to $35 million in withheld taxes by migrating treasury entities—capital it could now allocate to growth, innovation, or debt reduction. What had once been an operational bottleneck became a value multiplier.

Another engagement with a multinational education group revealed the power of standardization at scale. With 80+ campuses across continents and multiple ERP systems, treasury processes were fragmented and reactive. Zanders introduced a centralized target operating model, implemented a TMS integrated with the group’s ERP infrastructure, and deployed straight-through-processing to streamline reporting and control.

As a result, the company now saves $3.8 million annually, has released $1.7 million in working capital, and is projected to accumulate a $14 million benefit over a five-year horizon. More importantly, it has increased cash forecasting accuracy, reduced manual workload, and enhanced operational resilience, capabilities that will serve the group across future growth cycles.

Cash Agility: A Lever for Repeatability

For private equity investors, the question isn’t whether cash agility creates value. It’s whether it creates value that’s consistent, transferable, and visible to the next buyer.

Too often, value creation initiatives focus on EBITDA expansion—growth, margin improvement, pricing, procurement. These levers are critical, but they are also cyclical, competitive, and often constrained by talent or timing.

The financial value chain offers a different type of leverage. When optimized, it unlocks value that is self-funding, recurring, and resilient. Cash agility enhances decision velocity, reduces the cost of capital, and minimizes execution risk. It also makes businesses more attractive at exit by demonstrating financial discipline, liquidity control, financial risk awareness, and systems maturity.

This is especially relevant in today’s environment, where LPs and acquirers scrutinize not just the numbers, but the operating backbone behind them. Clean books and good margins are important—but increasingly, buyers want to know: Can this company manage growth? Can it scale its financial systems? Can it fund itself efficiently?

These are questions cash agility helps answer.

Where Zanders Fits In

Zanders works with both PE sponsors and portfolio companies to build this capability. Our role is to serve as an external financial operating partner—focused on aligning finance execution with investment priorities.

We combine strategic thinking with hands-on implementation, delivering projects that range from working capital diagnostics to full treasury transformations. Our clients include global PE houses, mid-market sponsors, and management teams navigating carve-outs, integrations, and digital finance initiatives.

What distinguishes our approach is the focus on outcome: measurable improvements in cash visibility, efficiency, and control—not just slideware or process maps. We work with the CFO, but we work for the investor.

Cash Agility as a Strategic Outcome

Ultimately, cash agility is not an end in itself. It’s the enabler that turns capital into capability.

It allows portfolio companies to reinvest faster, fund growth internally, and respond to uncertainty with confidence. It gives CFOs the tools they need to lead, not lag, in execution. And it helps investors translate operational improvements into multiples—by building the infrastructure of repeatability.

In a market where capital is no longer cheap, and execution risk is rising, agility is not a luxury. It’s a requirement.